The ASRock Rack EPYCD8-2T Motherboard Review: From Naples to Rome

by Gavin Bonshor on April 20, 2020 9:00 AM EST - Posted in:

- Motherboards

- AMD

- Workstation

- server

- ASRock Rack

- Naples

- Rome

- EPYC 7351P

- EPYCD8-2T

Visual Inspection

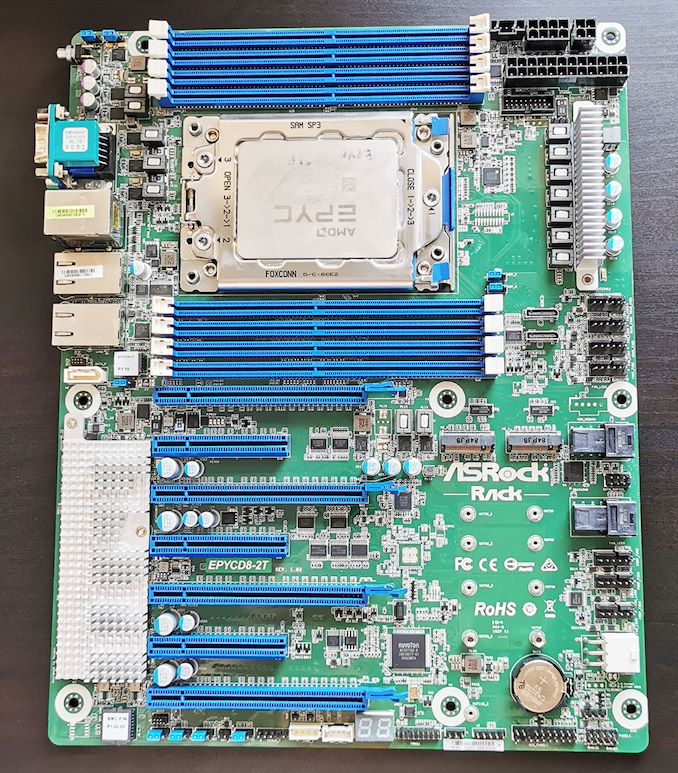

The ASRock Rack EPYCD8-2T is an ATX motherboard that supports both AMD EPYC 7001 (Naples) and EPYC 7002 (Rome) single-socket processors. The EPYCD8-2T has a single transposed socket to allow for optimal airflow when used within a 1U chassis, but this model also fits regular chassis that support ATX motherboards. ASRock Rack is using a green PCB with blue memory slots and blue PCIe 3.0 slots, with silver aluminium heatsinks for the power delivery and controller set.

Even though the EPYCD8-2T is using an ATX-sized PCB, ASRock Rack has managed to fit a total of seven PCIe 3.0 slots: four full-length PCIe 3.0 x16 slots and three open-ended half-length PCIe 3.0 x8 slots. From top to bottom, these slots operate at x16/x8/x16/x8/x16/x8/x16.
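To put that slot layout in perspective, here is a rough back-of-the-envelope sketch in Python that totals the lanes and estimates the theoretical one-direction bandwidth of each slot. It assumes the standard PCIe 3.0 figures of 8 GT/s per lane with 128b/130b encoding and ignores protocol overhead, so real-world throughput will be lower. The names `SLOT_LANES`, `lane_gbytes_per_s` and `slot_gbytes_per_s` are purely illustrative.

```python
# Slot widths from top to bottom, as listed in the review.
SLOT_LANES = [16, 8, 16, 8, 16, 8, 16]

# Assumed PCIe 3.0 link parameters: 8 GT/s per lane, 128b/130b encoding.
GT_PER_S = 8.0
ENCODING_EFFICIENCY = 128 / 130


def lane_gbytes_per_s() -> float:
    """Theoretical payload rate of one PCIe 3.0 lane, one direction, in GB/s."""
    return GT_PER_S * ENCODING_EFFICIENCY / 8  # divide by 8 to go from bits to bytes


def slot_gbytes_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a slot with the given lane count."""
    return lanes * lane_gbytes_per_s()


# Four x16 slots plus three x8 slots.
total_lanes = sum(SLOT_LANES)

if __name__ == "__main__":
    for position, lanes in enumerate(SLOT_LANES, start=1):
        print(f"Slot {position}: x{lanes}, about {slot_gbytes_per_s(lanes):.2f} GB/s")
    print(f"Total lanes across all slots: {total_lanes}")
```

By this estimate the seven slots account for 88 lanes in total, with each x16 slot topping out at just under 16 GB/s in each direction and each x8 slot at roughly half that.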

For this model to support EPYC 7002 processors, users who end up with one of the earlier runs will need to perform a BIOS update. (Our board was so fresh that it only came with a 16 MB chip, whereas a 32 MB BIOS chip is needed. ASRock informs us all retail units have the 32 MB chip.)

For storage, the ASRock Rack EPYCD8-2T can support up to nine SATA devices. This is possible through two mini-SAS HD ports, each supporting up to four SATA devices, plus a SATA DOM port on the board. In addition to the SATA connectivity, there are two PCIe 3.0 x4 M.2 slots, which also support SATA M.2 drives. Although the EPYCD8-2T doesn't include U.2 ports directly, it includes two OCuLink ports so U.2 drives can be used.

Touching on cooling support, the EPYCD8-2T has a total of seven 6-pin fan headers: one for a CPU fan and six for chassis fans. Four of the chassis headers are assigned to front fans and two to rear fans, which signifies official support for users looking to install this board into a 1U chassis. For power, a 24-pin 12 V ATX connector provides motherboard power, with an 8-pin and a 4-pin connector for CPU power. ASRock Rack also includes a 6-pin connector to provide additional power to the PCIe 3.0 slots.

Along the bottom of the board are a number of headers, including a USB 2.0 header which supports two additional ports, as well as TR1, TPM, BMC_SMB and IPMB headers. A two-digit LED debugger is also present for troubleshooting potential POST failures. A large silver aluminium heatsink is located in the bottom left-hand corner of the board, which keeps the board's controller set cool. This includes the Aspeed AST2500 BMC and Intel X550 dual 10 G Ethernet controller, as well as the Realtek RTL8211E Gigabit controller designated for the board's IPMI.

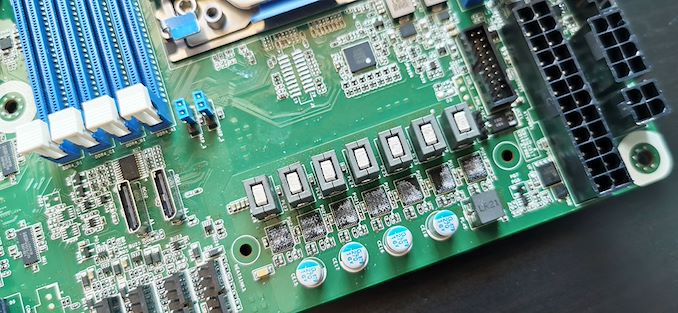

The power delivery on the ASRock Rack EPYCD8-2T uses a basic 7-phase configuration for the CPU. The power delivery does include a small heatsink, which uses screws to retain pressure. The EPYCD8-2T supports up to 64-core EPYC 7002 processors and is more than capable of powering such a professional-grade processor.

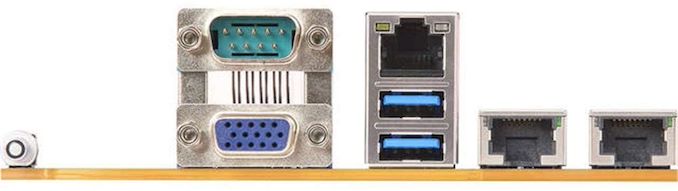

The rear panel offers very little in the way of inputs and outputs, with just two USB 3.1 G1 Type-A ports present. To enable more, users will need to make use of the single USB 3.1 G1 header, which offers two additional ports. A single USB 2.0 header also provides two extra ports, bringing the board's total USB support up to six when factoring in the ports on the rear panel. Located above the two USB 3.1 G1 Type-A ports is a dedicated management Gigabit port driven by the Realtek RTL8211E, with a further two 10 G Ethernet ports powered by an Intel X550 10 G controller. Also present is a UID button with LED, a D-sub video output powered by the Aspeed AST2500 BMC, and a serial port.

What's in the Box

Included in the retail packaging is a small yet effective accessories bundle: a rear panel I/O shield, a single SATA cable, a mini-SAS to four SATA port cable, two M.2 installation screws, and a quick installation guide. ASRock doesn't distinguish between retail packaging and bulk packaging on the official product site.

- SATA cable

- mini SAS to four SATA cable

- 2 x M.2 installation screws

- Quick installation guide

- Rear panel I/O shield

34 Comments


SampsonJackson - Monday, April 20, 2020 - link

That is absolutely incorrect. We do it with Infiniband cards via RDMA and easily saturate multiple 100Gbps cards. Der8auer demonstrated ~28GB/s on a RAID0 using Threadripper 1st gen (~224Gbps) and was only limited by the RAID driver thread saturating a CPU core.. further scaling is possible using the inbox NVMe driver (up to endpoint/bus saturation). Are these realistic workloads? No. Is it possible? No problem.

vFunct - Monday, April 20, 2020 - link

CPUs on media servers have been saturating 100G for years now. Netflix is doing that, for example. https://netflixtechblog.com/serving-100-gbps-from-...

vFunct - Monday, April 20, 2020 - link

And they're delivering 200gbps now: https://wccftech.com/netflix-evaluating-replacing-...

brunis.dk - Monday, April 20, 2020 - link

I think ASSRock should just rename themselves to ASRack for simplicity.

kobblestown - Monday, April 20, 2020 - link

What's with the 6-pin fan connectors? Can I plug a regular 4-pin PWM fan into it?

dotes12 - Monday, April 20, 2020 - link

I looked up the user manual and yes, it's keyed so that both a normal 3-pin and 4-pin fan will work with the 6-pin motherboard connector without an adapter. It appears that the extra two pins are used for a temperature sensor that's built into the fan. Per the manual, pin 5 is labeled "Sensor" and pin 6 is labeled "NC", and the custom fan speed has an option called "Smart Fan Temp Control" where you can have it increase a specific fan speed based on the temperature the fan is reporting.

kobblestown - Monday, April 20, 2020 - link

Oh, that's cool. Thanks for checking it out.

cygnus1 - Monday, April 20, 2020 - link

I was originally going to say "WTF are they thinking releasing such a high end AMD board in 2020 that doesn't support PCIe 4.0 when the appropriate CPU is installed. What a waste." But then I realized this board is about a year old already. As others mentioned below, the ROMED8-2T is almost the replacement for this year old board being reviewed. The biggest thing missing from that one is the x16 slots. And for whatever reason they didn't leave the x8 slots open ended to allow for x16 cards to fit.

WaltC - Monday, April 20, 2020 - link

This motherboard is a cheap EPYC *server* mboard, and that is all it is...;) Keyword being "cheap"--paring down the system bus to PCIe3.x cuts the system bandwidth in half, compared with 4.0, which translates to manufacturing a lower-cost mboard relative to the layers needed to properly support the signal integrity of a PCIe4.0 system bus. A PCIe3.x system bus also requires less power than PCIex4. It's easy to forget, I suppose, that PCIe4 is *double* the bandwidth of PCIe3. But as a cheap server mboard, PCIe4 may not be a better fit than PCIe3.x.

This "review" is a bit strange, imo...;) Not only does it directly compare different mboards, but it also compares those mboards running different CPUs, as well, as if to illustrate some obscure point. I would have done things a bit differently, like, for instance, restricting my choice of motherboards to those server boards capable of running this CPU--and *actually running* the EPYC CPU featured here...;) Maybe throw in a couple of system bandwidth tests and applications to illustrate advantages of the increased bandwidth PCIe4x brings to the table, along with extra costs, etc. Otherwise, what one winds up comparing are CPUs instead of motherboards, imo. As server mboards go, this one is not "high end" at all--it's actually a "budget" server mboard, imo--hence the compromises with system bus bandwidth, etc. Simply put, this mboard was not designed to "compete" with "enthusiast-class" retail mboards used for gaming--as should be obvious. People looking for budget-class server motherboards for EPYC-class cpus won't care about PCIe4, the "colors" used, RGB, multi-GPUs, etc. Those things add to cost and energy consumption, and, of course, superficial color schemes/RGB offer no power efficiency or performance enhancements of any kind.

mjz_5 - Monday, April 20, 2020 - link

I hope it’s running the enterprise version of Windows 10 because that has better performance for high core count computers.