Arm's New Cortex-A77 CPU Micro-architecture: Evolving Performance

by Andrei Frumusanu on May 27, 2019 12:01 AM EST

The Cortex-A77 µarch: Going For A 6-Wide* Front-End

The Cortex-A76 represented a clean-sheet design in terms of its microarchitecture, with Arm implementing from scratch the knowledge and lessons of years of CPU design. This allowed the company to design a new core that was forward-thinking in terms of its microarchitecture. The A76 was meant to serve as the baseline for the next two designs from the Austin family: today’s new Cortex-A77 as well as next year’s “Hercules” design.

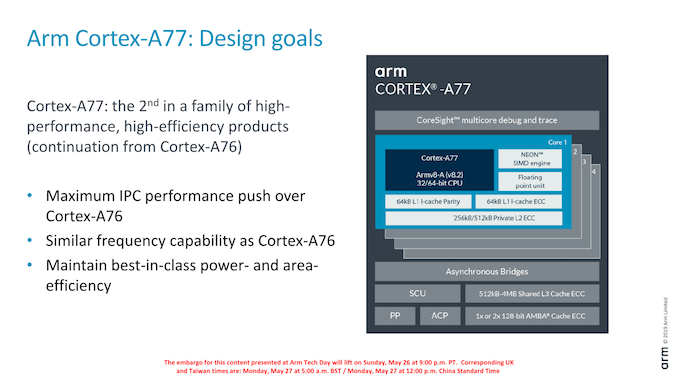

The A77 pushes new features with the primary goal of increasing the IPC of the microarchitecture. Arm’s goal this generation is a continuation of its focus on delivering the best PPA (power, performance, area) in the industry, meaning the designers were aiming to increase the performance of the core while maintaining the excellent energy efficiency and area characteristics of the A76 core.

In terms of frequency capability, the new core remains in the same frequency range as the A76, with Arm targeting 3GHz peak frequencies in optimal implementations.

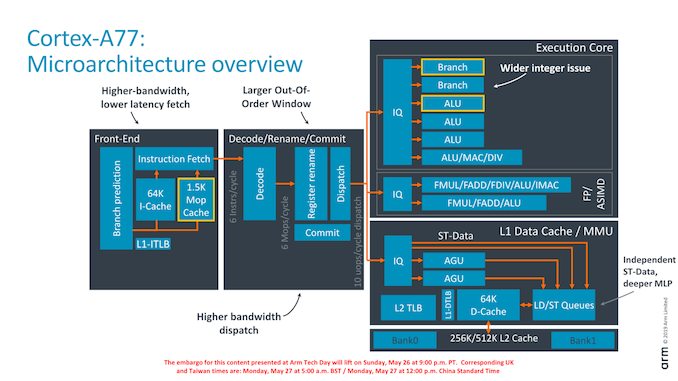

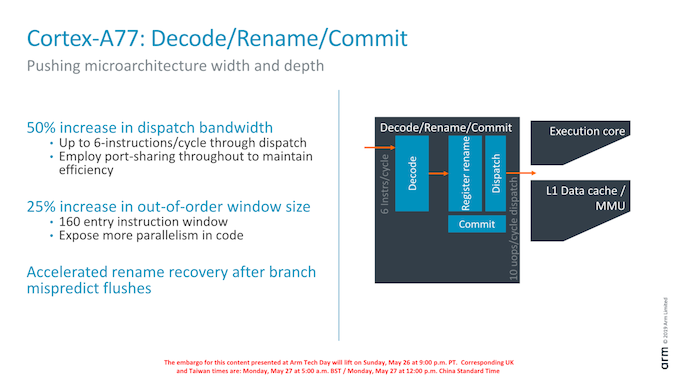

As an overview of the microarchitectural changes, Arm has touched almost every part of the core. Starting from the front-end we’re seeing a higher fetch bandwidth with a doubling of the branch predictor capability, a new macro-OP cache structure acting as an L0 instruction cache, a wider middle core with a 50% increase in decoder width, a new integer ALU pipeline and revamped load/store queues and issue capability.

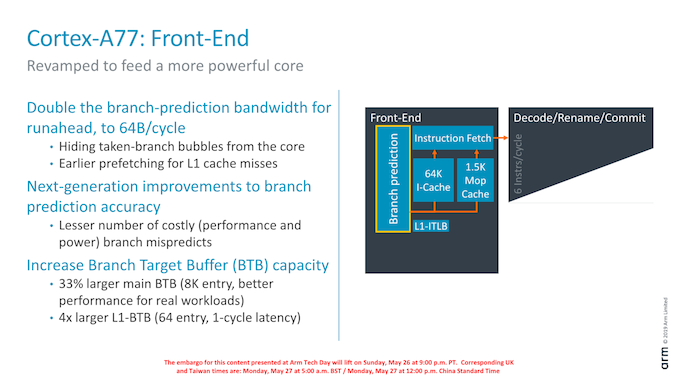

Delving deeper into the front-end, a major change in the branch predictor is that its runahead bandwidth has doubled from 32B/cycle to 64B/cycle. The reason for this increase is the generally wider and more capable front-end: the branch predictor’s speed needed to improve in order to keep the middle-core sufficiently fed. Arm instructions are 32 bits wide (16b for Thumb), which means the branch predictor can fetch up to 16 instructions per cycle. This is a higher bandwidth (2.6x) than the decoder width in the middle core, and the reason for this imbalance is to allow the front-end to catch up as quickly as possible whenever there are branch bubbles in the core.
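The bandwidth arithmetic above can be checked with a quick sketch. The function and constant names are illustrative only, and the 6-wide divisor is the middle-core width quoted in this article; 16/6 comes out at roughly 2.67, in line with the ~2.6x figure in the text.

```python
# Back-of-the-envelope check of the branch predictor runahead figures.
ARM_INSTRUCTION_BYTES = 4   # fixed-width 32-bit Arm instructions
MIDDLE_CORE_WIDTH = 6       # instructions/cycle, as quoted in the article

def instructions_per_cycle(bytes_per_cycle, instruction_bytes=ARM_INSTRUCTION_BYTES):
    """Whole instructions covered by one cycle of runahead bandwidth."""
    return bytes_per_cycle // instruction_bytes

a76_rate = instructions_per_cycle(32)   # A76: 32B/cycle
a77_rate = instructions_per_cycle(64)   # A77: 64B/cycle
ratio_to_middle_core = a77_rate / MIDDLE_CORE_WIDTH

print(a76_rate, a77_rate, round(ratio_to_middle_core, 2))
```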

The branch predictor’s design has also changed, lowering branch mispredicts and increasing its accuracy. Although the A76 already had a very large Branch Target Buffer capacity with 6K entries, Arm has increased this again by 33% to 8K entries with the new generation design. Seemingly Arm has dropped a level of the BTB hierarchy: The A76 had a 16-entry nanoBTB and a 64-entry microBTB – on the A77 this looks to have been replaced by a 64-entry L1 BTB with 1 cycle of latency.

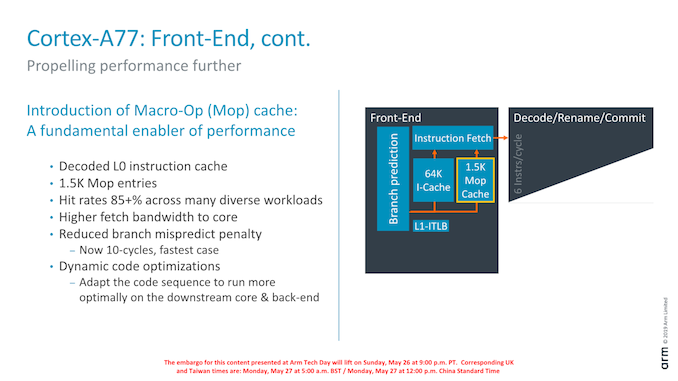

Another major feature of the new front-end is the introduction of a Macro-Op cache structure. For readers familiar with AMD and Intel’s x86 processor cores, this might sound familiar and akin to the µOP/MOP cache structures in those cores, and indeed one would be correct in assuming they have similar functions.

In effect, the new Macro-OP cache serves as an L0 instruction cache, containing already decoded and fused instructions (macro-ops). In the A77’s case the structure is 1.5K entries big, which, if one assumes macro-ops have a similar 32-bit density to Arm instructions, would equate to about 6KB.
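The 6KB estimate follows directly from the entry count, as the sketch below shows. The 4-byte-per-entry figure is the same assumption made in the text, not a number published by Arm, and the names are illustrative.

```python
# Rough size estimate for the macro-op cache.
MOP_CACHE_ENTRIES = 1536          # "1.5K entries", taking K as 1024
ASSUMED_BYTES_PER_MACRO_OP = 4    # assumption: same 32-bit density as Arm instructions

def mop_cache_bytes(entries=MOP_CACHE_ENTRIES, bytes_per_entry=ASSUMED_BYTES_PER_MACRO_OP):
    """Estimated storage for the macro-op payload, ignoring tags and metadata."""
    return entries * bytes_per_entry

size_kb = mop_cache_bytes() / 1024
print(size_kb)
```

Real macro-ops likely carry more state than a raw instruction word, so this is best read as a lower bound on the payload size.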

The peculiarity of Arm’s implementation of the cache is that it’s deeply integrated with the middle-core. The cache is filled after the decode stage (in a decoupled manner), after instruction fusion and optimisations. In case of a cache hit, the front-end feeds directly from the macro-op cache into the rename stage of the middle-core, shaving a cycle off the effective pipeline depth of the core. What this means is that the core’s branch mispredict latency has been reduced from 11 cycles down to 10 cycles, even though it has the frequency capability of a 13 cycle design (+1 decode, +1 branch/fetch overlap, +1 dispatch/issue overlap). While we don’t have directly comparable figures for newer cores, Arm’s figure here is outstandingly good, as other cores have significantly worse mispredict penalties (Samsung M3, Zen1, Skylake: ~16 cycles).
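One way to read the parenthetical is that each "+1" is a cycle saved relative to a nominal 13-cycle design. The sketch below encodes that interpretation; the breakdown and all names are assumptions made for illustration rather than a description of Arm's actual pipeline.

```python
# Mispredict penalty under the "13-cycle design minus overlaps" reading.
NOMINAL_PIPELINE_DEPTH = 13

# Cycles assumed to be saved; the first two apply to both A76 and A77.
BRANCH_FETCH_OVERLAP = 1
DISPATCH_ISSUE_OVERLAP = 1
DECODE_BYPASS_ON_MOP_HIT = 1   # only when fetching from the macro-op cache

def mispredict_penalty(mop_cache_hit):
    """Effective branch mispredict latency in cycles."""
    saved = BRANCH_FETCH_OVERLAP + DISPATCH_ISSUE_OVERLAP
    if mop_cache_hit:
        saved += DECODE_BYPASS_ON_MOP_HIT
    return NOMINAL_PIPELINE_DEPTH - saved

print(mispredict_penalty(mop_cache_hit=False))  # A76-style path through decode
print(mispredict_penalty(mop_cache_hit=True))   # A77 on a MOP-cache hit
```

Under this reading, the 11-cycle figure corresponds to the path through the decoders and the 10-cycle figure to a macro-op cache hit.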

Arm’s rationale for going with a 1.5K entry cache size is that they were aiming for an 85% hit-rate across their test suite workloads. Having less capacity would reduce the hit-rate more significantly, while going for a larger cache would have diminishing returns. Compared to a 64KB L1 cache, the 1.5K-entry MOP cache takes up about half the area.

What the MOP cache also allows is a higher bandwidth to the middle-core. The structure is able to feed the rename stage with 64B/cycle – again significantly higher than the rename/dispatch capacity of the core, and again this imbalance with a more “fat” front-end bandwidth allows the core to quickly hide branch bubbles and pipeline flushes.

Arm talked a bit about “dynamic code optimisations”: Here the core will rearrange operations to better suit the back-end execution pipelines. It’s to be noted that “dynamic” here doesn’t mean it’s actually programmable in what it does (akin to Nvidia’s Denver code translations); the logic is fixed to the design of the core.

Finally getting to the middle-core, we see a big uplift in the bandwidth of the core. Arm has increased the decoder width from 4-wide to 6-wide.

Correction: The Cortex A77’s decoder remains at 4-wide. The increased middle-core width lies solely at the rename stage and afterwards; the core still fetches 6 instructions, however this bandwidth only happens in case of a MOP-cache hit which then bypasses the decode stage. In MOP-cache miss-cases, the limiting factor is still the decoder which remains at 4 instructions per cycle.
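To get a feel for what the correction implies, a crude weighted average can combine the 6-wide MOP-cache path, the 4-wide decode path, and the 85% hit-rate target mentioned earlier. This is a simplified model under the assumption that every cycle is cleanly either a hit or a miss; it is not a figure from Arm, and the names are illustrative.

```python
# Crude weighted-average model of front-end delivery width (an assumption,
# not a figure from Arm): every cycle is either a MOP-cache hit or a miss.
MOP_HIT_WIDTH = 6   # instructions/cycle into rename on a MOP-cache hit
DECODE_WIDTH = 4    # instructions/cycle through the decoders on a miss

def average_front_end_width(hit_rate):
    """Average instructions/cycle delivered for a MOP-cache hit rate in [0, 1]."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * MOP_HIT_WIDTH + (1.0 - hit_rate) * DECODE_WIDTH

target_width = average_front_end_width(0.85)
print(round(target_width, 2))
```

Real workloads will deviate from this, since hits and misses cluster and the back-end is rarely able to consume the full width anyway.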

The increased width also warranted an increase of the reorder buffer of the core, which has gone from 128 to 160 entries. It’s to be noted that such a change was already present in Qualcomm’s variant of the Cortex-A76, although we were never able to confirm the exact size employed. As Arm was still in charge of making the RTL changes, it wouldn’t surprise me if it was the exact same 160 entry ROB.

108 Comments


Retycint - Wednesday, May 29, 2019 - link

This isn't 2012 anymore. A 30% better performance (for instance) isn't going to lead to any real world differences, especially given the fact that most consumers use their phones as a camera/social media machine

jackthepumpkinking6sic6 - Thursday, May 30, 2019 - link

How foolish to actually sit there and act as if that's the only option. First off, not only are those not the only high end option but they clearly said lower cost. Meaning any segment. Even mid and low range. Use your brain before commenting.

Not to mention that despite being similarly priced and having insignificantly different benchmark scores those devices are overall better and more worthy the price.... Though none are worthy of such prices. Just some are more worth it than others.

alysdexia - Monday, December 30, 2019 - link

performance -> speed

Anandtech never explain how they get their power figures; I saw one mention of regression testing under the iPhone XS review but still no work. The figures look more like shared power or peak power than average CPU power as they conflict with general runtime or battery drain tests which suggest 2 watts sustained; I recently took Notebookcheck's loads of power figures to revise my list of the thriftiest CPUs where I found the equivalent TDP somewhere between the load and idles and implied screen, GPU, and memory powers; another way to estimate is to subtract the nonCPU from the power adapter rating which for iPhones is 5W, screen 1W to 2W. I had to throw out Anandtech's SPEC2006 powers.

Androids do not get thriftier chips; iPhones idle better than the average (Use the comparison tool under any Notebookcheck review) and their huge cache seems to save power. (iPhone 11 has 33MB vs. S10 5MB. This makes A13 over 4fold as good as 9820.)

W CPU/(CPU+GPU): select core–unit CPUmark [mobile/60] (Gn/s) {Mp/s/10; LZMA-D Mp/s/10}, Geekbench 5, UserBenchmark Int 2019 Dec: /W, /$

~1·2: Cortex-A77 A13, [11145] (29·3), 1330–3422, : [~9288], ; , ; , Lightning-Thunder

~2: Cortex-A76 A12X, [12591] (45), 1114–4608, : [~6296], ; ~2304, ; , Vortex-Tempest

~1·7: Cortex-A76 A12, [8006] (27), 1111–2869, : [~4709], ; ~1688, ; , Vortex-Tempest

~1·5: Cortex-A73 A10X, [6475] (19·5), 832–2274, : [~4317], ; ~1516, ; , Hurricane-Zephyr

~2: Cortex-A75 A11, [7267] (26·3), 919–2372, : [~3634], ; ~1186, ; , Monsoon-Mistral

~2: Cortex-A76-A55 485, [4429], 767–2715, : [~2214], ; ~1358, ; , Kryo

·003: Cortex-M0+ 1.8V 64MHz, {9}, , : {2870}, ; , ; ,

~2: Cortex-A75-A55-M4 9820, [4298], 762–2148, : [~2149], ; ~1074, ; , Exynos

~2: Cortex-A76-A55 990, [4078], 761–2861, : [~2039], ; ~1431, ; , Kirin

~2·2: Cortex-A73 A10, [4748] (12·6), 744–1333, : [~1976], ; ~606, ; , Hurricane-Zephyr

·012: M14K, {29}, , : {2500}, ; , ; ,

1·7: Cortex-A73-A53 280, [<3031] (), 387–1448, : [<1783], ; 852, ; , Kryo

2? ~(40/53): Cortex-A53 625, [2604], 260–773, : [1725?], ; 387?, ; , Apollo

2·5: Cortex-A75-A55 385, [3940] (), 514–2191, : [1576], ; 876, ; , Kryo

~2: Cortex-A53-M2 8895, [>3031] (), 373–1497, : [>1516], ; ~749, ; , Exynos

~2: Cortex-A57-A53 A9X, [3000] (10·5), 3097–5284, : [~1500], ; , ; , Twister

~1·5: Cortex-A53-A57 A9, [2200] (12·7), , : [~1467], ; , ; , Twister

·001: Cortex-M0+ SAM L21 12MHz, {2}, : {1929}, ; , ; ,

·0033: Cortex-M0 1.8V 50MHz, {6}, , : {1920}, ; , ; ,

·09: SH-X4, {172}, , : {1856}, ; , ; , SH-X4

·000825: Cortex-M4 STM32L412xx 8MHz, {15}, , : {1852}, ; , ; ,

·013: Cortex-M3, {24}, , : {1790}, ; , ; ,

·15: 24K, {235}, , : {1600}, ; , ; ,

~1·8: Cortex-A57-A53 A8X, [2032] (10·6), , : [~1129], ; , ; , Typhoon

·15: 34K, {232}, , : {1455}, ; , ; ,

alysdexia - Monday, December 30, 2019 - link

dammit can't edit:

15 ~3/5: i5-1035G7, : ~, Ice Lake

15 ~11/14: i7-10710U, 13107: ~1112, 30 Comet Lake

15 ~16/19: i5-10210U, : ~, Comet Lake

Findecanor - Monday, May 27, 2019 - link

I wonder what the difference would be if ARM removed AArch32 support like Apple did.

RSAUser - Tuesday, May 28, 2019 - link

We might see it with next gen as Google is dropping 32bit app support on the Play Store. If there is a performance advantage/cost or power saving, they'll probably implement it.

beginner99 - Tuesday, May 28, 2019 - link

However with android you also get the performance at half the price. What these charts don't show is the actual size of the chip and apple SOCs are large and hence more expensive.

michael2k - Thursday, May 30, 2019 - link

ARM isn't competing with Apple and doesn't need to compete with Apple.

ARM licenses out its implementation to those who also cannot compete with Apple.

You get the benefit, as compensation for lower performance, of a cheaper phone.

If you really want something that is as high performance you're going to have to buy from a company willing to invest the money into designing such a CPU, and that requires a different budget than licensing ARM's SoC/CPU designs.

Meteor2 - Monday, June 3, 2019 - link

ARM's cores matched Apple for efficiency with A76 (a breakthrough design), alongside sufficient performance.

With the large increases in IPC per generation we're seeing from ARM, I don't think it will be long before the absolute performance gap is closed either -- if SoC manufacturers choose to equal Apple's peak power draw. They may well not, and nobody will mind.

markiz - Tuesday, June 4, 2019 - link

Out of curiosity, what is it that you want to do on your phone that you feel is too slow on e.g. S855 phone?