The TeamGroup L5 LITE 3D (480GB) SATA SSD Review: Entry-Level Price With Mainstream Performance

by Billy Tallis on September 20, 2019 9:00 AM EST

Posted in: SSDs, SATA, Silicon Motion, SM2258, TeamGroup

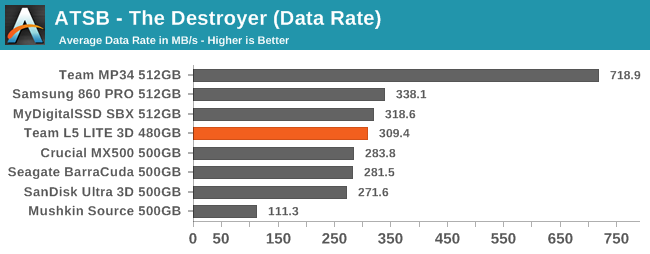

AnandTech Storage Bench - The Destroyer

The Destroyer is an extremely long test replicating the access patterns of very IO-intensive desktop usage. A detailed breakdown can be found in this article. Like real-world usage, the drives do get the occasional break that allows for some background garbage collection and flushing caches, but those idle times are limited to 25ms so that it doesn't take all week to run the test. These AnandTech Storage Bench (ATSB) tests do not involve running the actual applications that generated the workloads, so the scores are relatively insensitive to changes in CPU performance and RAM from our new testbed, but the jump to a newer version of Windows and the newer storage drivers can have an impact.
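To illustrate what capping idle time means for a trace replay, here is a minimal Python sketch. It is not the actual ATSB tooling: the `TraceOp` record, the `cap_idle_times` helper, and the way idle gaps are detected are all assumptions made for illustration. The only detail taken from the text is the 25ms limit.

```python
from dataclasses import dataclass

IDLE_CAP_S = 0.025  # 25 ms idle limit described in the text


@dataclass
class TraceOp:
    """Hypothetical trace record: when an I/O was issued and how long it took."""
    start_s: float
    duration_s: float


def cap_idle_times(ops, idle_cap_s=IDLE_CAP_S):
    """Return replay issue times with every idle gap clamped to idle_cap_s.

    An idle gap is assumed to be the time between the latest completion seen
    so far and the next issue. Overlapping (queued) I/Os keep their relative
    timing because only the excess idle time is subtracted.
    """
    replay_starts = []
    busy_until = 0.0  # latest completion time in the original trace
    removed = 0.0     # total idle time trimmed so far
    for op in sorted(ops, key=lambda o: o.start_s):
        gap = op.start_s - busy_until
        if gap > idle_cap_s:
            removed += gap - idle_cap_s
        replay_starts.append(op.start_s - removed)
        busy_until = max(busy_until, op.start_s + op.duration_s)
    return replay_starts


if __name__ == "__main__":
    trace = [TraceOp(0.0, 0.010), TraceOp(0.020, 0.005), TraceOp(1.025, 0.010)]
    print(cap_idle_times(trace))
```

In this sketch the one-second pause before the third operation shrinks to 25ms, while the shorter 10ms gap before the second operation is left alone.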

We quantify performance on this test by reporting the drive's average data throughput, the average latency of the I/O operations, and the total energy used by the drive over the course of the test.
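As a rough sketch of how these figures could be derived from a completed run, the Python below computes an average data rate, mean latency, 99th percentile latency, and total energy. Everything here is an assumption for illustration rather than the review's actual method: the `Completion` record, the choice to divide bytes by busy time, the nearest-rank percentile, and the fixed-interval power samples are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Completion:
    """Hypothetical record of one finished I/O operation."""
    bytes_moved: int
    latency_s: float
    is_write: bool


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def mean(values):
    return sum(values) / len(values)


def summarize(completions, busy_time_s, power_samples_w, sample_interval_s):
    """Summarize a run. busy_time_s is assumed to exclude idle periods."""
    latencies = [c.latency_s for c in completions]
    reads = [c.latency_s for c in completions if not c.is_write]
    writes = [c.latency_s for c in completions if c.is_write]
    total_bytes = sum(c.bytes_moved for c in completions)
    summary = {
        "avg_data_rate_MBps": total_bytes / busy_time_s / 1e6,
        "avg_latency_s": mean(latencies),
        "p99_latency_s": percentile(latencies, 99),
        # Energy is approximated as the sum of power samples times the sample interval.
        "energy_J": sum(power_samples_w) * sample_interval_s,
    }
    if reads:
        summary["avg_read_latency_s"] = mean(reads)
        summary["p99_read_latency_s"] = percentile(reads, 99)
    if writes:
        summary["avg_write_latency_s"] = mean(writes)
        summary["p99_write_latency_s"] = percentile(writes, 99)
    return summary
```

Reporting the 99th percentile alongside the mean matters because a drive can have a good average while still stalling badly on a small fraction of operations.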

[Charts: Average Data Rate; Average Latency (overall, read, write); 99th Percentile Latency (overall, read, write); Energy Usage]

Any expectations that the TeamGroup L5 LITE 3D would perform like an entry-level SSD are shattered by the results from The Destroyer. The L5 LITE 3D has about the best overall data rate that can be expected from a TLC SATA drive. The latency scores are generally competitive with other mainstream TLC SATA drives and unmistakably better than the DRAMless Mushkin Source. Even the energy efficiency is good, though not quite able to match the Samsung 860 PRO.

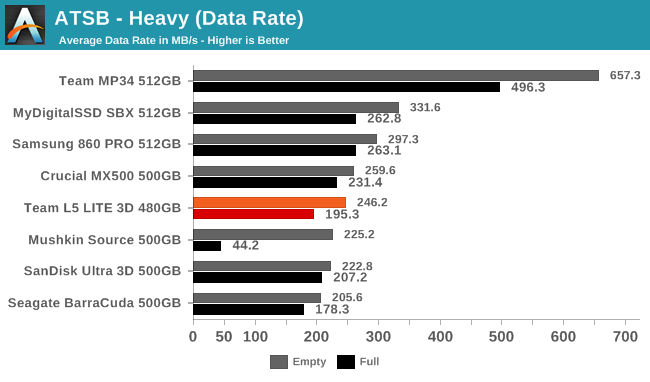

AnandTech Storage Bench - Heavy

Our Heavy storage benchmark is proportionally more write-heavy than The Destroyer, but much shorter overall. The total writes in the Heavy test aren't enough to fill the drive, so performance never drops down to steady state. This test is far more representative of a power user's day to day usage, and is heavily influenced by the drive's peak performance. The Heavy workload test details can be found here. This test is run twice, once on a freshly erased drive and once after filling the drive with sequential writes.

[Charts: Average Data Rate; Average Latency (overall, read, write); 99th Percentile Latency (overall, read, write); Energy Usage]

On the Heavy test, the L5 LITE 3D starts to show a few weaknesses, particularly with its full-drive performance—latency clearly spikes and overall throughput drops more than for most mainstream TLC drives. The effect is vastly smaller than the full-drive penalty suffered by the DRAMless competitor. The energy efficiency doesn't stand out.

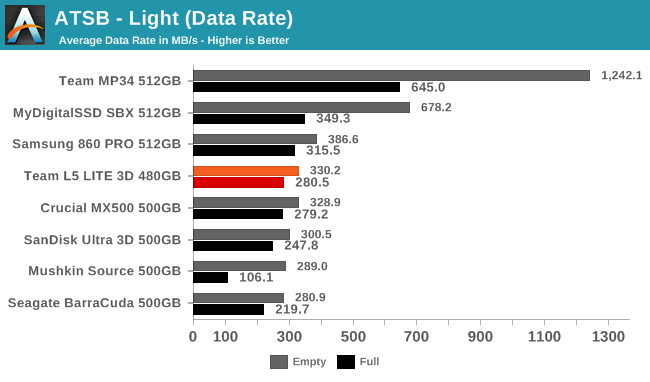

AnandTech Storage Bench - Light

Our Light storage test has relatively more sequential accesses and lower queue depths than The Destroyer or the Heavy test, and it's by far the shortest test overall. It's based largely on applications that aren't highly dependent on storage performance, so this is a test more of application launch times and file load times. This test can be seen as the sum of all the little delays in daily usage, but with the idle times trimmed to 25ms it takes less than half an hour to run. Details of the Light test can be found here. As with the ATSB Heavy test, this test is run with the drive both freshly erased and empty, and after filling the drive with sequential writes.

[Charts: Average Data Rate; Average Latency (overall, read, write); 99th Percentile Latency (overall, read, write); Energy Usage]

The Team L5 LITE 3D has basically the same overall performance on the Light test as drives like the Crucial MX500. A handful of the latency scores are a bit on the high side, but don't really stand out—the Seagate BarraCuda that uses the old Phison S10 controller with current 3D TLC has more trouble on the latency front, and of course the DRAMless Mushkin Source has by far the worst full-drive behavior. There is a bit of room for improvement on the L5 LITE 3D's energy efficiency, since both the Mushkin Source and Crucial MX500 are clearly better for the empty-drive test runs. The Team drive's efficiency isn't anything to complain about, though.

42 Comments


jabber - Saturday, September 21, 2019 - link

Always be wary of 1 Star tech reviews on Amazon. 60% of them are usually disgruntled "Doesn't work on Mac!" reviews.

flyingpants265 - Saturday, September 21, 2019 - link

"They're a biased sample, as very happy and very unhappy people tend to self-report the most. Which doesn't mean what you state is untrue, but it's not something we can corroborate."

Ryan, that doesn't explain why one model/brand can have 27% 1-star reviews, and another has 7%.... Unless you think Team Group customers are SEVERAL TIMES MORE outspoken than Crucial/Samsung/whatever customers for some reason. You can't ignore those reports. Ofc the product doesn't have a 27% failure rate, but it's likely much higher than competing products.

Korguz - Saturday, September 21, 2019 - link

ever consider that maybe the bad reviews are either fake, or made up reviews with the person not actually owning, or even having bought, the product?

flyingpants265 - Monday, September 23, 2019 - link

...Then you'd have to explain why ONLY TEAM GROUP SSDs have tons of fake 1-star reviews, and other SSDs don't. Seems Anandtech commenters are not that bright..

Korguz - Sunday, September 29, 2019 - link

maybe one person who bought one, it failed, then to try to get even, created more than one account? unless you can PROVE these supposed 1 star reviews are real reviews, then i guess you are not that bright as well... can't really prove your point, so you resort to insults.. grow up

Kraszmyl - Friday, September 20, 2019 - link

I have nearly a thousand of their drives from 128g to 480g and so far no failures. Also yes cheap products have poor support, that's one of the reasons why they are cheaper.

Samus - Saturday, September 21, 2019 - link

I'd err on the side of caution when dealing with Team Group, though. Failed memory (which I've seen plenty of over the years) is one thing, but failed data storage is a lot more catastrophic. I can't believe I'm saying this but I'd feel safer with an ADATA SSD than a Team Group SSD...and I've seen a number of ADATA's fail, though none recently (in the last few years)

bananaforscale - Saturday, September 21, 2019 - link

It's your responsibility to make backups. Never allow a single point of failure.

philehidiot - Saturday, September 21, 2019 - link

Aye, especially with SSDs where data recovery is harder than with a HDD. Personally, I pop critical data on two local SSDs and then a memory stick, phone or other system. I don't like the cloud as it is at the mercy of the Internet or another company and I've had access issues which have denied me access to data or, weirdly, only given me access to months old versions. So I prefer a dual local backup, so if a drive fails I can just switch to the backup immediately, and also another copy which is not linked to the same system in case of some catastrophic PSU weirdness that takes out other components (happened once a long time ago and with a cheap PSU) or malware attacks.

If I was getting a cheap SSD with a reduced warranty, knowing it uses whatever NAND is cheap at the time, I'd not be using that in a critical system without adequate redundancy (RAID, most likely). You pays your money and takes your choice but if you buy cheap, ensure you're protected... And if you buy expensive.... Ditto.

evernessince - Saturday, September 21, 2019 - link

Technically speaking the failure rates should be no higher than other manufacturers, after all they are using the same NAND and controllers as everyone else. That said there is something to be said for poor customer service. I don't know how they are getting that many 1 star reviews though, not unless they are just rebranding B stock.

Also, you shouldn't trust only one source for reviews and you should always look at who is posting the bad reviews. For example, this guy seems to be the exact same guy who posted on Newegg as well

https://www.amazon.com/gp/profile/amzn1.account.AF...

He seems to leave a lot of bad reviews and oftentimes does not do a good job explaining why. Judging by their English usage, I'd also say they are not a native speaker. There are plenty of companies in China that also pay people to go out and write both good and bad reviews for competing products, which makes research on reviewers all the more important.

I'm not saying they don't deserve their rating, I'm just saying you should always check not just the reviews but the reviewers as well. 2 sources minimum as well. It's a PITA but there are so many fake reviews out there (especially on Amazon) that it's required if you want to get what you paid for.