Imagination Announces A-Series GPU Architecture: "Most Important Launch in 15 Years"

by Andrei Frumusanu on December 2, 2019 8:00 PM EST

New ISA & ALUs: An Extremely Wide Architecture

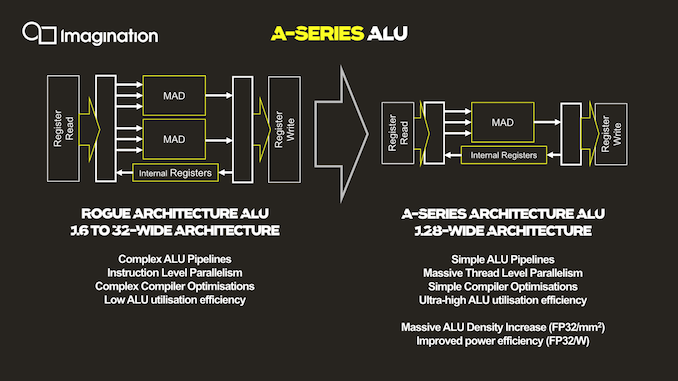

As mentioned, the ALU architecture as well as the ISA of the new A-Series is fundamentally different from past Imagination GPUs, and in fact is very different from any other publicly disclosed design.

The key characteristic of the new ALU design is that it’s now significantly wider than what was employed on the Rogue and Furian architectures, going up to a width of 128 execution units per cluster.

For context, the Rogue architecture used 32-thread-wide wavefronts, but a single SIMD was only 16 slots wide. As a result, Rogue required two cycles to execute each instruction for a full 32-wide wavefront. This was physically widened to 32-wide SIMDs in the 8XT Furian series, which executes a 32-wide wavefront in a single cycle, and was again increased to 40-wide SIMDs in the 9XTP series.
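As a rough illustration of this arithmetic, the number of cycles needed to push one instruction through a whole wavefront is the wavefront width divided by the SIMD width, rounded up. The minimal Python sketch below assumes one SIMD pass per cycle and ignores pipelining; the 9XTP is left out because only its SIMD width, not its wavefront width, is given here, and the A-Series entry assumes a 128-wide wavefront on a 128-wide SIMD as described in this article.

```python
import math

def cycles_per_wavefront(wave_width: int, simd_width: int) -> int:
    """Cycles to issue one instruction for every thread in a wavefront,
    assuming one pass of the SIMD per cycle (pipelining ignored)."""
    if wave_width <= 0 or simd_width <= 0:
        raise ValueError("widths must be positive")
    return math.ceil(wave_width / simd_width)

# (wavefront width, SIMD width) pairs taken from the text
GENERATIONS = {
    "Rogue": (32, 16),
    "Furian 8XT": (32, 32),
    "A-Series": (128, 128),
}

for name, (wave, simd) in GENERATIONS.items():
    print(f"{name}: {cycles_per_wavefront(wave, simd)} cycle(s) per wavefront")
```

Rogue comes out at two cycles per instruction, while Furian 8XT and the A-Series need one, the A-Series simply covering four times as many threads in that cycle.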

In terms of competing architectures, NVIDIA’s desktop GPUs have been 32-wide for several generations now, while AMD more recently moved from a 4x16 ALU configuration with a 64-wide wavefront to native 32-wide SIMDs and waves (with the backwards compatibility option to cluster together two ALU clusters/CUs for a 64-wide wavefront).

More relevant to Imagination’s mobile market, Arm’s recent GPU releases have also increased the width of their SIMDs, with the data paths growing from 4 units in the Mali-G72, to 2x4 units in the G76 (an 8-wide wave/warp), and finally to a larger, more contemporary 16-wide design with a matching 16-wide wavefront in the upcoming Mali-G77.

Imagination’s new A-Series GPU therefore stands out significantly from the crowd in terms of its core ALU architecture, with the widest SIMD design that we know of.

All of that said, we're a bit surprised to see Imagination use such a wide design. The problem with very wide SIMD designs is that you have to bundle together a very large number of threads in order to keep all of the hardware's execution units busy. To solve this conundrum, a key design change of the A-Series is the vast simplification of the ISA and the ALUs themselves.
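To make the utilization problem concrete: a batch of threads is padded up to a whole number of SIMD passes, and the padded lanes do no useful work. The Python sketch below is a simplified model that only counts idle lanes from partially filled wavefronts (it ignores branch divergence), and the 40-thread batch is a hypothetical example.

```python
import math

def lane_utilization(threads: int, simd_width: int) -> float:
    """Fraction of issued SIMD lanes doing useful work when `threads`
    are packed into as few full-width passes as possible."""
    if threads <= 0 or simd_width <= 0:
        raise ValueError("threads and simd_width must be positive")
    issued_lanes = math.ceil(threads / simd_width) * simd_width
    return threads / issued_lanes

# hypothetical batch of 40 threads on SIMDs of different widths
for width in (16, 32, 128):
    print(width, f"{lane_utilization(40, width):.1%}")
```

With 40 threads, a 16-wide SIMD keeps about 83% of its issued lanes busy, while a 128-wide SIMD keeps only about 31% busy, which is why a design this wide depends on gathering large batches of threads.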

Compared to the Rogue architecture as depicted in the slides, the new A-Series simplifies an execution unit from two Multiply-Add (MADD) units to a single MADD unit. This change was actually made in the Series-8 and Series-9 Furian architectures; however, those designs still kept a secondary MUL unit alongside the MADD, which the A-Series now also does without.

The slide’s depiction of three arrows going into the MADD unit represents the three register sources for an operation: two for the multiply and one for the addition. This is a change from the Furian architecture’s MADD unit ISA, adding an extra register source for the multiply.
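In code terms, a single MADD reads three source registers per lane and writes one result. The Python sketch below models one such instruction across a few lanes of a wavefront; the function and register names are hypothetical, and it does not model whether the hardware rounds once (fused) or twice, which isn't stated here.

```python
def madd(src0: list[float], src1: list[float], src2: list[float]) -> list[float]:
    """One MADD instruction applied lane by lane: dst = src0 * src1 + src2.
    src0 and src1 feed the multiply, src2 feeds the addition."""
    if not (len(src0) == len(src1) == len(src2)):
        raise ValueError("all source registers must cover the same lanes")
    return [a * b + c for a, b, c in zip(src0, src1, src2)]

# four lanes of a hypothetical wavefront
print(madd([1.0, 2.0, 3.0, 4.0], [0.5, 0.5, 0.5, 0.5], [1.0, 1.0, 1.0, 1.0]))
```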

In essence, Imagination has doubled down on the transition from an Instruction-Level Parallelism (ILP) oriented design to one that maximizes Thread-Level Parallelism (TLP). In this respect it’s quite similar to what AMD did with its GCN architecture early this decade, going from an ILP-heavy design to an architecture almost entirely bound by TLP.
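The difference between the two approaches shows up in how execution slots get filled. In an ILP-oriented (VLIW-style) design, the compiler must find independent operations within a single thread to pack into one wide instruction, so a chain of dependent operations leaves slots empty. The Python sketch below is a simplified greedy scheduler, not Imagination's or AMD's actual compiler, and it assumes each result can be consumed one cycle after it issues.

```python
def schedule_vliw(deps: list[list[int]], slots: int) -> int:
    """Cycles needed to issue one thread's ops when up to `slots` mutually
    independent ops can be packed into one instruction word.
    deps[i] lists the earlier ops whose results op i needs."""
    if slots < 1:
        raise ValueError("need at least one slot")
    for op, needed in enumerate(deps):
        if any(d < 0 or d >= op for d in needed):
            raise ValueError("ops may only depend on earlier ops")
    issued_at: dict[int, int] = {}
    remaining = list(range(len(deps)))
    cycle = 0
    while remaining:
        bundle = []
        for op in remaining:
            if len(bundle) == slots:
                break
            # only results issued in an earlier cycle are visible
            if all(d in issued_at for d in deps[op]):
                bundle.append(op)
        for op in bundle:
            issued_at[op] = cycle
            remaining.remove(op)
        cycle += 1
    return cycle

def slot_utilization(deps: list[list[int]], slots: int) -> float:
    """Fraction of instruction slots filled with useful ops."""
    if not deps:
        return 0.0
    return len(deps) / (schedule_vliw(deps, slots) * slots)

chain = [[], [0], [1], [2]]      # each op needs the previous result
independent = [[], [], [], []]   # no dependencies at all
print(slot_utilization(chain, 2), slot_utilization(independent, 2))
```

A dependent chain fills only half of a dual-issue instruction word, whereas independent ops fill it completely. In a TLP-oriented design each lane instead runs a different thread executing the same instruction, so lane occupancy depends on how many threads are available rather than on the dependencies inside any one thread.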

The shift to “massive” TLP, along with the much higher ALU utilization thanks to the simplified instructions, is said to have enormously improved the density of the individual ALUs, with “massive” increases in performance/mm². Naturally, the reduced area and the elimination of redundant transistors also bring an increase in power efficiency.

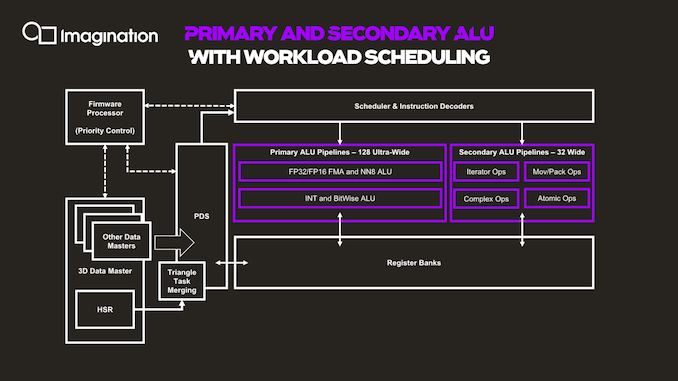

The next graphic describes the data and execution flow in the shader processor.

Things start off with a data master, which kicks off work based on command queues in memory. The 3D data master also handles other fixed-function pre-processing, triggering per-tile hidden surface removal and workload generation for the shader programs. The GPU also has a notion of triangle merging, which groups triangles together into tasks in order to get better ALU utilization and to be able to fill the 128 slots of the wavefront.
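The benefit of triangle merging can be sketched with some simple arithmetic. The Python model below is hypothetical: it assumes each small triangle produces a known number of pixel threads (at most 128) and uses a simple next-fit policy to merge triangles into 128-slot tasks. Imagination hasn't disclosed its actual grouping policy, and the fragment counts are made-up example values.

```python
WAVE_WIDTH = 128  # A-Series wavefront width from the article

def pack_tasks(fragment_counts: list[int], width: int = WAVE_WIDTH,
               merge: bool = True) -> list[list[int]]:
    """Group per-triangle thread counts into tasks of at most `width` slots.
    Hypothetical next-fit policy: keep adding triangles to the open task
    until the next one doesn't fit, then start a new task."""
    tasks: list[list[int]] = []
    for count in fragment_counts:
        if not 0 < count <= width:
            raise ValueError("sketch assumes 1..width threads per triangle")
        if merge and tasks and sum(tasks[-1]) + count <= width:
            tasks[-1].append(count)  # merge into the currently open task
        else:
            tasks.append([count])    # start a new task
    return tasks

def slot_utilization(tasks: list[list[int]], width: int = WAVE_WIDTH) -> float:
    """Fraction of launched wavefront slots that carry real threads."""
    used = sum(sum(task) for task in tasks)
    return used / (len(tasks) * width)

counts = [20, 50, 30, 100, 10]  # hypothetical small triangles
print(slot_utilization(pack_tasks(counts, merge=False)))
print(slot_utilization(pack_tasks(counts)))
```

With these example counts, launching one task per triangle fills about 33% of the slots, while merging them into two tasks fills about 82%.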

The PDS (Programmable Data Sequencer) is a resource allocator and manager. It reserves register space for workloads and manages tasks as they’re allocated to thread slots. The PDS can also prefetch/preload data to local memory for upcoming threads; once a thread’s data is available, its slot becomes active and is dispatched to the execution units by the instruction scheduler and decoder.
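The allocate, preload, and activate sequence can be pictured as a tiny state machine. The Python class below is a toy model for illustration only: `ToyPDS`, its register budget, and its method names are hypothetical and say nothing about how the real PDS is implemented.

```python
class ToyPDS:
    """Hypothetical model: reserve registers for a task, then mark its
    slot active once its input data has been preloaded."""

    def __init__(self, register_budget: int):
        self.register_budget = register_budget
        self.reserved = {}   # task -> registers reserved for it
        self.pending = set() # reserved, still waiting for data
        self.active = []     # data available, ready for the scheduler

    def allocate(self, task: str, registers: int) -> bool:
        used = sum(self.reserved.values())
        if task in self.reserved or used + registers > self.register_budget:
            return False  # duplicate, or not enough register space: task waits
        self.reserved[task] = registers
        self.pending.add(task)
        return True

    def data_ready(self, task: str) -> None:
        # preload finished: the slot becomes active and can be dispatched
        self.pending.remove(task)
        self.active.append(task)

    def retire(self, task: str) -> None:
        # task completed: release its slot and register space
        self.active.remove(task)
        del self.reserved[task]

pds = ToyPDS(register_budget=256)
pds.allocate("a", 128)
pds.allocate("b", 128)
print(pds.allocate("c", 64))  # no room until a task retires
```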

Besides the primary ALU pipeline we described earlier, there’s also a secondary ALU. First, a clarification on the primary ALUs: they also include a separate execution unit for integer and bitwise operations. These units, while separate in their execution, share the same data paths as the floating-point units, so only one or the other can be used at any time. These integer units are what give the A-Series its high AI compute capabilities, with quad-rate INT8 throughput. In a sense, this is very similar to the NN capabilities of Arm’s G76 and G77 through integer dot-product instructions, although Imagination doesn’t go into much detail on exactly what is possible.
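Imagination doesn't detail its INT8 instructions, but the kind of operation Arm exposes for this, a 4-way integer dot product accumulating into 32 bits, can be sketched as below. This is a generic Python model, not Imagination's ISA: the function name is hypothetical, and wraparound on 32-bit overflow is an assumption. If "quad-rate" means four INT8 multiply-accumulates per lane in the time of one FP32 MADD, a 128-lane cluster would perform 128 × 4 = 512 INT8 MACs per cycle; the article doesn't spell out that baseline.

```python
INT32_MIN, INT32_MAX = -(1 << 31), (1 << 31) - 1

def dot4_i8(a: list[int], b: list[int], acc: int = 0) -> int:
    """Four signed 8-bit multiplies summed into a signed 32-bit accumulator,
    wrapping on overflow like a two's-complement int32 register (assumed)."""
    if len(a) != 4 or len(b) != 4:
        raise ValueError("expected four INT8 values per operand")
    if any(not -128 <= v <= 127 for v in a + b):
        raise ValueError("operands must fit in signed 8 bits")
    if not INT32_MIN <= acc <= INT32_MAX:
        raise ValueError("accumulator must fit in signed 32 bits")
    total = acc + sum(x * y for x, y in zip(a, b))
    total &= 0xFFFFFFFF  # keep the low 32 bits
    return total - (1 << 32) if total > INT32_MAX else total

print(dot4_i8([1, 2, 3, 4], [5, 6, 7, 8]))
```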

The secondary pipeline runs at quarter rate, thus executing 32 threads per cycle in parallel. Here we find the more complex instructions that are better executed on dedicated units, such as transcendentals, varying operations and iterators, data conversions, data move operations, and atomic operations.
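The quarter-rate figure translates directly into issue cost: an instruction on the secondary pipeline needs 128 / 32 = 4 cycles to cover a full wavefront, against one cycle on the primary ALU. The Python sketch below turns a hypothetical instruction mix into a range of issue cycles per wavefront; since the article doesn't say whether the two pipelines overlap, it returns a best case (perfect overlap) and a worst case (no overlap).

```python
import math

PRIMARY_LANES = 128                    # primary ALU: full wavefront per cycle
SECONDARY_LANES = PRIMARY_LANES // 4   # quarter rate: 32 threads per cycle

def issue_cycles(primary_ops: int, secondary_ops: int,
                 wave: int = 128) -> tuple[int, int]:
    """Return (best, worst) issue cycles for one wavefront.
    best assumes the two pipelines overlap perfectly, worst assumes none."""
    p = primary_ops * math.ceil(wave / PRIMARY_LANES)
    s = secondary_ops * math.ceil(wave / SECONDARY_LANES)
    return max(p, s), p + s

# hypothetical shader: 20 MADD-type ops plus 2 transcendentals
print(issue_cycles(20, 2))
```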

143 Comments


mode_13h - Tuesday, December 3, 2019

> after over 20 years it's no longer a brand in and of itself

Only 20 years? Pfft.

After 55 years Ford's Mustang is still around, and it's now an electric SUV.

And long after x86 is a thing of the past, you'd better believe Intel will *still* be using the Pentium branding for at least some of their CPUs.

Goshi112112 - Tuesday, December 3, 2019

Good

mode_13h - Wednesday, December 4, 2019

The idea of a super-wide SIMD seems somewhat at odds with tiled rendering. Unless you can scale up your tile sizes (which might be how they got away with it), it seems that it'd be difficult to pack your 128-lane SIMD with conditionally-coherent threads, if you're also limiting the parallelism with a spatial coherency constraint.

lucam - Wednesday, December 4, 2019

I believe IMG has also proposed good solutions in the past. The problem was that they never got to market because they were never licensed. We've only seen some low-midrange solutions in some MediaTek SoCs that never shone, and nobody even bothered.

Now the main question still remains: will IMG be able to license high-end solutions to third parties so that we can get our hands on them?

Otherwise it will still be another paper show-off and nothing more... I am afraid... 😦

mode_13h - Wednesday, December 4, 2019

This is not a new problem for chip (or IP) companies. The job of a good sales & marketing team is to engage with potential customers and figure out what specs their product would need to have to potentially win their business.

Of course, whatever the competition & end-user markets do are wildcards you can't control.

vladx - Wednesday, December 4, 2019

I wouldn't consider the Helio P90 as low-midrange, in fact it's close to a Snapdragon 730 performance-wise.

lucam - Wednesday, December 4, 2019

Indeed... but we only saw this in the market a few months ago, and it's not even the Furian version. The only chips we've seen around have the GE8320... come on... they really are low... low... low range. I wish we had seen some 9XT around, but it didn't happen and perhaps never will. Now I look forward to seeing this new A-Series... but my doubts still remain... I hope to be wrong...

nvmnghia - Saturday, December 7, 2019

So today's smartphones have these for AI:

- DSP
- "neural engine"
- CPU (is there an instruction/separate die area for this?)
- GPU

mpbello - Monday, December 9, 2019

Are they going to offer open source drivers for this new series?

peevee - Monday, December 9, 2019

Why do you call wider vectors "thread-level parallelism"? It seems the opposite of the meaning of threads, since threads must be able to execute different pieces of code.