Workstation Graphics: AGP Cross Section 2004

by Derek Wilson on December 23, 2004 4:14 PM EST

Posted in: GPUs

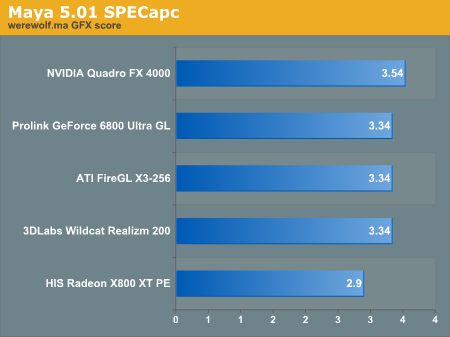

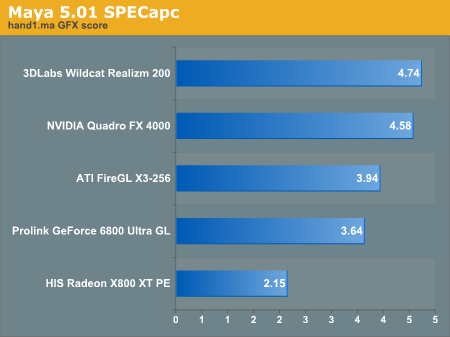

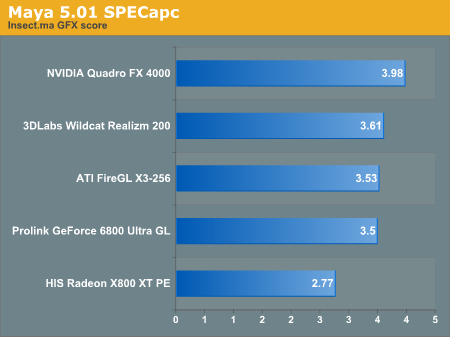

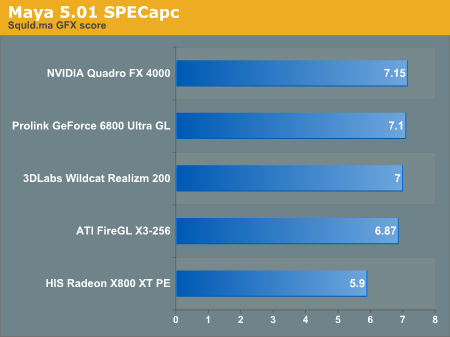

SPECapc Maya 5.01 Performance

The NVIDIA Quadro FX 4000 comes out on top once again in the Maya SPECapc test. The Realizm 200 is able to best the Quadro in the hand1.ma test, but all other tests go in favor of NVIDIA's parts.

Looking deeper into the Maya tests (which we have not listed here), we were able to see that the Quadro and Realizm perform just about dead even in smooth shaded operation under the Maya 5.0 SPECapc. Wireframe operation with both GPUs is also evenly matched in everything but the Insect.ma test (which NVIDIA led).

The performance factor that pushed everything over the top seems to be the way that NVIDIA is able to handle the selected and highlighted modes in Maya. The Quadro FX 4000 was able to beat its competitors every time in these tests.

25 Comments


Sword - Friday, December 24, 2004 - link

Hi again,

I want to add to my first post that there were 2 parts and a complex assembly (>110 very complex parts without simplified rep).

The amount of data to process was pretty high (XP shows >400 MB and it can go up to 600 MB).

About the specific features, I believe that most CAD users do not use them. People like me, mechanical engineers and other engineers, are using software like Pro/E, UGS, Solidworks, Inventor and Catia for solid modeling without any textures or special effects.

My comment was really to point out that the high-end features seem useless in real-world engineering applications.

I still believe that for 3D multimedia content, there is a place for high-end workstation cards and the specviewperf benchmark is a good tool for that.

Dubb - Friday, December 24, 2004 - link

how about throwing in soft-quadro'd cards? when people realize with a little effort they can take a $350 6800GT to near-q4000 performance, that changes the pricing issue a bit.

Slaimus - Friday, December 24, 2004 - link

If the Realizm 200 performs this well, it will be scary to see the 800 in action.

DerekWilson - Friday, December 24, 2004 - link

dvinnen, workstation cards are higher margin -- selling consumer parts may be higher volume, but the competition is harder as well. Creative would have to really change their business model if they wanted to sell consumer parts.

Sword, like we mentioned, the size of the data set tested has a large impact on performance in our tests. Also, Draven31 is correct -- a lot depends on the specific features that you end up using during your normal work day.

Draven31, 3dlabs drivers have improved greatly with the Realizm from what we've seen in the past. In fact, the Realizm does a much better job of video overlay playback as well.

Since one feature of the Quadro and Realizm cards is their ability to run genlock/framelock video walls, perhaps a video playback/editing test would make a good addition to our benchmark suite.

Draven31 - Friday, December 24, 2004 - link

Coming up with the difference between the spec viewperf tests and real-world 3d work means finding out which 'high-end card' features the test is using and then turning them off in the tests. With NVidia cards, this usually starts with antialiased lines. It also depends on whether the application you are running even uses these features... in Lightwave3D, the 'pro' cards and the consumer cards are very comparable performance-wise because it doesn't use these so-called 'high-end' features very extensively.

And while they may be faster in some Viewperf tests, 3dLabs drivers generally suck. Having owned and/or used several, I can tell you any app that uses DirectX overlays as part of its display routines is going to either be slow or not work at all. For actual application use, 3dLabs cards are useless. I've seen 3dLabs cards choke on DirectX apps, and that includes both games and applications that do windowed video playback on the desktop (for instance, video editing and compositing apps).

Sword - Thursday, December 23, 2004 - link

Hi everyone,

I am a mechanical engineer in Canada and I am a fan of anandtech.

Last year I made a very big comparison of mainstream vs. workstation video cards for our internal use (the company I work for).

The goal was to compare the different systems (and mainly video cards) to see if, in Pro-Engineer and the kind of work we do, we could take real advantage of a high-end workstation video card.

My conclusion is very clear: in specviewperf there is a huge difference between mainstream and workstation video cards. BUT, in day-to-day work, there is no real difference in our results.

To summarize, I made a benchmark in Pro/E using the trail files with 3 of our most complex parts. I compared shading, wireframe and hidden line modes, and I also verified the regeneration time for each part. The benchmark was almost 1 hour long. I compared 3D Labs, ATI professional, Nvidia professional and Nvidia mainstream products.

My point is: do not believe specviewperf!! Make your own comparison with your actual day-to-day work to see if you really have to spend $1000 per video card. Also, take the time to choose the right components so you minimize the calculation time.

If anyone at Anandtech is willing to take a look at my study, I am willing to share the results.

Thank you

dvinnen - Thursday, December 23, 2004 - link

I always wondered why Creative (they own 3dLabs) never made a consumer edition of the Wildcat. Seems like a smallish market when it wouldn't be all that hard to expand into consumer cards.

Cygni - Thursday, December 23, 2004 - link

I'm surprised by the power of the Wildcat, really... great for the dollar.

DerekWilson - Thursday, December 23, 2004 - link

mattsaccount, glad we could help out with that :-)

there have been some reports of people getting consumer level drivers to install on workstation class parts, which should give better performance numbers for the ATI and NVIDIA parts under games if possible. But keep in mind that the trend in workstation parts is to clock them at lower speeds than the current highest end consumer level products for heat and stability reasons.

if you're a gamer who's insane about performance, you'd be much better off paying $800 on ebay for the ultra rare uberclocked parts from ATI and NVIDIA than going out and getting a workstation class card.

Now, if you're a programmer, having access to the workstation level features is fun and interesting. But probably not worth the money in most cases.

Only people who want workstation class features should buy workstation class cards.

Derek Wilson

mattsaccount - Thursday, December 23, 2004 - link

Yes, very interesting. This gives me and lots of others something to point to when someone asks why they shouldn't get the multi-thousand dollar video card if they want top gaming performance :)