AMD Ryzen 5 3600 Review: Why Is This Amazon's Best Selling CPU?

by Dr. Ian Cutress on May 18, 2020 9:00 AM EST

Turbo, Power, and Latency

Turbo

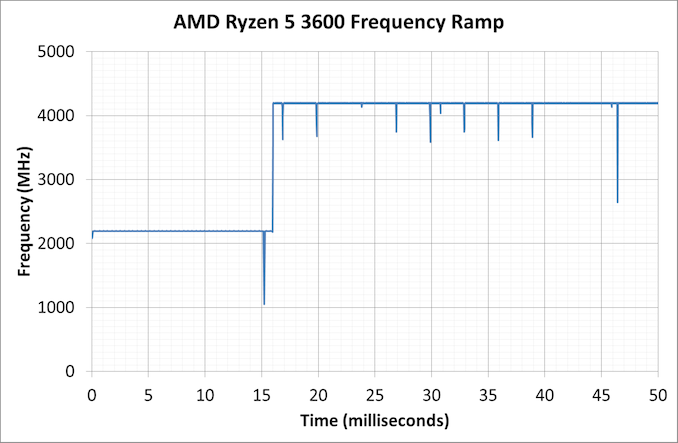

As part of our usual test suite, we run a set of code designed to measure the time taken for the processor to ramp up in frequency. Recently both AMD and Intel have been promoting features new to their processors that govern how quickly they can go from an active idle state into a turbo state. Where previously we were talking about significant fractions of a second, we are now down to milliseconds or individual frames. How quickly the processor reaches a turbo frequency also depends on the silicon design, as enough frequency domains need to be brought up without causing any localised voltage or power issues. Part of this is also down to the OEM implementation of how the system responds to requests for high performance.

Our Ryzen 5 3600 jumped up from a 2.2 GHz high-performance idle all the way to 4.2 GHz in 16 milliseconds, which is just under a single frame on a 60 Hz display (about 16.7 ms). This is right about where machines need to be in order to remain effective for a good user experience, assuming the rest of the system is up to scratch.
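To put the 16 ms figure in context, the frame-time arithmetic can be sketched in a few lines of Python. The function names are ours, chosen for illustration. The only inputs are the ramp time quoted above and the 60 Hz refresh rate.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Duration of one display frame in milliseconds."""
    return 1000.0 / refresh_hz


def frames_elapsed(ramp_ms: float, refresh_hz: float) -> float:
    """How many display frames pass while the CPU ramps to turbo."""
    return ramp_ms / frame_time_ms(refresh_hz)


RAMP_MS = 16.0      # idle-to-turbo time quoted in the text
REFRESH_HZ = 60.0   # display refresh rate used for the comparison

print(f"One frame at {REFRESH_HZ:.0f} Hz lasts {frame_time_ms(REFRESH_HZ):.2f} ms")
print(f"The ramp spans {frames_elapsed(RAMP_MS, REFRESH_HZ):.2f} frames")
```

A result below 1.0 frames means the processor is at full turbo before the next frame is drawn.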

Power

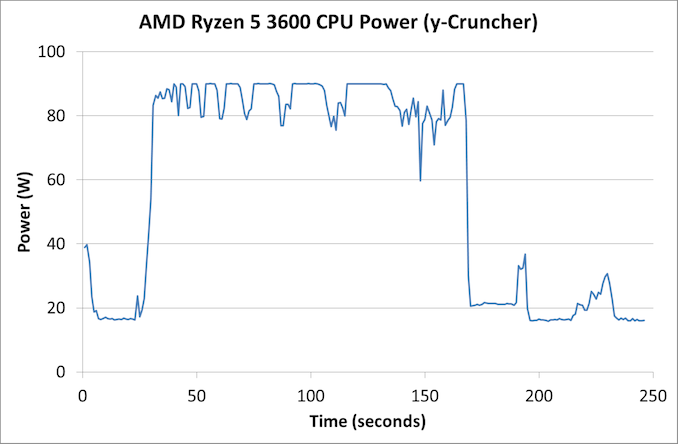

With the Ryzen 5 3600, AMD lists the official TDP of the processor as 65 W. AMD also runs a feature called Package Power Tracking, or PPT, which allows the processor to turbo where possible to a new power value – for 65 W processors that new value is 88 W. This takes into account the power delivery capabilities of the motherboard, as well as the thermal environment. The processor can then manage exactly what frequency to give to the system in 25 MHz increments.
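As a rough illustration of those numbers, the sketch below computes the headroom PPT gives over TDP and snaps a requested frequency onto the 25 MHz grid. Rounding down to the grid is our assumption for the example, and the helper names are hypothetical. AMD's actual boost algorithm weighs power, current and temperature limits and is not reproduced here.

```python
TDP_W = 65.0     # official TDP from the text
PPT_W = 88.0     # Package Power Tracking limit for 65 W parts
STEP_MHZ = 25    # frequency granularity from the text


def ppt_headroom(tdp_w: float, ppt_w: float) -> float:
    """Ratio of the PPT limit to the rated TDP."""
    return ppt_w / tdp_w


def snap_to_step(freq_mhz: float, step_mhz: int = STEP_MHZ) -> int:
    """Round a frequency down to the nearest multiple of the step size.

    Rounding down is an assumption made for this sketch.
    """
    return int(freq_mhz // step_mhz) * step_mhz


print(f"PPT is {ppt_headroom(TDP_W, PPT_W):.2f}x the TDP")
print(f"A 3912 MHz request lands on {snap_to_step(3912)} MHz")
print(f"Steps between 2200 and 4200 MHz: {(4200 - 2200) // STEP_MHZ}")
```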

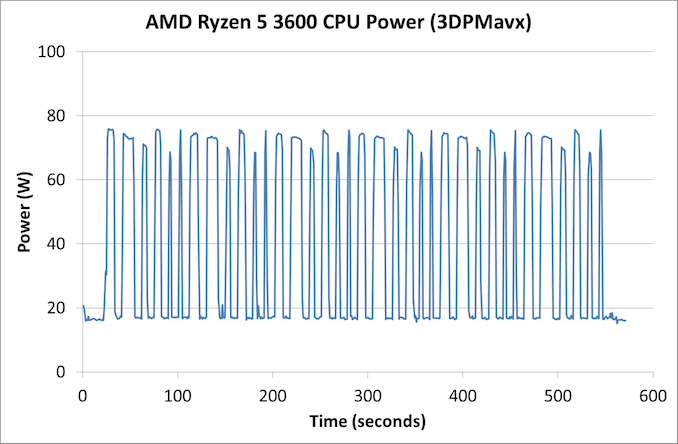

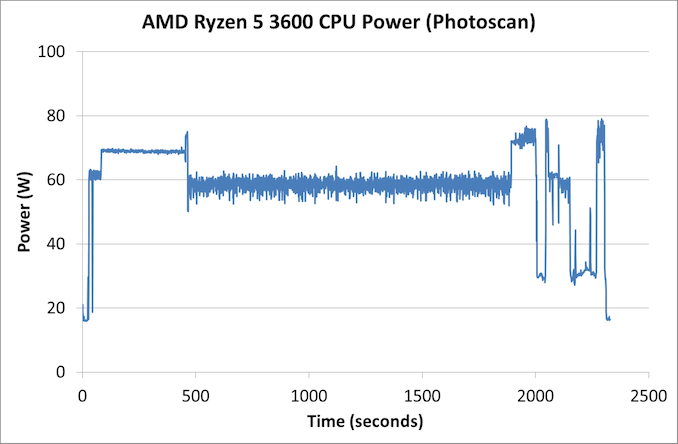

As part of our new test suite, we have a CPU power wrapper across several benchmarks to see the power response for a variety of different workloads.

For an AVX workload, y-Cruncher is somewhat periodic in its power use due to the way the calculation runs, but we see an almost constant 90 W peak power consumption through the whole test. The all-core turbo frequency here was in the 3875-3925 MHz range.

Our 3DPMavx test implements the highest version of AVX it can, for a series of six 10 second on, 10 second off tests, which then repeats. In this case we don’t see the processor going above 75 W in the whole process.

Photoscan is our more ‘regular’ test here, comprising four stages, each changing between single thread, multithread, and variable thread. We see peaks here up to 80 W, but the big variable threaded scenario bounces more around the 60 W mark for over 1000 seconds.
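For readers curious what a power wrapper of this kind might look like, here is a minimal Python sketch. It is not our actual tooling. It assumes a callable, here named `read_energy_j`, that returns a cumulative package energy counter in joules. That callable is hypothetical and would need to be backed by whatever energy interface the platform exposes. Power is derived as the change in energy divided by the change in time between samples.

```python
import threading
import time
from typing import Callable, List, Tuple


def run_with_power_trace(
    workload: Callable[[], None],
    read_energy_j: Callable[[], float],  # hypothetical cumulative energy counter
    interval_s: float = 0.1,
) -> List[Tuple[float, float]]:
    """Run workload() while sampling average power in a background thread.

    Returns a list of (timestamp_s, watts) samples.
    """
    samples: List[Tuple[float, float]] = []
    stop = threading.Event()

    def sampler() -> None:
        last_t, last_e = time.perf_counter(), read_energy_j()
        # Event.wait() returns False on timeout, so this loops once per interval
        while not stop.wait(interval_s):
            now_t, now_e = time.perf_counter(), read_energy_j()
            samples.append((now_t, (now_e - last_e) / (now_t - last_t)))
            last_t, last_e = now_t, now_e

    thread = threading.Thread(target=sampler, daemon=True)
    thread.start()
    try:
        workload()
    finally:
        stop.set()
        thread.join()
    return samples


if __name__ == "__main__":
    # Simulated counter standing in for real hardware: a constant 65 W draw
    fake_counter = lambda: 65.0 * time.perf_counter()
    trace = run_with_power_trace(lambda: time.sleep(0.5), fake_counter)
    print(f"{len(trace)} samples, peak {max(w for _, w in trace):.1f} W")
```

Injecting the counter as a parameter keeps the sampling logic independent of any one platform's energy interface, and allows it to be exercised with a simulated source as in the demo.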

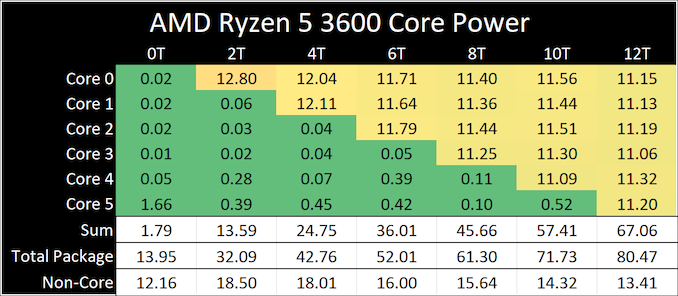

On the per-core power side, using our ray tracing power load, we see a small range of peak power values.

When one thread is active, that core sits at 12.8 W, but as we ramp up the cores, this drops to 11.2 W per core. The non-core part of the processor, such as the IO chip, the DRAM channels and the PCIe lanes, still consumes around 12-18 W even at idle.
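These figures can be combined into a back-of-the-envelope package estimate. The sketch below assumes a simple additive model (all six cores at the loaded per-core figure, plus the non-core range quoted above), which is our simplification rather than a measured breakdown. Non-core power under load may differ from the idle range.

```python
CORES = 6                      # Ryzen 5 3600 core count
SINGLE_CORE_W = 12.8           # one active thread, from the text
LOADED_PER_CORE_W = 11.2       # per core with all cores loaded, from the text
UNCORE_RANGE_W = (12.0, 18.0)  # non-core power range, from the text
PPT_W = 88.0                   # package limit quoted in the Power section


def package_estimate(active_cores: int, per_core_w: float, uncore_w: float) -> float:
    """Additive estimate: cores plus non-core power (a simplification)."""
    return active_cores * per_core_w + uncore_w


low = package_estimate(CORES, LOADED_PER_CORE_W, UNCORE_RANGE_W[0])
high = package_estimate(CORES, LOADED_PER_CORE_W, UNCORE_RANGE_W[1])

print(f"All-core estimate: {low:.1f} W to {high:.1f} W (PPT limit {PPT_W:.0f} W)")
print(f"Per-core drop under full load: {SINGLE_CORE_W - LOADED_PER_CORE_W:.1f} W")
```

Under this model the estimate stays below the 88 W PPT value.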

Latency

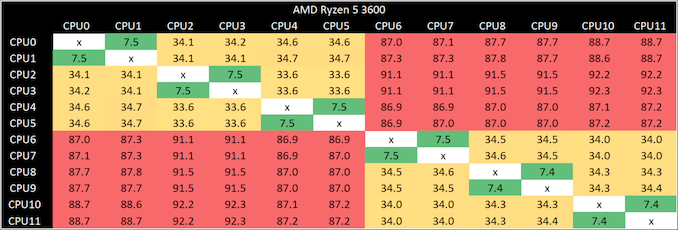

Our latency test is a simple core-to-core ping test, to detect any irregularities in the core design.

The results here are as expected.

- 7.5 nanoseconds for threads within a core

- 34 nanoseconds for cores within a CCX

- 87-91 nanoseconds between cores in different CCXes
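The three tiers above follow from the chip's layout: six cores with two threads each, arranged as two CCXes of three cores. As an illustrative sketch, the Python below classifies any pair of logical CPUs into one of those tiers. The numbering scheme (SMT siblings adjacent, cores 0-2 in the first CCX) is an assumption for the example. Operating systems enumerate threads differently, so check the real topology before relying on it.

```python
from itertools import combinations

THREADS_PER_CORE = 2   # SMT on the Ryzen 5 3600
CORES_PER_CCX = 3      # 3+3 core layout
LOGICAL_CPUS = 12

# Measured latency ranges in nanoseconds, taken from the list above
LATENCY_NS = {
    "same core": (7.5, 7.5),
    "same CCX": (34.0, 34.0),
    "cross CCX": (87.0, 91.0),
}


def classify(cpu_a: int, cpu_b: int) -> str:
    """Return the latency tier for a pair of logical CPUs.

    Assumes logical CPU n belongs to core n // 2 and core c to CCX c // 3.
    """
    if cpu_a == cpu_b:
        raise ValueError("need two distinct logical CPUs")
    core_a, core_b = cpu_a // THREADS_PER_CORE, cpu_b // THREADS_PER_CORE
    if core_a == core_b:
        return "same core"
    if core_a // CORES_PER_CCX == core_b // CORES_PER_CCX:
        return "same CCX"
    return "cross CCX"


counts = {tier: 0 for tier in LATENCY_NS}
for a, b in combinations(range(LOGICAL_CPUS), 2):
    counts[classify(a, b)] += 1

for tier, (lo_ns, hi_ns) in LATENCY_NS.items():
    print(f"{tier}: {counts[tier]} pairs, {lo_ns}-{hi_ns} ns")
```

Counting the pairs shows why thread placement matters: under this layout, more than half of all possible thread pairs sit in the slowest cross-CCX tier.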

114 Comments

vortmax2 - Monday, May 18, 2020

Anyone know why the 3300X is at the top of the Digicortex 1.20 bench?

gouthamravee - Monday, May 18, 2020

I'm guessing here, but the 3300X has all its cores on a single CCX and if Digicortex is one of those benches that's highly dependent on latency that could explain why the 3300X is at the top of the list here.

I checked the previous 3300x article and it seems to be the same story there.

wolfesteinabhi - Monday, May 18, 2020

Thanks for a great Article Ian and AT.

the main problem with mid/lower range CPU (review) like this Ryzen 3600/X and even i5/i3's is that their reviews are almost always focused on "Gaming" (for some reason everything budget oriented is just gaming) ... no one talks about AI workloads or MATLABs, Tensorflows,etc many people and developers dont want to shell out monies for 2080Ti and Ryzen 9 3950X or even TR's .... they have to make do with lower end or say "reasonable" CPU's ... and products like these Ryzen 5 that makes sensible choice in this segment ... a developer/learner on budget.

a lot of people would appreciate if there are some more pages dedicated to such development workflows (AI,Tensor,compile, etc) even for such mid range CPU's.

DanNeely - Monday, May 18, 2020

Ian periodically tweets requests for scriptable benchmarks for those categories and for anyone with connections at commercial vendors in those spaces who can provide evaluation licenses for commercial products. He's gotten minimal uptake on the former and doesn't have time to learn enough about $industry to create a reasonable benchmark from scratch using their FOSS tools. On the commercial side, the various engineering software companies don't care about reviews from sites like this one and their PR contacts can't/won't give out licenses.

webdoctors - Monday, May 18, 2020

Because office tasks don't require any computation, and gaming is what's most mainstream that actually requires computation.

Scientific stuff like MATLAB, Folding@Home needs computation but if that's useful you'd just buy the higher end parts. Price diff between 3600x and 3700x (6 vs 8core) is $100, $200 vs $300 at retail prices. For someone working, $100 is nothing for improving your commercial or academic output. These are parts you use for 5+ years.

I agree a TR doesnt make sense if you can get the consumer version like a 3800x much cheaper.

Impetuous - Monday, May 18, 2020

Logged in to second this. I think a lot of students and professionals like me who do research on-the-side (and are on pretty tight Grants/allowances) would appreciate a MATLAB benchmark. This looks like a great option for a grad student workstation!

brucethemoose - Monday, May 18, 2020

I think one MKL TF benchmark is enough, as you'd have to be crazy to buy a 3600 over a cheap GPU for AI training. If money is that tight, you're probably not buying a new system and/or using Google Colab.

+1 for more compilation benchmarking. I'd like a Python benchmark too, if theres any demand for such a thing.

PeachNCream - Monday, May 18, 2020

A lot of people don't have money to throw away at hardware, moreso now than ever before so we are going to make older equipment work for longer or buy less compute at a lower price. It's important to get hardware out of its comfort zone because these general purpose processors will be used in all sorts of ways beyond a narrow set of games and unzipping a huge archive file. After all, if you want to play games, buying as much GPU as you can afford and then feeding it enough power solves the problem for the most part. That answer has been the case for years so we really don't need more text and time spent on telling us that. Say it once for each new generation and then get to reviewing hardware more relevant to how people actually use their computers.

jabber - Tuesday, May 19, 2020

Plus most of us don't upgrade hardware as much as we used to. back in the day (single core days) I was upgrading my CPU every 6-8 months. Each upgrade pushed the graphics from 28FPS to 32FPS to 36FPS which made a difference. Now with modest setups pushing past 60FPS...why bother. I upgrade my CPU every 6 years or so now.

wolfesteinabhi - Tuesday, May 19, 2020

as i said in one of the replies below... maybe TF is not a good example ..but its not like it will be purely on a CPU for TF work, but some benchmark around it ...and similar other work/development related tasks.

Most of us have to depend on these gaming only benchmarks to guesstimate how good/bad a cpu will be for dev work. maybe a fewer core cpu might have been better with extra cache and extra clocks or vice versa ... but almost no reviews tell that kind of story for mid/low range CPU's.... having said that..i dont expect that kind of analysis from dual cores and such CPU ..but higherup there are a lot of CPU that can be made to do a lot of good job even beyond gaming (even if it needs to pair up with some GPU)