Gigabyte Dual GPU: nForce4, Intel, and the 3D1 Single Card SLI Tested

by Derek Wilson on January 6, 2005 4:12 PM EST - Posted in GPUs

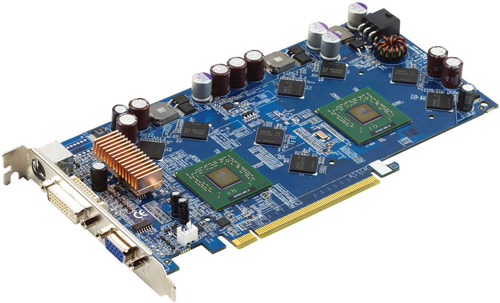

The Hardware

First up on the chopping block is the Gigabyte 3D1. Gigabyte is touting this board as a 256-bit, 256MB card, but that is only half the story. Each of the 6600GT GPUs has access to only half of the bandwidth and RAM on the card. The 3D1 is obviously a 256MB card, since it physically has 256MB of RAM on board. But, due to the nature of SLI, two separate 128-bit/128MB buses do not translate to the same power as a 256-bit/256MB setup does on a single GPU card. The reason is that a lot of duplicate data needs to be stored in the local memory of each GPU. Also, when AA/AF are applied to the scene, we see cases where both GPUs end up becoming memory bandwidth limited. Honestly, it would be much more efficient (and more costly) to design a shared memory system from which both GPUs could draw, if NVIDIA knew someone was going to drop the chips on one PCB. Current NVIDIA SLI technology really is designed to connect graphics cards together at the board level rather than at the chip level, and that inherently makes the 3D1 design a bit of a kludge.
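To make the duplication point concrete, here is a rough back-of-the-envelope sketch. It is a simplified model of our own, not a description of how NVIDIA's driver actually allocates memory: the function name `unique_capacity_mb` and the idea of a single "duplicated fraction" are assumptions made purely for illustration.

```python
def unique_capacity_mb(per_gpu_mb: float, duplicated_fraction: float, gpus: int = 2) -> float:
    """Estimate how much unique data fits across all GPUs.

    Hypothetical model: `duplicated_fraction` of each GPU's local memory
    holds data mirrored on every GPU (counted once); the remainder holds
    data private to that GPU.
    """
    if not 0.0 <= duplicated_fraction <= 1.0:
        raise ValueError("duplicated_fraction must be between 0 and 1")
    shared = per_gpu_mb * duplicated_fraction           # same bytes on every GPU
    private = per_gpu_mb * (1.0 - duplicated_fraction)  # distinct bytes per GPU
    return shared + gpus * private


if __name__ == "__main__":
    # 128MB per GPU, as on the 3D1; the fractions are illustrative only.
    for fraction in (0.0, 0.75, 1.0):
        print(fraction, unique_capacity_mb(128, fraction))
```

Under this model, a card with everything mirrored behaves like a 128MB card, and only a workload with nothing mirrored would see the full 256MB.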

Of course, without the innovators, we would never see great products. Hopefully, Gigabyte will inspire NVIDIA to take single-board multi-chip designs into account when building future multi-GPU options into silicon. Even if we do see a "good" shared memory design at some point, the complexity added would be orders of magnitude beyond what this generation of SLI offers. We would certainly not expect to see anything more than a simple massage of the current feature set in NV5x.

The 3D1 does ship with a default RAM overclock of 60MHz (120MHz effective), which will end up boosting memory intensive performance a bit. Technically, since this card just places two 6600GT cards physically on a single board and permanently links their SLI interfaces, there should be no other performance advantages over other 6600GT SLI configurations.
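For a sense of scale, the following sketch estimates what that overclock is worth in peak memory bandwidth per GPU. The 120MHz effective bump and the 128-bit bus come from the text above; the 1000MHz effective stock memory clock is our assumption for a standard PCIe 6600GT and is not stated in this article.

```python
def bandwidth_gb_s(bus_bits: int, effective_mhz: float) -> float:
    """Peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_bits / 8
    return bytes_per_transfer * effective_mhz / 1000


BUS_BITS = 128                    # per-GPU bus width, from the article
STOCK_EFFECTIVE_MHZ = 1000.0      # ASSUMPTION: stock PCIe 6600GT memory clock
OVERCLOCK_EFFECTIVE_MHZ = 120.0   # from the article (60MHz actual)

stock = bandwidth_gb_s(BUS_BITS, STOCK_EFFECTIVE_MHZ)
boosted = bandwidth_gb_s(BUS_BITS, STOCK_EFFECTIVE_MHZ + OVERCLOCK_EFFECTIVE_MHZ)
gain_percent = (boosted / stock - 1) * 100

if __name__ == "__main__":
    print(f"stock:   {stock:.2f} GB/s per GPU")
    print(f"boosted: {boosted:.2f} GB/s per GPU")
    print(f"gain:    {gain_percent:.1f}%")
```

If the stock clock assumption holds, the gain is a theoretical ceiling; real-world improvement depends on how memory bound a given test is.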

One thing that the 3D1 loses over other SLI solutions is the ability to turn off SLI and run two cards with more than two monitors on the output. Personally, I really enjoy running three desktops and working on the center one. It just exudes a sense of productivity that far exceeds single and dual monitor configurations.

The only motherboard that can run the 3D1 is the GA-K8NXP-SLI. These products will be shipping together as a bundle within the month, and will cost about as much as buying a motherboard and two 6600GT cards. As usual, Gigabyte has managed to pack just about everything but the kitchen sink into this board. The two physical x16 PCIe connectors are wired up with x16 and x8 electrical connections. It's an overclocker-friendly setup (though, overclocking is beyond the scope of this article), and easy to set up and get running. We will have a full review of the board coming along soon.

As it pertains to the 3D1, when connected to the GA-K8NXP-SLI, the x16 PCIe slot is broken into two x8 connections, one dedicated to each GPU. This requires that the motherboard's SLI card be flipped to the single setting rather than SLI.

On the Intel side, Gigabyte is hoping that NVIDIA will decide to release full-featured multi-GPU drivers that don't require SLI motherboard support. Their GA-8AENXP Dual Graphic is a very well done 925XE board that parallels their AMD solution. On this board, Gigabyte went with x16 and x4 PCI Express graphics connections. SLI performance is, unfortunately, not where we would expect it to be. It's hard to tell exactly where these limitations are coming from, given that drivers for the Intel platform lag behind those for AMD. One interesting thing to note is that whenever we had more than one graphics card plugged into the board, the card in the x4 PCIe slot (the bottom PCI Express slot on the motherboard) took the master role. There was no BIOS option to select which PCI Express slot to boot first, as there was on the AMD board. Hopefully, this will be updated in a BIOS revision. We don't think that this explains the SLI performance (as we've seen other Intel boards perform at less than optimal levels), but having the SLI master in an x4 PCIe slot probably isn't going to help.
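To put the slot widths on both boards in perspective, here is a small sketch of peak link bandwidth per direction. It assumes first-generation PCI Express signaling at 250 MB/s per lane per direction, which this article does not state explicitly; the dictionary labels are our own shorthand.

```python
PCIE1_MB_S_PER_LANE = 250  # ASSUMPTION: PCIe 1.x, 2.5 GT/s with 8b/10b encoding


def link_bandwidth_gb_s(lanes: int) -> float:
    """Peak one-direction bandwidth of a PCIe 1.x link in GB/s."""
    return lanes * PCIE1_MB_S_PER_LANE / 1000


# Link widths mentioned in the article; labels are illustrative only.
links = {
    "full x16 slot": 16,
    "x8 link (each 3D1 GPU on the GA-K8NXP-SLI)": 8,
    "x4 slot (GA-8AENXP Dual Graphic)": 4,
}

if __name__ == "__main__":
    for label, lanes in links.items():
        print(f"{label}: {link_bandwidth_gb_s(lanes):.1f} GB/s")
```

By this estimate, a card in the x4 slot has a quarter of the host bandwidth of a card in a full x16 slot, which is consistent with our suspicion that an x4 SLI master is not helping matters.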

The revision on the GA-8AENXP Dual Graphic that we have is 0.1, so there is definitely some room for improvement.

But let's take a look at the numbers and see what the tests have to say.

43 Comments

beany323 - Tuesday, January 11, 2005 - link

Wow, I have been planning on getting a new computer (from Cyberpower) and was like, ok get the PCIe...then i see the SLI....so i read up on it..now i am confused! I bought a hp 2 years ago, and now i would be lucky if i could use it to play WoW (i tried already, to old a video card, anyways) i am willing to spend some money but dont want to get stuck with a big paperweight. I thought the idea with 2 cards sounded good (i was even thinking might as well get 2 ultra's :) ) but not sure now... anymore thoughts? Just from reading this thread, you guys know WAY more then i could ever sit down and read...so thanks in advance!!

beany323

endrebjorsvik - Tuesday, January 11, 2005 - link

Would it be possible to use two 3D1-cards in non-SLi-mode and still use all the GPU's? Then you would be alble to get serious performance in triple-/quad-monitor-systems.

sxr7171 - Sunday, January 9, 2005 - link

I thought the whole point of SLI was to offer an upgrade path that allowed consumers to stagger their spending by upgrading their performance in two stages. Buying a single card with two GPUs that costs the same and performs worse than a single 6800 ultra is quite pointless.

There are absolutely no situations where a dual 6600GT card outperforms a single 6800 ultra card. There are no synergies in having two GPUs on the same card and no incentive to buy such a card.

PrinceGaz - Saturday, January 8, 2005 - link

Just in cast that sounded abit harsh, all I will say is would you perosnally swap a 6800GT based card for that 3D1 multi-core card?

PrinceGaz - Saturday, January 8, 2005 - link

I'm sorry but I still see no sensible reason whatsoever to buy a dual-core 6600GT card when a 6800GT could be bought for a similar price. I can see lots of stupid reasons for buying the dual-core 6600GT, but nothing whatsoever people have said in the comments or in the review give a good reason for choosing it in preference to a 6800GT. I'm afraid I'm going to have to jump on a bit of a bandwagon and wonder if everything reviewed here is always considered good generally, as I can't remember the last time something got a well deserved slating. Which this 3D1 should have been given because of the hardware compatibility issues, and also software compatibility issues with games that aren't SLI recognised, or the fact it will work at half speed (one core) with games nVidia hasn't bothered looking at.

When you review stuff you see good and bad, but all we ever read about here are products which are great, or products which will be very good after they fix this and that. The only review recently which had any constructive criticism was that of normal 6600GT's where you looked at the fan-mounting method. Maybe you only get to see the very best products because that is all the manufacturers will send you (which explains why there was no mid-range/budget memory round-up as they don't want to send a 512MB ValueWhatnot stick that will perform worse than everything else).

What I'd have said about the 3D1 after looking at the performance is that they shouldn't have bothered with a 6600GT dual-core, but instead have done an NV41 based dual-core card. You'd be a fool to buy the 3D1 the way it as at the moment.

Review quotes like "Until then, bundling the GA-K8NXP-SLI motherboard and 3D1 is a very good solution for Gigabyte Those who want to upgrade to PCI Express and a multi-GPU solution immediately have a viable option here. They get the motherboard needed to run an SLI system and two GPUs in one package with less hassle." make me wonder if someone paid you to say that.

AtaStrumf - Saturday, January 8, 2005 - link

*loose some performance* - compared to a more powerful but equally priced single chip solution

AtaStrumf - Saturday, January 8, 2005 - link

johnsonx the reason for buying one card with 2 6600 GTs or two separate 6600GTs instead of one 6800 GT may be in the fact that you get very close to 6800 *Ultra* performance, provided you don't use AA or Aniso in newer games. Granted OC-ing 6800GT will do the same + give you AA/Aniso performance od the same level.

I guess the reason to go for SLi is in its ability to provide a cheap upgrade path and not for two GPUs to be put on the same board and save you 0$ + loose some performance. Gigybyte may have missed an important point here. What they should be making is one board 2 x 6800 GTs, since that provides unheard of performance in a single card (something NEW) and/or at least lower the price of 3D1 to make it cheaper than 2 separate cards and of course make it work in non SLi boards. Simply put, give it some tangible extra value over 2 board SLi solution and not the other way around as it is now - only two monitors and such.

MadAd - Saturday, January 8, 2005 - link

Nvidia are looking more and more like 3dfx every day - big boards, sli, late with real releases

Id prefer an AIW X900SLI board tho

glennpratt - Saturday, January 8, 2005 - link

johnsonx - I agree, though I have a theory. If your like me and the 10 other computers in your house/immediate family consist of hand me down parts from a couple primary computers, it would be nice to have two decent video cards when you upgrade next. Still stupid...

johnsonx - Friday, January 7, 2005 - link

What I find puzzling about this whole SLI thing right now (not this dual-GPU card in particular) is all the people buying an ultra-expensive SLI board and dual 6600GT's to build a new system. I understand buying an SLI board and one 6600GT or 6800GT to allow for future upgrade, and I even understand buying SLI and dual 6800GT's for maximum performance (though it sure seems like overkill and overspending to me).

But buying dual 6600GT's on purpose just doesn't make any sense at all. I guess they just want to say they have it? Even though it costs far more and performs the same as a non-SLI board with a 6800GT....

This particular dual-GPU card doesn't make any sense right now either, for all the obvious reasons.

Maybe later....