Does an AMD Chiplet Have a Core Count Limit?

by Dr. Ian Cutress on September 7, 2021 9:00 AM EST

When it was announced that AMD was set to give a presentation at Hot Chips on its newest Zen 3 microarchitecture, I was expecting the usual fare when a company goes through an already announced platform – a series of slides that we had seen before. In the Zen 3 presentation this was largely the case, except for one snippet of information that had not been disclosed before. This bit of info is quite important for considering AMD’s growth strategy.

In order to explain why this information was important, we have to talk about the different ways to connect two elements (like CPU cores, or full CPUs, or even GPUs) together.

Connectivity: Ring, Mesh, Crossbar, All-to-All

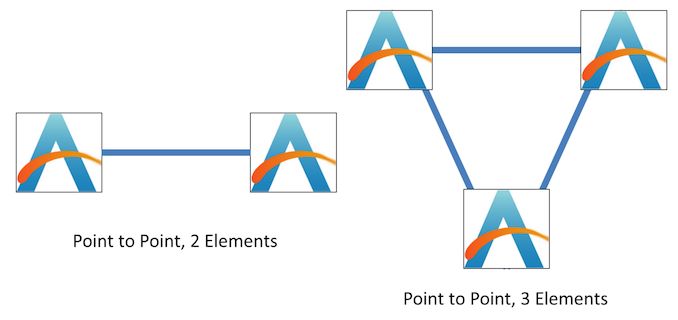

With two processing elements, the easiest way to connect them is by a direct connection. With three elements, similarly, each part can be directly connected to the other.

When we move up to four elements, options become available. The elements can either be similarly arranged in an all-to-all configuration, or into a ring.

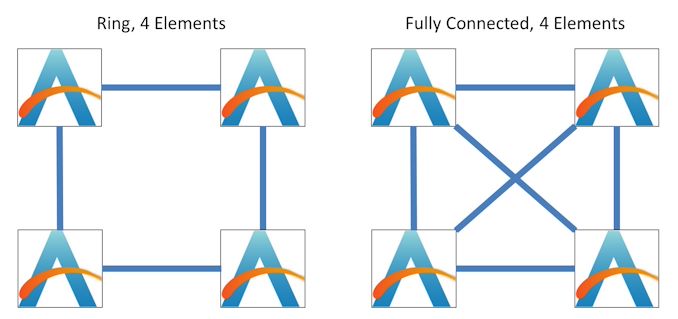

The difference between the two comes down to latency, bandwidth, and power.

In the fully connected situation on the right, every element has a direct connection to every other element, allowing for full connectivity bandwidth and the lowest latency. However, this comes with the tradeoff of power, given that each element has to have three connections. In the ring, each element only has two connections, which fixes the power. However, because the distance to each other element is no longer constant and data has to be passed around the ring, latency and bandwidth can vary depending on what else is being sent around the ring.

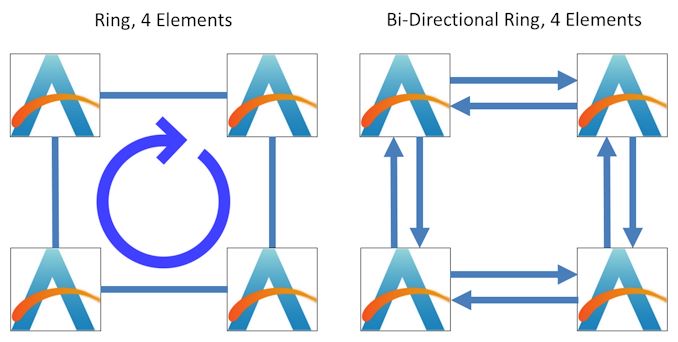

Also with the ring, we have to consider if it can send data in one direction only, or in both directions.

Almost all modern ring designs are bi-directional, allowing for data to flow in either direction. For the rest of this article, we’re assuming all rings are bi-directional. Some of the more modern Intel CPUs have double bi-directional rings, enabling double bandwidth at the expense of double power, but one ring can be ‘turned off’ to save power in non-bandwidth limited scenarios.

The best way to consider the two four-element designs is through the number of connections and average hops to other elements:

- 4-Element Fully Connected: 3 Connections, 1 hop average

- 4-Element Bi-directional Ring: 2 Connections, 1.3 hop average
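The two figures above can be checked with a short script. This is only a sketch under simple assumptions: every link counts as exactly one hop, traffic is spread evenly across all pairs of elements, and the helper names (`average_hops`, `fully_connected`, `ring`) are ours for illustration.

```python
from collections import deque

def average_hops(adjacency):
    """Mean shortest-path length, in hops, over all ordered pairs of distinct nodes."""
    total = 0
    pairs = 0
    for source in adjacency:
        # Breadth-first search gives the hop count from `source` to every node.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for neighbor in adjacency[node]:
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

def fully_connected(n):
    """Every element links directly to every other element."""
    return {i: [j for j in range(n) if j != i] for i in range(n)}

def ring(n):
    """Bi-directional ring: each element links to its two neighbors."""
    return {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}

for name, graph in (("Fully Connected", fully_connected(4)),
                    ("Bi-directional Ring", ring(4))):
    connections = len(graph[0])
    print(f"4-Element {name}: {connections} connections, "
          f"{average_hops(graph):.2f} hop average")
```

Changing the `4` to another element count reproduces the same comparison for larger designs.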

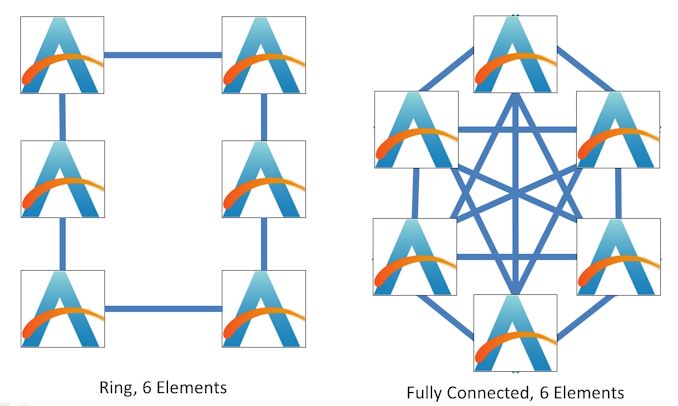

The same thing can occur with six-element configurations:

Here, the balance between bandwidth and power is more extreme. The ring design still relies on two connections per element, whereas a fully connected topology requires five connections per element. The fully connected design however remains at one hop average to access any other element, while the ring is now more complex at 1.8 hops per average access.
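For readers who want the arithmetic behind the ring numbers, here is a short derivation. It assumes a bi-directional ring of $n$ elements in which every link counts as one hop and data always takes the shorter way around; the symbol $\bar{h}_{\text{ring}}$ is our own notation.

$$
\bar{h}_{\text{ring}}(n) \;=\; \frac{1}{n-1}\sum_{d=1}^{n-1}\min(d,\;n-d) \;=\;
\begin{cases}
\dfrac{n^{2}}{4\,(n-1)} & n \text{ even} \\[2ex]
\dfrac{n+1}{4} & n \text{ odd}
\end{cases}
$$

For $n = 4$ this gives $16/12 \approx 1.33$ hops, and for $n = 6$ it gives $36/20 = 1.8$ hops, while the fully connected design stays at exactly one hop at the cost of $n - 1$ connections per element.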

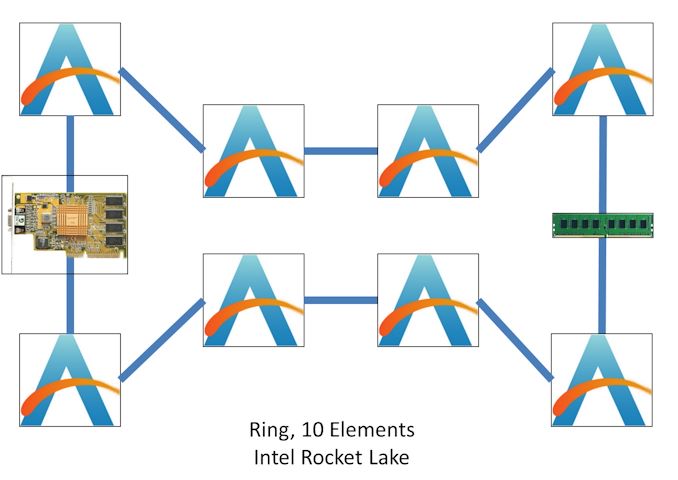

We can expand both somewhat indefinitely, however in modern CPU design, there is a substantial performance tradeoff if an increasing share of your power goes into maintaining those fully connected designs. There’s also one point to note here: we haven’t considered what else might be in the design. For example, modern Intel desktop CPUs, known for having rings, will also place the DRAM controllers, IO, and integrated graphics on the ring, so an 8-core design isn’t merely an 8-element ring:

Here’s a simple mockup including the DRAM and integrated graphics. Truth be told, Intel doesn’t tell us everything about what’s connected to the ring, which means it can be difficult to determine where everything is located, however with synthetic tests we can see the average latency of a ring hop and try and go from there.

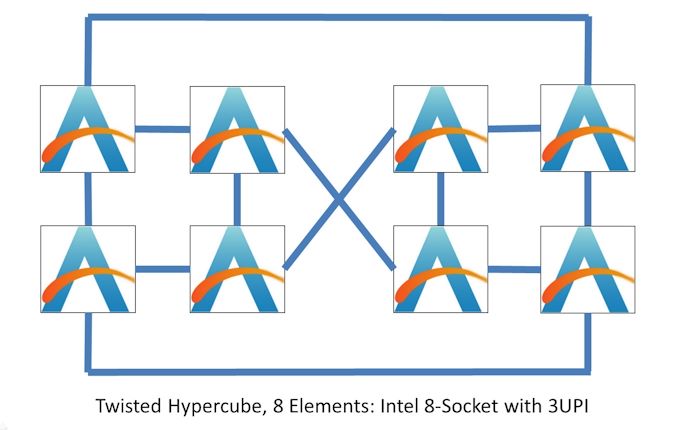

Intel has actually developed a way of connecting 8 elements together in not-a-ring but also not-fully-connected by giving each element the opportunity to have three connections. Again, the idea here is trading off some power for improved latency and bandwidth:

This is akin to taking the eight corners of a cube, creating rings on both sides, then implementing alternate connection strategies on the orthogonal faces. What it means is that each element is directly connected to three other elements, and everything else is two hops away:

- Twisted Hypercube, 8 Elements: 3 Connections, 1.57 average hops

In next-generation Sapphire Rapids, Intel is giving each CPU 4 connections, for 1.43 average hops.
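As a rough illustration of where 1.57 and 1.43 come from, the sketch below compares an ordinary cube with two stand-in graphs. The stand-ins are assumptions on our part, not Intel's actual wiring: `twisted` is an eight-element ring with a link to the opposite element (three connections each), and `four_link` is an eight-element ring where each element also reaches the elements two positions away (four connections each). Both happen to put every other element within two hops.

```python
from collections import deque

def average_hops(adjacency):
    """Mean shortest-path length, in hops, over all ordered pairs of distinct nodes."""
    total = 0
    pairs = 0
    for source in adjacency:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for neighbor in adjacency[node]:
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

# Ordinary cube: elements 0..7, linked when their labels differ in one bit.
cube = {i: [i ^ 1, i ^ 2, i ^ 4] for i in range(8)}

# Hypothetical three-connection stand-in: ring plus a link to the opposite element.
twisted = {i: [(i - 1) % 8, (i + 1) % 8, (i + 4) % 8] for i in range(8)}

# Hypothetical four-connection stand-in: ring plus links two positions away.
four_link = {i: [(i - 1) % 8, (i + 1) % 8, (i - 2) % 8, (i + 2) % 8]
             for i in range(8)}

for name, graph in (("cube", cube), ("twisted", twisted), ("four_link", four_link)):
    print(f"{name}: {len(graph[0])} connections, "
          f"{average_hops(graph):.2f} average hops")
```

The plain cube has one element three hops away from each corner, which is why the twisted arrangement comes out ahead with the same number of connections.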

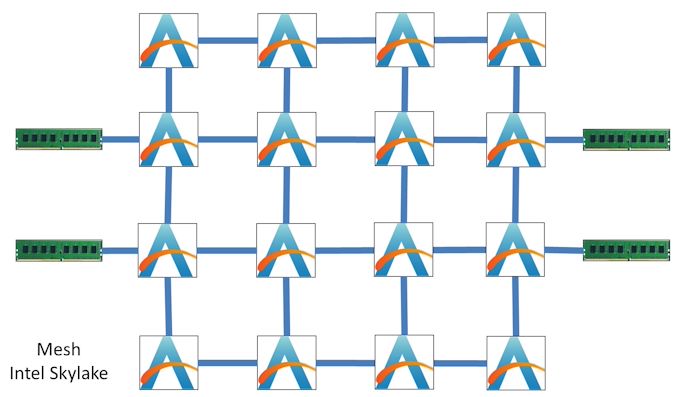

Going above 10 elements in a ring, at least in modern core architectures, seems to be a bit of a problem due to the increased latency. You end up putting increasing stress on the ring as more cores usually means more bandwidth is needed to keep them all fed with data. Intel and other big-core single-chip AI companies have addressed this by implementing a two-dimensional mesh.

The mesh design trades off some additional per-element connections for better latency and connectivity. The average latency still varies, and in the event of data flow-heavy situations, data can take multiple routes to get to where it needs to go.

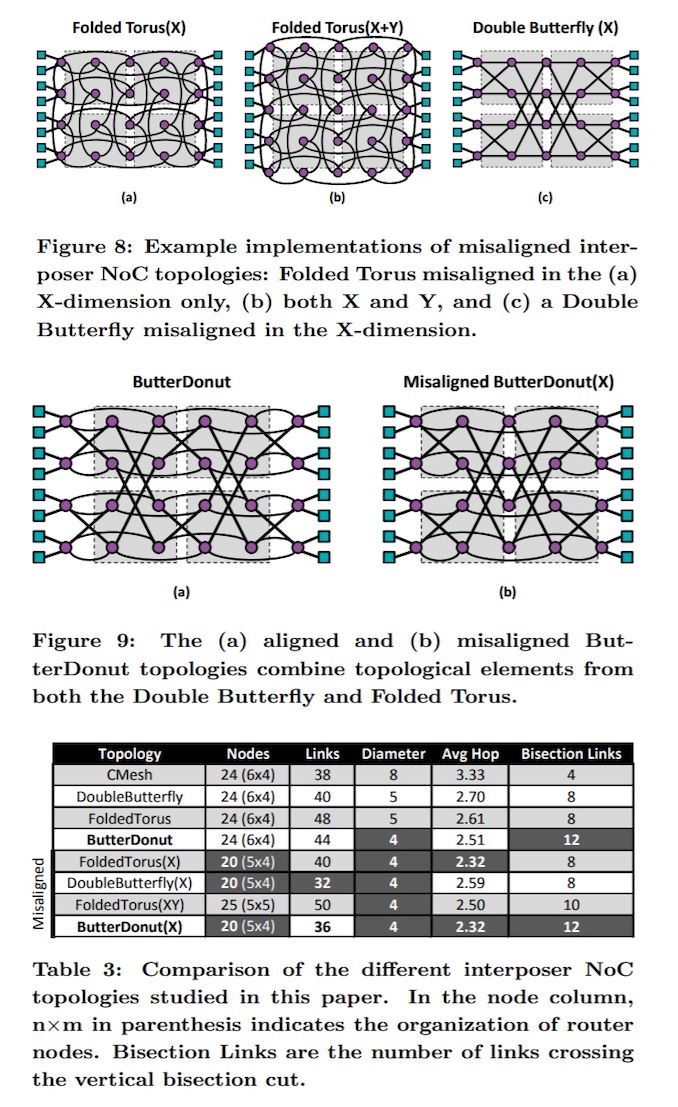

A 2D mesh is the simplest layout – each element next door is an x/y unit away. It revolves around each element being in a plane with no overlap of connectivity. There has been a lot of work done on topologies that take advantage of a little bit of 3D, which is where we might go to when chip-on-chip stacking technology is widely implemented. Here is an example paper of why a ButterDonut might be a good idea if mesh networks were implemented at the interposer level, as it minimizes hop links.
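To put a rough number on the 2D mesh, the sketch below compares a 4x4 mesh with a 16-element bi-directional ring. It assumes a plain mesh with no wrap-around links, so the hop count between two elements is simply their x distance plus their y distance, and it assumes traffic is spread evenly across all pairs. The function names are ours.

```python
from itertools import product

def mesh_average_hops(rows, cols):
    """Average x/y (Manhattan) distance between distinct elements of a mesh."""
    nodes = list(product(range(rows), range(cols)))
    total = 0
    pairs = 0
    for (r1, c1), (r2, c2) in product(nodes, nodes):
        if (r1, c1) != (r2, c2):
            total += abs(r1 - r2) + abs(c1 - c2)
            pairs += 1
    return total / pairs

def ring_average_hops(n):
    """Average hops on a bi-directional ring, always taking the shorter direction."""
    distances = [min(d, n - d) for d in range(1, n)]
    return sum(distances) / len(distances)

print(f"4x4 mesh:        {mesh_average_hops(4, 4):.2f} average hops")
print(f"16-element ring: {ring_average_hops(16):.2f} average hops")
```

In the mesh, corner elements have two connections, edge elements three, and interior elements four, so the lower hop count is paid for with extra links on most elements.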

The other alternative is a Crossbar. The most basic view of a Crossbar is that it implements an effective all-to-all fully-connected topology with only a single connection per element. There are multiple types of crossbar, again depending on bandwidth, latency, and power requirements. A crossbar isn’t magical; what it really does is kick the connectivity problem one step down the road.

At this point of the article, we haven’t spoken about how the elements are physically connected together. Inside a chip that usually means in silicon, however when we’re talking about connecting chips together, that might be through an interposer, or the PCB, which is more limiting in terms of how many high-speed connections it can hold and how many can cross over a given point. Often a physical external crossbar is needed to help simplify on-package connections; for example, NVIDIA allows eight Tesla GPUs to connect in an all-to-all fashion by going through an NVSwitch, which is effectively a crossbar.

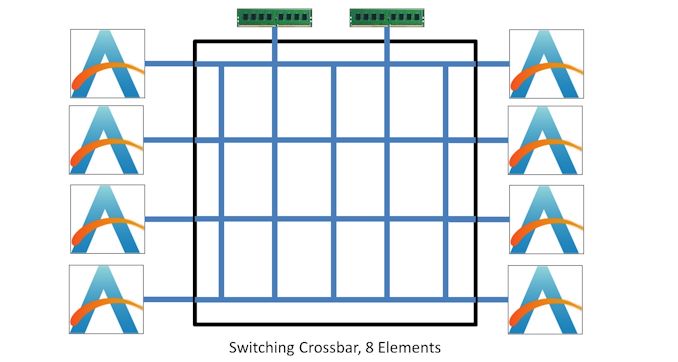

In this instance, here’s a diagram of a Switching Crossbar, which acts as a matrix or an internal mesh that manages where data needs to go.

In these sorts of environments, even though there is ‘only’ one connection to the crossbar compared to other configurations that might have two or three connections per element, consider that bandwidth to the crossbar might be double or triple that of a direct connection. This still means each element has more than one effective connection, and enjoys multiples of bandwidth if needed.
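One way to see how a crossbar kicks the problem down the road is to count wires. The sketch below assumes a textbook single-stage crossbar, in which every input can be switched to every output through its own crosspoint; it is not a description of NVSwitch or of any specific product, and the function name is ours.

```python
def link_counts(n):
    """Wiring needed to let n elements (n >= 3) reach each other."""
    return {
        # Direct all-to-all: one link for every pair of elements.
        "all_to_all_links": n * (n - 1) // 2,
        # Ring: one link between each element and its next neighbor.
        "ring_links": n,
        # Crossbar: one external link per element into the switch...
        "crossbar_links": n,
        # ...but the switching matrix inside needs a crosspoint per input/output pair.
        "crossbar_crosspoints": n * n,
    }

for n in (4, 8, 16):
    print(n, link_counts(n))
```

The external wiring grows linearly with the number of elements, but the complexity has moved inside the switch, where it grows with the square of the element count.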

So Why Is AMD Limited?

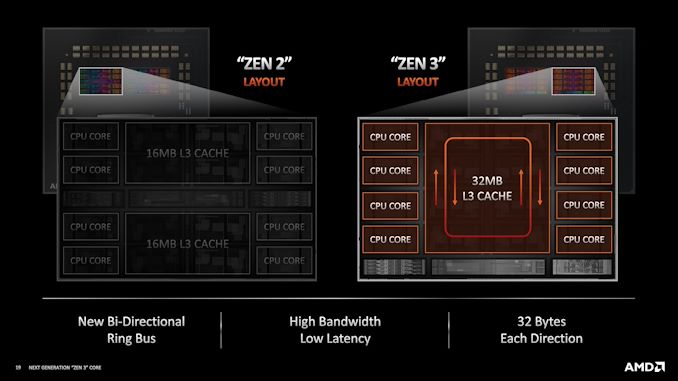

The reason for going through all of these explanations about connectivity is that when AMD moved from Zen 2 to Zen 3, it increased the number of cores inside a CCX (core complex). In Zen 2, a chiplet of eight cores had two four-core CCXes, and each of them connected to the main IO die, but with Zen 3, a single CCX grew to eight cores, and remained eight cores per chiplet.

When it was four cores per CCX, it was very easy to imagine (and test for) a fully-connected four-core topology. It isn’t that much extra to expect each core was connected to the other. Now with eight cores per CCX, since launch, AMD has been extremely coy about telling anyone publicly how those cores are connected together. When asked at launch if the cores in a Zen 3 eight-core CCX were fully connected, AMD’s general attitude was ‘Not quite, but close enough’. This would mean something between a ring and an all-to-all design, but more verging on the latter.

In our testing, we saw a similar CCX latency profile with eight cores as we had seen with four cores. This would essentially confirm AMD’s comments - we didn’t see any indication that AMD was using a ring. However, at Hot Chips, AMD’s Mark Evers (Chief Architect, Zen 3) presented this slide:

It was a bit of a shock to see it stated so clearly, given AMD’s apprehension in previous discussions about the topology. It was also a shock to have something new in this presentation, as pretty much everything else had been presented at previous events. However there are repercussions for this.

Going Beyond 8 Cores Per CCX

As AMD has been slowly increasing core counts on its processors, it has had two ways to do so: more chiplets or more cores per chiplet. With future generations of AMD processors expected to have more cores, the increase has to come from one of these two options. Both are viable; however, it is the more-cores-per-chiplet option that we consider here.

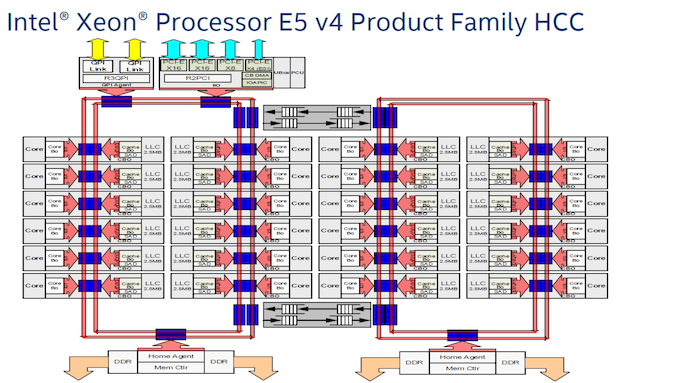

We’ve spoken in this article about how rings trade off power and connections per element for latency and bandwidth, and how there can be an appreciable limit to how many elements or cores can be put into a ring before the ring is the limiting factor. Intel, for example, has processors with 10 cores that use double bandwidth rings, but the largest number of cores it has ever put into a ring is 12, with the Broadwell Server line of processors that ended up using dual 12-core rings. Note that each ring has more than 12 ring stops, because of extra functionality.

Each ring here has 12 ring stops for cores, two for ring-to-ring connectivity, and one for DRAM, and the left ring has two extra stops for chip-to-chip and PCIe. The ring with those extra stops has effectively 17 ring stops / elements attached to it. After this, Intel went to meshes.

Apply this scenario to AMD: if AMD were to grow the number of cores per CCX from eight in Zen 3, the most obvious steps are to either 12 cores or 16 cores. On a ring, neither of these sounds that appetizing.
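To see why, here is a quick sketch of how hop counts grow on a plain bi-directional ring at 8, 12, and 16 stops. It assumes the ring holds only cores (no extra stops for IO or memory), that every link is one hop, and that data always takes the shorter direction; the function name is ours, and hop counts are only a proxy for real latency.

```python
def ring_hops(stops):
    """Return (average hops, worst-case hops) on a bi-directional ring."""
    distances = [min(d, stops - d) for d in range(1, stops)]
    return sum(distances) / len(distances), max(distances)

for cores in (8, 12, 16):
    average, worst = ring_hops(cores)
    print(f"{cores}-core ring: {average:.2f} average hops, {worst} worst case")
```

Under these assumptions the average climbs from roughly 2.3 hops at eight cores to roughly 4.3 hops at sixteen, and the worst case doubles from four hops to eight, all while each core still has only two connections to carry the extra traffic.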

AMD’s alternative to increasing cores on a chiplet is to simply double the number of CCXes. As with Zen 2, which had two lots of four cores, a future product could instead have two lots of eight cores, which would be an easy jump to a sixteen-core chiplet.

It is worth noting that AMD’s next-generation server platform, Genoa, is expected to have more than the 64 cores that AMD’s current generation has. Those 64 cores are eight chiplets of eight cores each, with one eight-core CCX per chiplet. Leaks have suggested that Genoa simply adds more chiplets, however that strategy isn’t infinitely scalable.

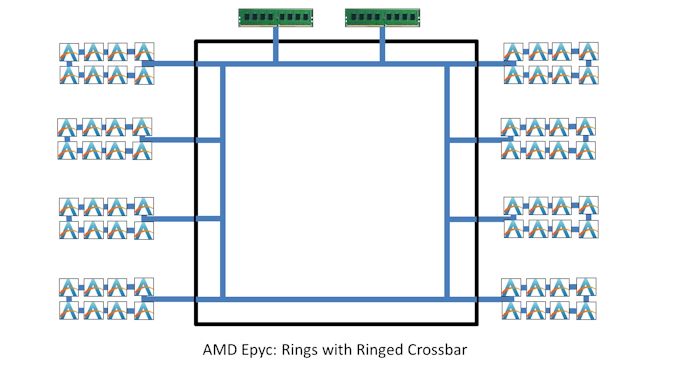

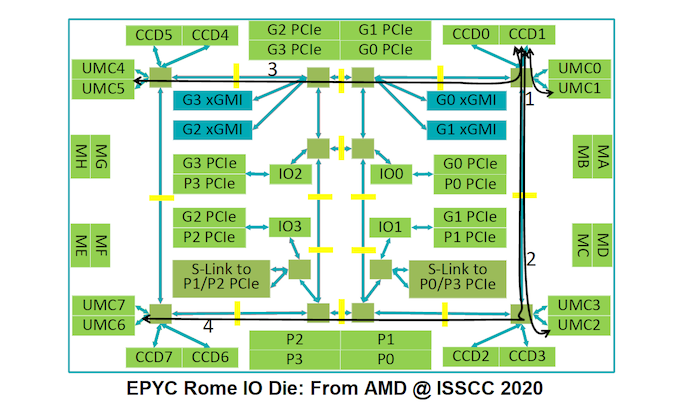

On top of all of this, consider AMD’s IO die in EPYC. It’s effectively a crossbar, right? All the chiplets come together to be connected, however AMD’s IO die is itself a Ring Crossbar design.

What we’ve ended up with from AMD is a ring of rings. In actual fact, the ring is a bit more complex:

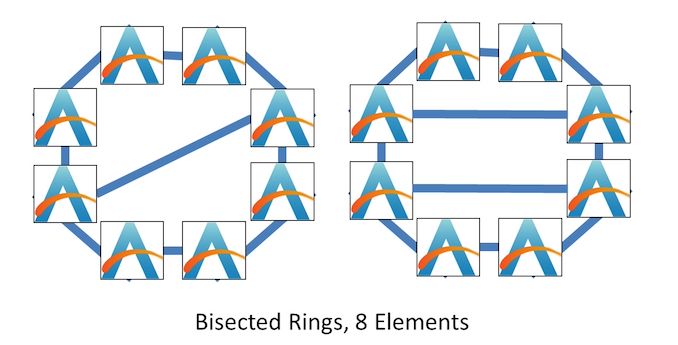

AMD’s IO die is one big outer ring with eight stops on it, and some of the stops have connections across the ring. It could be considered a mesh, or a bisected ring, and it looks something like this:

With a bisected ring, there’s now a non-uniform balance in the number of connections per element and the average latency – some elements have two connections, others have three. However, this is similar to the mesh in the sense that not every element has an identical bandwidth or latency profile. It is also important to note that a bisected ring can have one, two, or more internal connections.
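The non-uniformity is easy to see in a small model. The sketch below takes an eight-stop ring and adds a single cross link between stops 0 and 4. That placement is purely an assumption for illustration, as is the one-hop-per-link cost; it is not AMD's actual IO die wiring, and the helper names are ours.

```python
from collections import deque

def bisected_ring(n, chords):
    """Bi-directional ring of n stops plus extra cross links given as (a, b) pairs."""
    adjacency = {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}
    for a, b in chords:
        adjacency[a].add(b)
        adjacency[b].add(a)
    return adjacency

def node_average(adjacency, source):
    """Average hops from one stop to every other stop (breadth-first search)."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return sum(dist.values()) / (len(dist) - 1)

graph = bisected_ring(8, [(0, 4)])  # hypothetical chord placement
for stop in sorted(graph):
    print(f"stop {stop}: {len(graph[stop])} connections, "
          f"{node_average(graph, stop):.2f} average hops")
```

In this model the two stops on the cross link see the lowest average hop count, their neighbors see a smaller benefit, and the stops furthest from the cross link are no better off than on a plain ring. Passing more pairs in `chords` models a ring with two or more internal connections.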

So is AMD's Zen 3 8-Core CCX Really A Ring?

AMD tells us that its eight-core CCX structure is a bidirectional ring. If that’s the case, then AMD is going to struggle to move beyond eight cores per CCX. It could easily double cores per chiplet by simply doubling the number of CCXes, however beyond that the ring needs to change.

In our testing, our results show that while AMD’s core complex is not an all-to-all connection, it also doesn’t match what we would expect from ring latencies. Simply put, it’s got to be more than a ring. AMD has been very coy on the exact details of its CCX interconnect. Providing a slide saying it’s a ring reinforces the fact that it’s not an all-to-all interconnect, but we’re pretty sure it’s some form of a bisected ring, a detail that AMD has decided to leave out of the presentation.

Final Thoughts: Going Beyond Rings

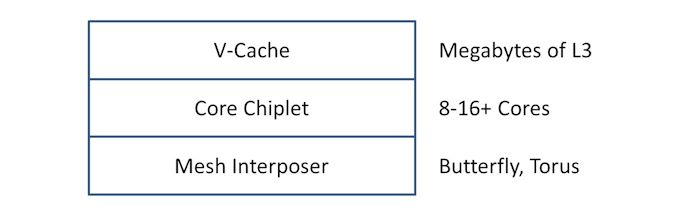

As I have been writing this piece, it has occurred to me what might be in the future for this sort of design. In the x86-world, AMD has pioneered the 2D ‘CPU Chiplet’ without much IO, and AMD is moving forward with its vertical 3D stacking V-Cache technology as announced earlier this year. As part of this article, I spoke about different sorts of mesh interconnect, and the fact that to do something innovative requires an interposer. Well, consider each CPU chiplet with another chiplet below, as an effective single-silicon interposer, solely for core-to-core interconnect.

The interposer could be on a larger process node, e.g. 65nm, very high yielding, and move some of the logic away from the core chiplet, reducing its size or making more room for more innovation. The key here would be the vias required for data and power from the package, but AMD has extensive experience with its GPUs that require interposers.

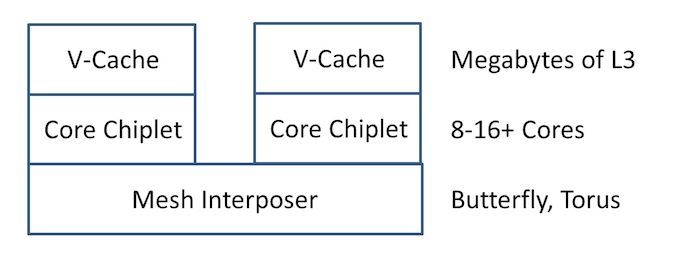

Alternatively, go one stage further – interposers are designed for multiple chiplets. If a 65nm high-yielding interposer is easy enough to make to fit two or three chiplets on, then just put multiple chiplets on there so they can act as one big chiplet with a unified cache between all of them. AMD has also stated that its V-Cache latency only increases with wire length, and so an interposer between two/three/four chiplets on either side of the IO die would not add significant latency to the cache.

The advent of chiplets and tiles means that as semiconductor companies start disaggregating their IP into separate bits of silicon, and packaging technologies get cheaper and higher-yielding, we’re going to see more innovation in areas that are starting to become bottlenecks, such as ring interconnects.

112 Comments


eastcoast_pete - Tuesday, September 7, 2021 - link

So, how three-dimensional do those butter donuts get? Also, how much overhead in both silicon and power usage does managing those many cores in many chiplets add? Since we're using food analogies, where do you think the butter zone for cores per chiplet and chiplet per CPU is, at least currently?

Lastly, here a wild thought: Expand something like Alder Lake's Thread Director to actively manage a 3D meshed chiplet-based CPU, especially if it uses big and little cores. When there still was a SUN, their slogan was "the network is the computer"; looks like that's now very much true also for the CPU.

Kevin G - Tuesday, September 7, 2021 - link

Rocket Lake actually has 12 ring stops: the GPU has two stops and the PCIe IO complex has one on its own. (Also why use an ancient AGP graphics card in the graphic?) The largest ring complex Intel has built was for Comet Lake at 14 stops (10 cores, 2 GPU, 1 IO, 1 memory). The speculative theory is that Intel is limited to a total of 16 stops on the ring. As mentioned, legacy Xeons had begun to add separate ring systems to scale upward in core counts but those two never exceeded 14 hops around. The oddity was Westmere-EX which wasn't fully bi-directional and had some really weird latency characteristics.

I have a quibble about crossbars: the reason why they're generally avoided is that they become incredibly complex as the number of nodes on them increases. Pretty much every generation of CCX would require its own custom sized crossbar as core count increases. It is common practice to over-design a crossbar and then let it fill up as product lines evolve/die shrinks are possible. I do think we'll see AMD using a crossbar but on the IO die where the number of nodes will not radically change over time.

The interposer can indeed hold a complex topology between dies. However, I would argue that it'd be wise to go with a more active interposer using a crossbar instead of a more exotic mesh-like topology. The crossbar would produce heat but it'd be relatively small. Such a crossbar interposer would be a per-product design, which each main passive interposer already is to begin with.

One thing glossed over with AMD's current topologies is how many links between the IO die and the CCD exist? In Rome there were two links between the IO die and each CCD as to match the number of CCX inside the CCD. Presumably with one CCX per CCD in Milan, there would be potential to double the number of CCD/CCX but at the expense of per CCX bandwidth. (This is where the 3D V-Cache comes into play to help counter the bandwidth issue.)

AMD does have another method of increasing the number of cores in a system: increase socket count. It has puzzled me why Rome/Milan do not support a three socket system. Yes it is a bit odd but the bandwidth and links are there to do a solid point-to-point topology. Adding more sockets to a system is also viable for Intel in these large complex servers as they are expected to migrate to optical interconnects using on-package silicon photonics at some point (Intel has demoed proof of concept of this in the past.)

Speaking of the Intel side of things, it boggles my mind that they have been stuck on Sky Lake-SP/Cascade Lake-SP for so long in servers that they simply wouldn't have shattered the design into CPU chiplets + IO chiplets and link them together via EMIB/interposer. Sapphire Rapids isn't even following this as it includes some IO functionality on each die more akin to AMD's Naples design. I fathom we'll see this post Sapphire Rapids but Intel has certainly taken their time and transitioned to a defensive posture.

JayNor - Tuesday, September 7, 2021 - link

Intel's recent patent app 20210263880, "DISAGGREGATED DIE WITH INPUT/OUTPUT (I/O) TILES", surrounds small processor tiles with narrow IO tiles, connected by EMIB, in a pattern that can scale to a larger extent than the single IO tile.

Ian Cutress - Tuesday, September 7, 2021 - link

Note, I can count 17 ring stops on the first Broadwell XCC ring

WaltC - Tuesday, September 7, 2021 - link

I think you are comparing Intel's monolithic traditional designs with AMD's state of the art chiplet designs. You seem to be comparing a single AMD CCX to an entire Intel CPU, and trying really, really hard to see this as some sort of negative for AMD. But the fact is, of course, that AMD is not limited to a single CCX in a given CPU--which seems to me the whole point of a chiplet approach being a net positive over a monolithic design. You are also assuming some sort of equity between Intel's tiles and AMD's chiplets, an equity that has yet to be demonstrated. We know all about chiplets as AMD has been shipping them for years--we know nothing about Alder Lake apart from marketing chatter thus far--not even a shipping date. It's almost like Intel is whispering in your ear "Here is where AMD is limited" which you aren't questioning too closely...;) It remains to be seen what Intel will come up with to attempt to compete, and of course AMD is looking at Zen4 & Zen5, which further muddles any competitive picture from Intel at present.

You know, of course, that writing articles titled with question marks is bad form grammatically and journalistically. The reason it's disdained by real journalists is because it usually winds up with the article failing to answer the question raised in the title--as is true here. If you can answer the question you wish to pose, then a declarative title, such as, "An AMD chiplet has a core-count limit" or "An AMD chiplet has no core-count limit" would be much better. And of course the article would go ahead and present the limit--or present evidence that the core count need not be limited. You are not alone in writing articles with question marks these days, especially when the question posed in the title isn't answered. (So, I'm not picking on you in particular...;))

whatthe123 - Tuesday, September 7, 2021 - link

scaling up through multiple CCXs is quite literally in the article, and it wouldn't make much sense to do after spending all their time designing a unified bus. not to mention their current design is the first in years that handily beats intel's designs in all performance metrics, so why intentionally regress just to get more cores?

Ian Cutress - Tuesday, September 7, 2021 - link

You're clearly too biased to make a coherent comment. Assuming you even read the article from top to bottom. Betteridge's Law doesn't really apply any more; it's a feature of an older era

Spunjji - Friday, September 10, 2021 - link

Really weird to see the number of people leaving comments dedicated to reading this in the worst spirit possible. Your criticism of the headline is especially asinine: your suggestions both involve more bias, and the article answered its posed question entirely to my satisfaction (there are limits, and there are ways for them to address those limits).

boozed - Wednesday, September 8, 2021 - link

Misaligned ButterDonut.

Hixbot - Wednesday, September 8, 2021 - link

Sounds tasty