Intel Discloses Multi-Generation Xeon Scalable Roadmap: New E-Core Only Xeons in 2024

by Dr. Ian Cutress on February 17, 2022 5:30 PM EST

It’s no secret that Intel’s enterprise processor platform has been stretched in recent generations. Compared to the competition, Intel is chasing its multi-die strategy while relying on a manufacturing platform that hasn’t offered the best in the market. That being said, Intel is quoting more shipments of its latest Xeon products in December than AMD shipped in all of 2021, and the company is launching the next generation Sapphire Rapids Xeon Scalable platform later in 2022. What comes after Sapphire Rapids has been kept somewhat under wraps, with minor leaks here and there, but today Intel is lifting the lid on that roadmap.

State of Play Today

Currently in the market is Intel’s Ice Lake 3rd Generation Xeon Scalable platform, built on Intel’s 10nm process node with up to 40 Sunny Cove cores. The die is large, around 660 mm², and in our benchmarks we saw a sizeable generational uplift in performance compared to the 2nd Generation Xeon offering. The response to Ice Lake Xeon has been mixed, given the competition in the market, but Intel has forged ahead by leveraging a more complete platform coupled with FPGAs, memory, storage, networking, and its unique accelerator offerings. Datacenter revenues, depending on the quarter you look at, are either up or down based on how customers are digesting their current processor inventories (as stated by CEO Pat Gelsinger).

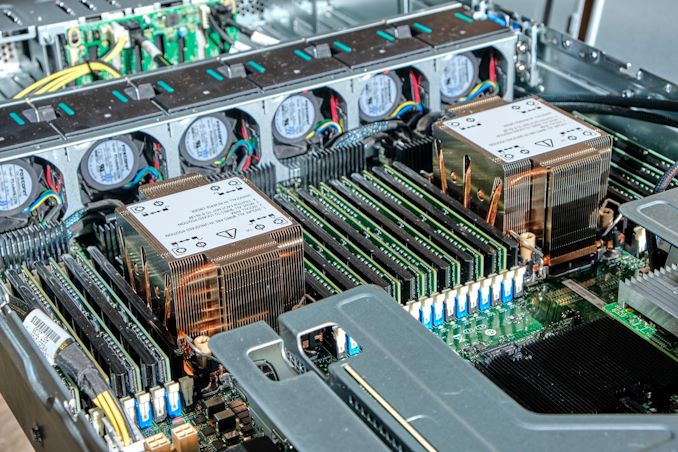

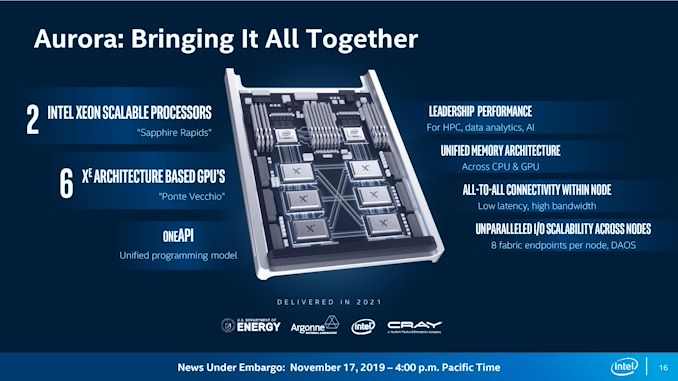

That being said, Intel has put a large amount of effort into discussing its 4th Generation Xeon Scalable platform, Sapphire Rapids. For example, we already know that it will be using more than 1600 mm² of silicon for the highest core count solutions, with four tiles connected with Intel’s embedded bridge technology. The chip will have eight 64-bit memory channels of DDR5, support for PCIe 5.0, as well as most of the CXL 1.1 specification. New matrix extensions also come into play, along with data streaming accelerators and QuickAssist Technology, all built on the latest P-core designs currently present in the Alder Lake desktop platform, albeit optimized for datacenter use (which typically means AVX-512 support and bigger caches). We already know that versions of Sapphire Rapids will be available with HBM memory, and the first customer for those chips will be the Aurora supercomputer at Argonne National Laboratory, coupled with the new Ponte Vecchio high-performance compute accelerator.

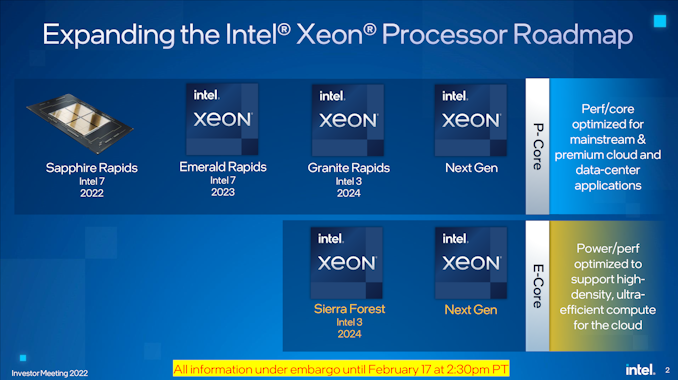

The launch of Sapphire Rapids is significantly later than originally envisioned several years ago, but we expect to see the hardware widely available during 2022, built on Intel 7 process node technology.

Next Generation Xeon Scalable

Looking beyond Sapphire Rapids, Intel is finally putting materials into the public to showcase what is coming up on the roadmap. After Sapphire Rapids, we will have a platform compatible Emerald Rapids Xeon Scalable product, also built on Intel 7, in 2023. Given the naming conventions, Emerald Rapids is likely to be the 5th Generation.

Emerald Rapids (EMR), as with some other platform updates, is expected to capture the low hanging fruit from the Sapphire Rapids design to improve performance, and to benefit from manufacturing updates. Platform compatibility means Emerald will have the same support when it comes to PCIe lanes, CPU-to-CPU connectivity, DRAM, CXL, and other IO features. We’re likely to see updated accelerators too. Exactly what the silicon will look like, however, is still an unknown. As it is still early days for Intel’s tiled product portfolio, there’s a good chance it will be similar to Sapphire Rapids, but it could equally be something new, such as what Intel has planned for the generation after.

After Emerald Rapids is where Intel’s roadmap takes on a new highway. We’re going to see a diversification in Intel’s strategy on a number of levels.

Starting at the top is Granite Rapids (GNR), built entirely of Intel’s performance cores, on an Intel 3 process node for launch in 2024. Previously Granite Rapids had been on roadmaps as an Intel 4 node product; however, Intel has stated to us that the progression of the technology, as well as the timeline of where it will come into play, makes it better to put Granite on that Intel 3 node. Intel 3 is meant to be Intel’s second-generation EUV node after Intel 4, and we expect the design rules to be very similar between the two, so we suspect it’s not much of a jump from one to the other.

Granite Rapids will be a tiled architecture, just as before, but it will also feature a bifurcated strategy in its tiles: it will have separate IO tiles and separate core tiles, rather than a unified design like Sapphire Rapids. Intel hasn’t disclosed how they will be connected, but the idea here is that the IO tile(s) can contain all the memory channels, PCIe lanes, and other functionality while the core tiles can be focused purely on performance. Yes, it sounds like what Intel’s competition is doing today, but ultimately it’s the right thing to do.

Granite Rapids will share a platform with Intel’s new product line, which starts with Sierra Forest (SRF), also on Intel 3. This new product line will be built from datacenter optimized E-cores, which we’re familiar with from Intel’s current Alder Lake consumer portfolio. The E-cores in Sierra Forest will be a later generation than the Gracemont E-cores we have today, but the idea here is to provide a product that focuses more on core density rather than outright core performance. This allows them to run at lower voltages and parallelize, assuming the memory bandwidth and interconnect can keep up.

Sierra Forest will be using the same IO die as Granite Rapids. The two will share a platform – we assume in this instance this means they will be socket compatible – so we expect to see the same DDR and PCIe configurations for both. If Intel’s numbering scheme continues, GNR and SRF will be Xeon Scalable 6th Generation products. Intel stated to us in our briefing that the product portfolio currently offered by Ice Lake Xeon products will be covered and extended by a mix of GNR and SRF Xeons based on customer requirements. Both GNR and SRF are expected to have full global availability when launched.

The density-focused E-core Sierra Forest will end up being compared to AMD’s equivalent, the Zen 4c-based Bergamo, although AMD might have a Zen 5 equivalent by the time SRF comes to market.

I asked Intel whether the move to GNR+SRF on one unified platform means the generation after will be a unique platform, or whether it will retain the two-generation retention that customers like. I was told that it would be ideal to maintain platform compatibility across the generations, although as these are planned out, it depends on timing and where new technologies need to be integrated. The earliest industry estimates (beyond CPU) for PCIe 6.0 are in the 2026 timeframe, and DDR6 is more like 2029, so unless there are more memory channels to add it’s likely we’re going to see parity between 6th and 7th Gen Xeon.

My other question to Intel was about Hybrid CPU designs – if Intel was now going to make P-core tiles and E-core tiles, what’s stopping a combined product with both? Intel stated that their customers prefer uni-core designs in this market as the needs from customer to customer differ. If one customer prefers an 80/20 split on P-cores to E-cores, there’s another customer that prefers a 20/80 split. Having a wide array of products for each different ratio doesn’t make sense, and customers already investigating this are finding out that the software works better with a homogeneous arrangement, with any split made at the system level rather than the socket level. So we’re not likely to see hybrid Xeons any time soon. (Ian: Which is a good thing.)

I did ask about the unified IO die: giving P-core-only and E-core-only Xeons the same number of memory channels and I/O lanes might not be optimal for either scenario. Intel didn’t really have a good answer here, aside from the fact that building them both into the same platform helped customers synergize non-returnable development costs across both CPUs, regardless of the one they used. I didn’t ask at the time, but we could see the door open to more Xeon-D-like scenarios with different IO configurations for smaller deployments, but we’re talking products that are 2-3+ years away at this point.

Xeon Scalable Generations

| Date | Generation | Codename | Abbr. | Max Cores | Node | Socket |
|---|---|---|---|---|---|---|
| Q3 2017 | 1st | Skylake | SKL | 28 | 14nm | LGA 3647 |
| Q2 2019 | 2nd | Cascade Lake | CXL | 28 | 14nm | LGA 3647 |
| Q2 2020 | 3rd | Cooper Lake | CPL | 28 | 14nm | LGA 4189 |
| Q2 2021 | 3rd | Ice Lake | ICL | 40 | 10nm | LGA 4189 |
| 2022 | 4th | Sapphire Rapids | SPR | \* | Intel 7 | LGA 4677 |
| 2023 | 5th | Emerald Rapids | EMR | ? | Intel 7 | \*\* |
| 2024 | 6th | Granite Rapids | GNR | ? | Intel 3 | ? |
| 2024 | 6th | Sierra Forest | SRF | ? | Intel 3 | ? |
| >2024 | 7th | Next-Gen P | ? | ? | ? | ? |
| >2024 | 7th | Next-Gen E | ? | ? | ? | ? |

\* Estimate is 56 cores. \*\* Estimate is LGA4677.

For both Granite Rapids and Sierra Forest, Intel is already working with key ‘definition customers’ for microarchitecture and platform development, testing, and deployment. More details to come, especially as we move through Sapphire and Emerald Rapids during this year and next.

144 Comments


Kangal - Friday, February 18, 2022 - link

Hybrid Processing doesn't make much/any sense for Servers, Desktops, and even Office PCs; all devices that are hooked to the wall.

It does make sense for portable computing. Where you're trying to find a balance between performance and energy usage.

Where we have good benefit for large and thick laptops, we instead see massive benefits on small and thin phones.

So hindsight 20/20, but Intel should have started working on it back in 2013 or so when it saw it was viable for ARM architecture.

So around 2015-2016, we should've had Intel 15W APUs built on 7nm nodes, with a 2+4 design. Where they would have (i7-6600u) Skylake and Cherry Trail (x7-8750) meshed together. This would've made them more competitive against the upcoming Ryzen architectures. Even Apple wouldn't have made the leap as soon as they did. Basically it would've bought Intel more wiggle room and time to implement their new architecture (P/Very Large cores), which should have been a Server-First approach. And it would ensure Microsoft puts the work in, to have these hybrid computing supported properly in software. Especially when trying to implement it from laptops to desktops.

Now?

It's a mixture, and I actually think the Ryzen 6000 approach is better. And it pales in comparison to macOS and the M1, M1P, and M1X chips. Whilst, the server market is sliding towards AMD, it looks like it might be overtaken by ARMv9 solutions in the next 5+ years.

Wereweeb - Friday, February 18, 2022 - link

It's nonsense to think that hybrid cores are just for perf/watt. They're overall a more efficient architecture.

Thanks to Amdahl's Law it's very good to have two-four performance-focused cores to drive the main thread of the main process(es). But the rest should continue to be PPA-balanced cores - and today the Atom-derived E-cores appear to have better PPA.

Only the ARM equivalent of "Little cores", like the Cortex-A55, belong only on mobile devices. And that's because they're optimized mostly for power. Intel only has X1-like and A78-like cores (I.e. performance-focused and PPA-balanced) so they're already doing their job correctly.

And yes, AMD should indeed split their core designs into a performance-focused core

Wereweeb - Friday, February 18, 2022 - link

(continuation) and a PPA-focused core. That would allow them to boost the single-thread performance to better handle the main threads of processes, while not losing sight of the need to balance the PPA of the rest of the processor.

nandnandnand - Friday, February 18, 2022 - link

And it would allow AMD to take advantage of the optimizations being done for Intel. x86 games and applications will just "know" what to do with heterogenous microarchitectures after a while.

Kangal - Sunday, February 20, 2022 - link

First of all, you misunderstood what I wrote.

I didn't insinuate that Intel's E-cores are good/bad. I wrote that the combinations of P+E is bad for server duties (ie Hybrid Processing). Having a setup that is Homogeneous Processing makes much more sense for servers, and even ARM figured this out early. It fixes a lot of bugs, issues, and security flaws you may have on the software side... especially knowing that you're catering to multiple tasks and multiple users. And to add to this, where Hybrid Processing is great for computing where energy is a limited quantity, you don't really have this issue when it is connected to the grid. I'm not even talking about thermals, but just the access to electricity.

...now a little bit of background:

Intel has been big about recycling their cores.

From the primitive Pentiums, to more advanced Pentiums, to rebranded Celeron cores, and further miniaturised as the Atom cores. These are analogous to "very small" Cortex A53/A55/A510 cores. I think Intel has finally put that architecture out to retirement.

I think their early Core2, evolved into Core-i, and then to Core-i Sandybridge, and then morphed further for Core-i Skylake. The subsequent iterations have been a refresh on the Core-i Skylake architecture. These are analogous to "medium" Cortex A78/A710 cores. I read that it was this microarchitecture which was adopted by Intel, and then further miniaturised, which resulted in the new Intel E-cores. These E-cores are more analogous "small" Cortex A73 cores. Based on that analysis/rumour, I don't see too much improvements coming to them in the future.

Intel's latest "very large" cores are huge. The new P-cores are based on an entirely new microarchitecture. So it's understandable that they won't be too optimised, and will be leaving both performance and efficiency on the table. In subsequent evolutions it should catch up. That's been the historical precedent.

...that was a mouthful, but needed to be said first...

So with that all in context, we are in the transition phase at the moment. There's the current products of Intel servers based on their old cores (Skylake-variant), upcoming servers based on a large array of E-cores, and the premium servers using a smaller array of large P-cores. The market will still be dominated by AMD, who's "large cores" are more analogous to the Cortex-X1/X2, and they will offer a better balance between the options. In time, you will find Intel throws more money, time, effort at evolving their P-cores their bread and butter. And these advances will catch-up or surpass their solutions using E-cores, that much is obvious.

It is likely that the server market will get busy, and most or at least the lower-level stuff, will be lost to solutions built on ARM v9. So the Intel E-core servers will become obscure, and likely phased out by Intel themselves. AMD will be fighting for the top crown with their next-gen processors (Zen 4/5) using newer microarchitecture and techniques like 3D-Cache. Intel may still be able to grasp the top-end premium server market using new-generations of their P-cores. So that's what the future is shaping up to be. But forget about a combined Hybrid Processing server either from ARM, Intel, or AMD.... those will be designed for portable devices like outlined above.

GeoffreyA - Sunday, February 20, 2022 - link

"I think their early Core2, evolved into Core-i, and then to Core-i Sandybridge, and then morphed further for Core-i Skylake."

Depending on how one looks at it, the current P cores (or Golden Cove) are in an unbroken descent from the P6 microarchitecture, structures being widened and bits and pieces added over the years. Sandy Bridge, while still under this line, had some notable alterations, such as the physical register file and micro-op cache. Indeed, SB seems to have laid down how a core ought to be designed; and since then, Skylake, Sunny, and Golden Cove haven't done anything new except making everything wider.

The E-cores descend from Atom, which was an in-order design reminiscent of the P5 Pentium, with some modernisations. SMT, SSE, higher clocks, etc. Along the way, they've implemented out-of-order execution and gradually built up the strength of these cores, till, as of Gracemont, they're on par or faster than Skylake while using less power. People laugh at this idea but I believe that this lineage will someday replace the P branch. (Or perhaps an ARM or RISC-V design will supersede both.)

Qasar - Sunday, February 20, 2022 - link

" The new P-cores are based on an entirely new micro architecture "

i doubt that, IF they were an entirely new micro architecture, would they not be Gen 1, and not Gen 12 ?

Jp7188 - Thursday, February 24, 2022 - link

I don't disagree with you, but I do disagree that Intel's motive for hybrid was efficiency. They had to do it to compete. I have a 5950x and a 12900k both set to unlimited power, both on the same central water loop. In one of my workloads the 5950x uses 123watts and hover around 55C; the 12900k in the same workload uses 317watts and is constantly riding the thermal throttle at 100C.

AMD is already waaaaay more efficient without moving to hybrid. Why should they bother with the complexity?

mode_13h - Thursday, February 24, 2022 - link

> I do disagree that Intel's motive for hybrid was efficiency.

In the case of desktops, the benefit of the E-cores isn't power-efficiency, but rather area-efficiency. In the same area as 2 P-cores, Intel added 8 E-cores. Given a roughly 2:1 ratio in P-to-E performance, this should yield the performance of a 12 P-core chip at the area (i.e. price) of only 10 P-cores.

Also, if you look at the marginal power added by those E-cores, I do think there's a good case to be made that they burn less power than 4 P-cores would.
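(Editor's illustration, not part of the original comment.) The area-efficiency arithmetic above can be checked with a short sketch. It assumes the 8 P-core + 8 E-core layout of the 12900K mentioned earlier in the thread, the commenter's rough 2:1 P-to-E performance ratio, and 8 E-cores fitting in the area of 2 P-cores (4 E-cores per P-core). The function name and parameters are hypothetical, and the ratios are rough estimates rather than measurements.

```python
from fractions import Fraction


def p_core_equivalents(p_cores, e_cores, perf_ratio, e_per_p_area):
    """Return (performance, area), both expressed in units of one P-core.

    perf_ratio:   P-core performance / E-core performance (assumed ~2).
    e_per_p_area: E-cores that fit in one P-core's area (assumed 4).
    """
    perf = p_cores + Fraction(e_cores) / perf_ratio
    area = p_cores + Fraction(e_cores) / e_per_p_area
    return perf, area


# Assumed layout: 8 P-cores plus 8 E-cores.
perf, area = p_core_equivalents(p_cores=8, e_cores=8, perf_ratio=2, e_per_p_area=4)
print(f"performance ~ {perf} P-cores, area ~ {area} P-cores")
```

Under those assumptions the result matches the comment: roughly 12 P-cores' worth of throughput in about 10 P-cores' worth of area. Changing `perf_ratio` or `e_per_p_area` shows how sensitive the conclusion is to those two estimates.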

mode_13h - Thursday, February 24, 2022 - link

TLDR; it's something Intel did to provide better perf/$, if not also perf/W.