Revisiting SHIELD Tablet: Gaming Battery Life and Temperatures

by Joshua Ho & Andrei Frumusanu on August 8, 2014 8:00 AM EST

While the original SHIELD Tablet review covered most of the critical points, there wasn't enough time to investigate everything. One area where we lacked data was gaming battery life. While the two-hour figure gave a good idea of the lower bound for battery life, it didn't give a realistic estimate of typical gaming runtime.

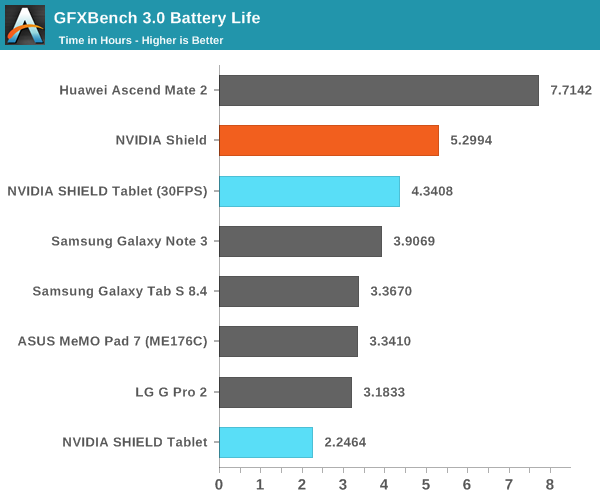

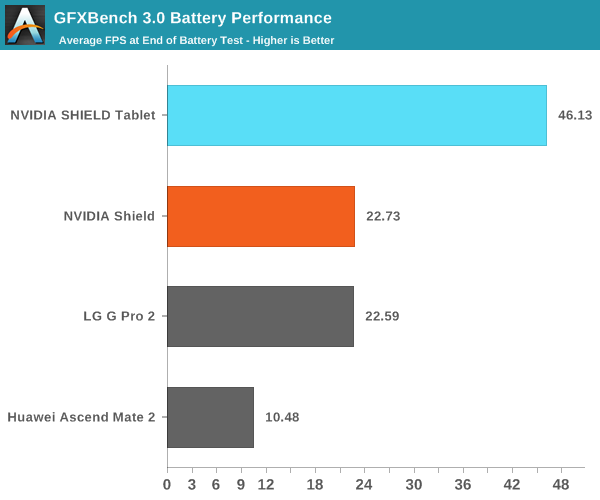

One of the first issues I tackled after the review was battery life in our T-Rex rundown with the frame rate capped at ~30 FPS. That cap is still enough to exceed the competition in performance, and it avoids any chance of throttling. It also gives a much better idea of real-world battery life, as most games shouldn't come close to stressing the Kepler GPU in Tegra K1.
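The article doesn't say exactly how the 30 FPS cap was applied to T-Rex. As a rough model of why a cap saves power, here is a minimal frame-limiter sketch. It assumes a simple game loop where `render_frame` (a hypothetical callback) does one frame's work, and any time left in the per-frame budget is spent asleep rather than rendering extra frames. Real games and benchmarks more likely rely on vsync or the platform's swap interval, so treat this only as an illustration of the idea.

```python
import time


def run_capped(render_frame, fps_cap=30.0, frames=90):
    """Call render_frame() `frames` times without exceeding fps_cap.

    Returns the achieved average frame rate.
    """
    if fps_cap <= 0 or frames <= 0:
        raise ValueError("fps_cap and frames must be positive")
    frame_budget = 1.0 / fps_cap  # seconds allotted to each frame
    start = time.perf_counter()
    next_deadline = start
    for _ in range(frames):
        render_frame()
        next_deadline += frame_budget
        remaining = next_deadline - time.perf_counter()
        if remaining > 0:
            # Idle for the rest of the budget instead of starting another frame;
            # this idle time is where a capped workload saves power.
            time.sleep(remaining)
    elapsed = time.perf_counter() - start
    return frames / elapsed
```

Because deadlines accumulate, a frame that overruns its budget is followed by a short catch-up burst with no sleeping; resetting `next_deadline` to the current time after a miss is a common alternative if bursts are undesirable.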

By capping T-Rex to 30 FPS, the SHIELD Tablet comes quite close to the battery life of SHIELD Portable while delivering significantly more performance. SHIELD Portable also needed a larger 28.8 Wh battery and a smaller, lower-power 5" display to achieve its extra runtime. It's clear that the new Kepler GPU architecture, improved CPU, and 28HPm process enable much better experiences than what we see on SHIELD Portable with Tegra 4.
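The comparison above comes down to simple arithmetic: average power draw is battery energy divided by runtime, so reaching a similar runtime with a smaller battery implies a lower average draw. A minimal sketch follows; the runtimes passed in are placeholders rather than measured results, and nominal capacity stands in for the slightly lower usable capacity.

```python
def average_power_w(battery_wh: float, runtime_h: float) -> float:
    """Mean power (W) implied by draining battery_wh watt-hours in runtime_h hours."""
    if runtime_h <= 0:
        raise ValueError("runtime_h must be positive")
    return battery_wh / runtime_h


def relative_draw(battery_a_wh: float, runtime_a_h: float,
                  battery_b_wh: float, runtime_b_h: float) -> float:
    """How many times device A's average power draw is of device B's."""
    return average_power_w(battery_a_wh, runtime_a_h) / average_power_w(battery_b_wh, runtime_b_h)
```

For example, a 28.8 Wh battery lasting a hypothetical 4 hours implies an average draw of about 7.2 W; a device with a smaller battery that matched that runtime would, by the same formula, be drawing proportionally less.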

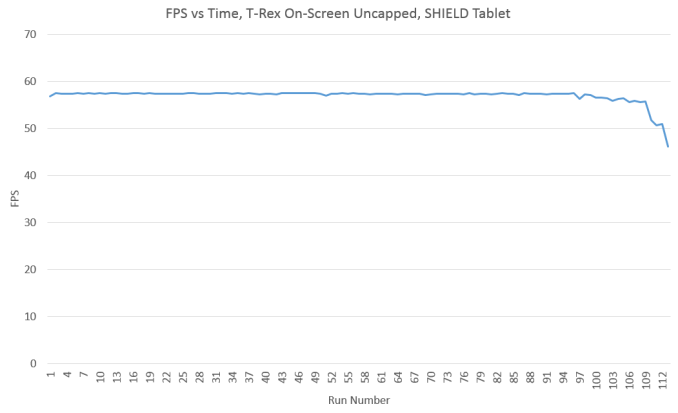

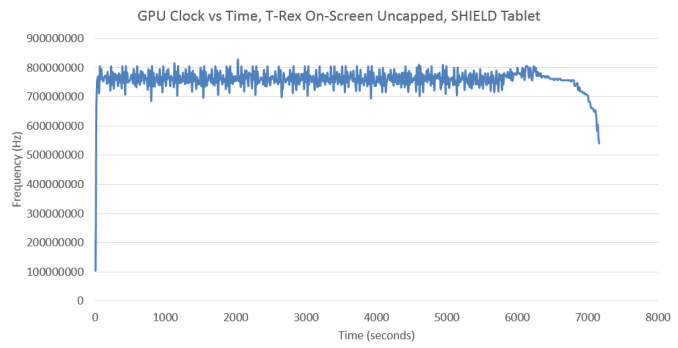

The other aspect I wanted to revisit was temperature. In the review I noted that skin temperatures were high, but I didn't know what they actually were. To get a better idea of temperatures inside the device, Andrei wrote a tool to log data from the on-device temperature sensors. Of course, the most interesting data is always generated at the extremes, so we'll look at an uncapped T-Rex rundown first.
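Andrei's tool isn't described in detail here. On Android and other Linux-based devices, one common way to read on-device sensors is the kernel's thermal sysfs interface, where each `/sys/class/thermal/thermal_zone*` directory exposes a `type` name and a `temp` reading. The sketch below assumes that layout and the documented millidegree-Celsius units; it is not the tool used for this article, and zone names, availability, and units vary by vendor kernel (some zones may also need root to read).

```python
import csv
import glob
import os
import time

THERMAL_ROOT = "/sys/class/thermal"  # standard Linux thermal sysfs location


def read_thermal_zones(root=THERMAL_ROOT):
    """Return a list of (zone_type, temp_c) for every readable thermal zone."""
    readings = []
    for zone_dir in sorted(glob.glob(os.path.join(root, "thermal_zone*"))):
        try:
            with open(os.path.join(zone_dir, "type")) as f:
                zone_type = f.read().strip()
            with open(os.path.join(zone_dir, "temp")) as f:
                raw = int(f.read().strip())
        except (OSError, ValueError):
            continue  # zone missing, unreadable, or not an integer: skip it
        # The sysfs ABI reports millidegrees Celsius; some vendor kernels deviate.
        readings.append((zone_type, raw / 1000.0))
    return readings


def log_temperatures(csv_path, samples, interval_s=1.0, root=THERMAL_ROOT):
    """Append one CSV row per zone per sample: unix_time, zone_type, temp_c."""
    with open(csv_path, "a", newline="") as f:
        writer = csv.writer(f)
        for i in range(samples):
            now = time.time()
            for zone_type, temp_c in read_thermal_zones(root):
                writer.writerow([f"{now:.1f}", zone_type, f"{temp_c:.1f}"])
            f.flush()  # keep data on disk even if the rundown ends abruptly
            if i < samples - 1:
                time.sleep(interval_s)
```

A polling interval of a second or more keeps the logger's own load small relative to the workload being measured.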

To understand the results I'm about to show, this graph is critical. Because ambient temperatures were lower (15-18C vs. 20-24C) when I ran this variant of the test, we don't see much throttling until the end of the test, where there's a dramatic drop to 46 FPS.
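One way to make "not much throttling until the end" precise is to compare each FPS sample against an early baseline. A minimal sketch, assuming evenly spaced FPS samples and treating the first few as unthrottled; the 90% threshold, baseline window, and run length are arbitrary illustrative choices, not values from this article.

```python
def throttle_onset(fps, baseline_n=10, drop_fraction=0.9, consecutive=3):
    """Return the index where FPS first stays below drop_fraction * baseline
    for `consecutive` samples in a row, or None if that never happens.

    The baseline is the mean of the first baseline_n samples, which are
    assumed to be taken before the device has heated up.
    """
    if baseline_n <= 0 or len(fps) < baseline_n:
        return None
    baseline = sum(fps[:baseline_n]) / baseline_n
    threshold = drop_fraction * baseline
    run_start, run_len = None, 0
    for i in range(baseline_n, len(fps)):
        if fps[i] < threshold:
            if run_len == 0:
                run_start = i  # first sample of a possible sustained drop
            run_len += 1
            if run_len >= consecutive:
                return run_start
        else:
            run_len = 0  # a single dip recovered; not sustained throttling
    return None
```

Multiplying the returned index by the sampling interval converts it to elapsed time into the rundown.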

As we can see, the GPU clock graph almost perfectly mirrors the downward trend in the FPS graph. It's also notable that relatively little time is spent at the full 852 MHz the graphics processor is capable of. The vast majority of the time is spent at around 750 MHz, which suggests that this test isn't pushing the GPU to its limit; the FPS graph confirms this, as the frame rate sits close to 60 FPS for nearly the entire run.
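The observation about time spent at 852 MHz versus ~750 MHz is a clock-residency measurement. Given GPU frequency samples taken at a fixed interval (how they were read on SHIELD Tablet isn't stated, so the input here is just a list), residency is the share of samples at each frequency. A sketch, with optional binning for when nearby DVFS steps should be grouped; the frequencies in the usage example are illustrative.

```python
from collections import Counter


def clock_residency(samples_mhz, bin_mhz=None):
    """Map each GPU frequency (MHz) to the fraction of samples spent there.

    Assumes samples were taken at a fixed interval, so sample share equals time share.
    If bin_mhz is given, frequencies are rounded to the nearest multiple of it.
    """
    if not samples_mhz:
        return {}
    if bin_mhz:
        keys = [round(f / bin_mhz) * bin_mhz for f in samples_mhz]
    else:
        keys = list(samples_mhz)
    counts = Counter(keys)
    total = len(keys)
    # Highest frequency first, to read like a DVFS table.
    return {freq: n / total for freq, n in sorted(counts.items(), reverse=True)}
```

For instance, `clock_residency([852, 750, 750, 750])` returns `{852: 0.25, 750: 0.75}`.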

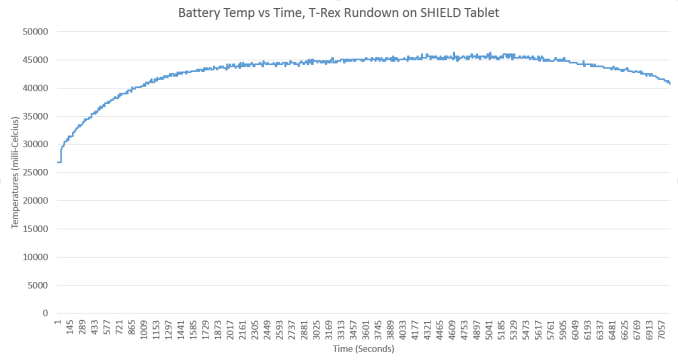

While I was unable to measure skin temperature in the initial review, battery temperature is often quite close to skin temperature. Here, we can see that battery temperature (which is usually the charger IC's reading) hits a maximum of around 45C, as I predicted. While this is perfectly acceptable to the touch, I was definitely concerned about how hot the SoC would get under such conditions.

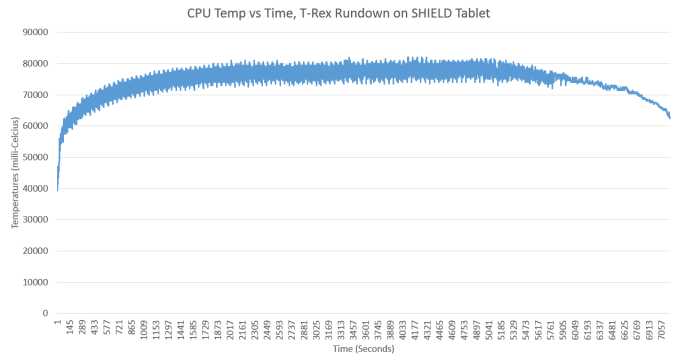

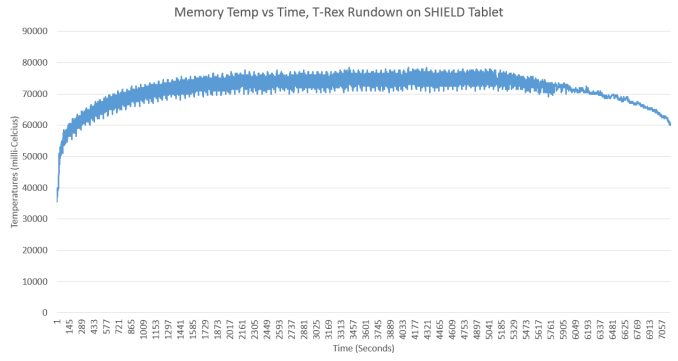

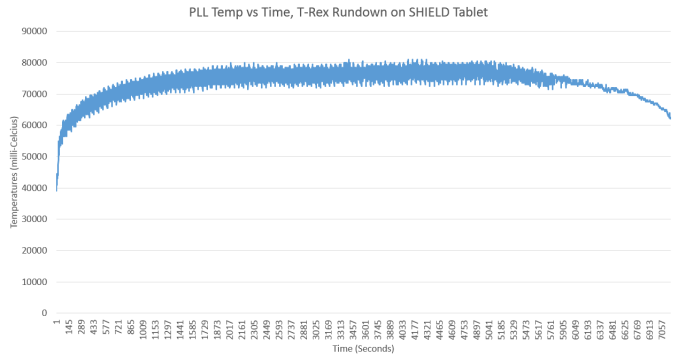

Internally, temperatures are much higher than the 45C battery temperature might suggest. We see maximum temperatures of around 85C, which is edging close to the maximum safe temperature for most CMOS logic. The RAM is also close to its maximum safe temperature. NVIDIA definitely seems to be pushing its SoC to the limit here; such temperatures would be seriously concerning in a desktop PC, although they are not out of line for a laptop.
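Peak figures like these come from reducing a whole run's log to a per-sensor maximum. A sketch of that reduction, assuming readings arrive as (sensor, temperature in C) pairs; `limit_c` is a caller-supplied ceiling because no specific number is given here, and real limits differ between the SoC, RAM, and battery.

```python
def peak_temperatures(readings, limit_c):
    """Summarize logged sensor readings.

    readings: iterable of (sensor_name, temp_c) pairs from a logging run.
    limit_c: assumed temperature ceiling to compare against.
    Returns {sensor: (peak_c, headroom_c)}, hottest sensor first.
    """
    peaks = {}
    for name, temp in readings:
        if name not in peaks or temp > peaks[name]:
            peaks[name] = temp
    ordered = sorted(peaks.items(), key=lambda kv: kv[1], reverse=True)
    # Headroom is how far each sensor's peak stayed below the ceiling.
    return {name: (peak, limit_c - peak) for name, peak in ordered}
```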

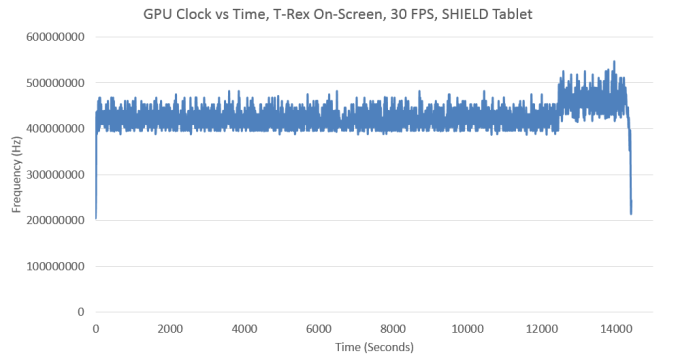

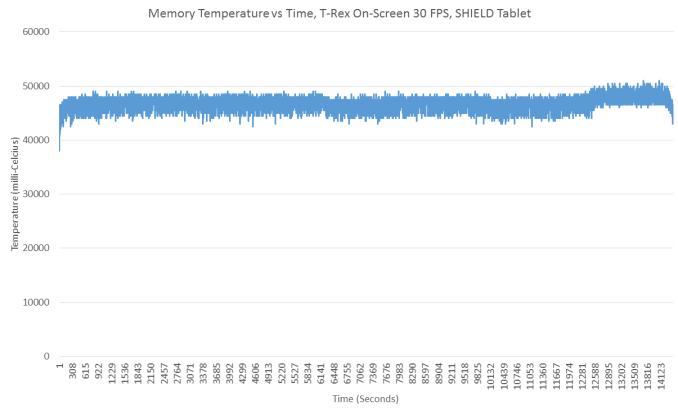

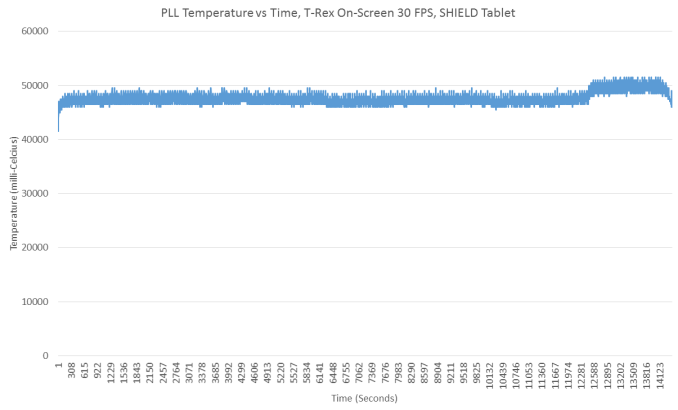

On the other hand, it's unrealistic to expect games not developed for Tegra K1 to push the GPU this hard. With this in mind, I did another run with the frame rate capped at 30 FPS. Since even the end-of-run frame rate exceeds 30 FPS, the FPS vs. time graph would be rather boring: a completely flat line pegged at 30 FPS. It's more interesting to start with the other data I gathered.

As one can see, despite delivering performance near that of the Adreno 330, the GPU in Tegra K1 sits at around 450 MHz for most of this test. There is a bump towards the end, but that may be due to the low-battery overlay, as this test ran unattended until the end.

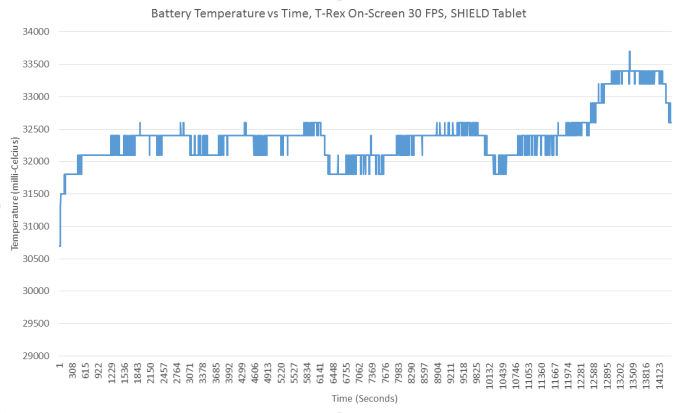

In addition to the low GPU clocks, skin temperatures never exceed 34C, which is completely acceptable. This bodes especially well for the internals, which should be much cooler than in the uncapped run.

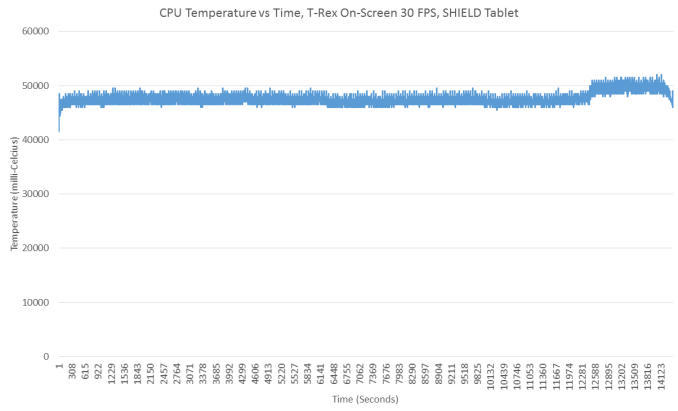

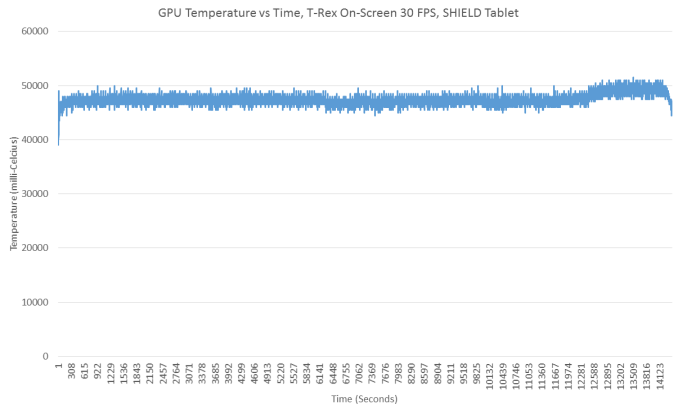

Here, we see surprisingly low temperatures. Peak temperatures are around 50C and no higher, with no real chance of throttling. Overall, this bodes quite well for Tegra K1, even if the peak temperatures in the uncapped run are a bit concerning. After all, Tegra K1 delivers immense amounts of performance when necessary, but manages to sustain low temperatures and long battery life when it isn't. It's also important to keep in mind that the Kepler GPU in Tegra K1 was designed for desktop and laptop use first. The Maxwell GPU in NVIDIA's Erista SoC is the first to be designed with mobile devices as the primary target. That's when things get really interesting.

39 Comments


gonchuki - Friday, August 8, 2014

AMD already has what you want: the Mullins APU is better than an Atom on the CPU side, and similar to the K1 on the GPU side (when compared at a 4.5W TDP, as the Shield has a higher TDP and gets as hot as pancakes). They just haven't gotten any design wins yet; that's the sad part of the story.

nathanddrews - Friday, August 8, 2014

Please link me ASAP!

kyuu - Sunday, August 10, 2014

If you look at the full Shield Tablet review, you'll see that the results from the "AMD Mullins Discovery Tablet" are the only ones from a mobile SoC to approach the K1's GPU results. But K1 has a TDP of 5-8W (11W at full load), as opposed to Mullins' 4.5W TDP. Given that, I'd say Mullins is roughly equal to the K1 on the GPU front when you look at performance per watt.

Of course, Mullins has the advantage of being an x86 design, so its GPU performance won't largely go to waste the way it does with the K1 (though at least you can use it for emulators on Android). The disadvantage, of course, is that AMD doesn't seem to have any design wins, so you can't actually buy a Mullins-equipped tablet.

That last point makes me rather irritated, since I'd love nothing more than to have a tablet of Shield's caliber/price (though preferably ~10" and with a better screen) powered by Mullins and running Win8.1.

ToTTenTranz - Friday, August 8, 2014

If it's in nVidia's interest to sell GPU IP right now (as they stated last year), why wouldn't they sell it to Intel?

Sure, there was some bad blood during the QPI class action suit, but that was over 3 years ago, and hard feelings don't tend to take precedence over good business decisions in such large companies.

Truth be told, nVidia is in the best position to offer the best possible GPU IP for Intel's SoCs, since PowerVR seems so reluctant to offer GPUs with full DX11 compliance.

nathanddrews - Friday, August 8, 2014

Let alone DX12...

It could probably go both ways, couldn't it? NVIDIA could license x86 from Intel, or Intel could use NVIDIA's GPU, if it makes business sense, that is.

frenchy_2001 - Friday, August 8, 2014

Intel will NOT license x86. This is their "crown jewels", and they battled hard enough to limit who could use it after an initial easy share (to get the architecture started).

If Intel could put their pride aside and use K1 in their Atoms or even laptop processors, this would be a killer.

Don't get me wrong, Intel HD cores have grown by leaps and bounds, but they still lag both in hardware performance (they also use much less silicon area) and particularly in driver development.

If Intel wanted to kill AMD (hint: they don't) at their own game (APU), they would license Kepler and integrate it.

Imagine an Atom or even Haswell/Broadwell (or beyond) with an integrated NVIDIA GPU?

TheinsanegamerN - Friday, August 8, 2014

Dream laptop right there. A dual-core i5 with K1's GPU, maybe running at a higher clock speed. Integrated, simple, but decently powerful without breaking the bank, with good battery life and drivers to boot.

ams23 - Friday, August 8, 2014

Josh, isn't there a feature on Shield Tablet where CPU/GPU operating frequencies get reduced when the battery life indicator is below ~20%? After all, it takes more than 110 looped runs (!) of the GFXBench 3.0 T-Rex Onscreen test to see any significant reduction in performance, and during that time, the peak frequencies and temps are pretty consistent and well maintained.

Note that if you look at the actual GFXBench 3.0 T-Rex Onscreen "long term performance" scores (which are likely based on something closer to ~30 looped runs of this benchmark), the long-term performance is consistently very high at ~56 FPS, which indicates very little throttling during the test: http://gfxbench.com/subtest_results_of_device.jsp?...

JoshHo - Friday, August 8, 2014

To my knowledge, no such mechanism is active. It may be that there are lower power draw limits as the battery approaches ~3.5V, in order to avoid tripping the failsafe mechanisms in the battery.

ams23 - Friday, August 8, 2014

If I recall correctly, an NV rep mentioned somewhere on the GeForce forums (in the Shield section) that CPU/GPU frequencies get reduced or limited once the battery life indicator drops below ~20%.

Thank you for the extended testing!