Revisiting SHIELD Tablet: Gaming Battery Life and Temperatures

by Joshua Ho & Andrei Frumusanu on August 8, 2014 8:00 AM EST

While the original SHIELD Tablet review covered most of the critical points, there wasn't enough time to investigate everything. One area that lacked data was gaming battery life. The two-hour figure gave a good idea of the lower bound for battery life, but it didn't reflect a realistic runtime for typical gaming.

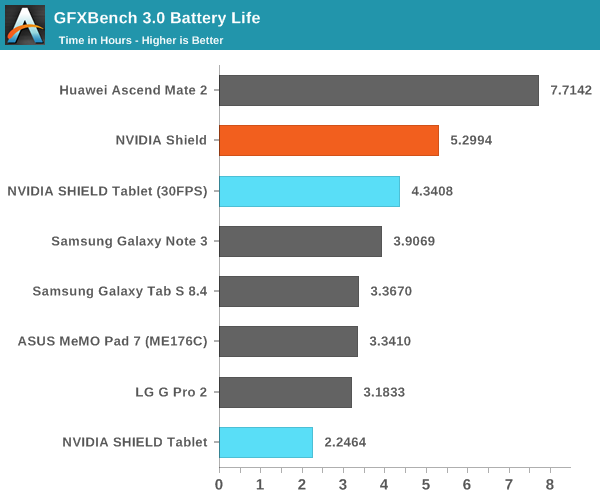

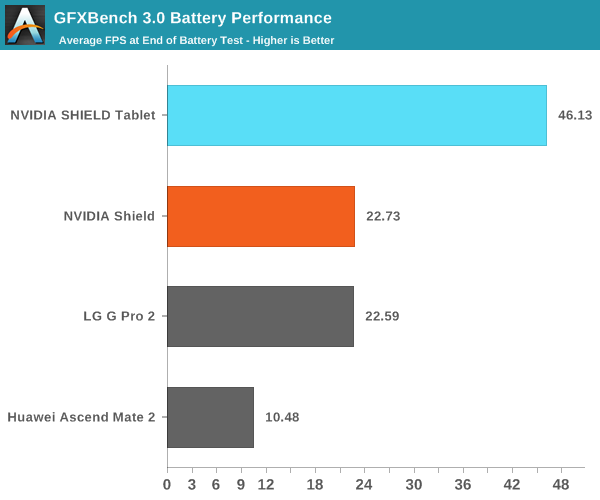

One of the first issues I tackled after the review was battery life in our T-Rex rundown with the frame rate capped to ~30 FPS. That cap still leaves performance ahead of the competition while avoiding any chance of throttling. It also gives a much better idea of real-world battery life, as most games shouldn't come close to stressing the Kepler GPU in Tegra K1.

With T-Rex capped to 30 FPS, the SHIELD Tablet comes quite close to the battery life of SHIELD Portable while delivering significantly more performance. SHIELD Portable also needed a larger 28.8 Wh battery and a smaller, lower-power 5" display to achieve its extra runtime. It's clear that the new Kepler GPU architecture, the improved CPU, and the 28HPm process enable a much better experience than SHIELD Portable with Tegra 4.
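One way to read this battery comparison is as average power draw: capacity divided by runtime. The sketch below assumes a simple constant-drain model; only the 28.8 Wh SHIELD Portable capacity comes from the text, and the runtime used in the example is a hypothetical placeholder, not one of our results.

```python
def average_power_w(capacity_wh: float, runtime_h: float) -> float:
    """Average power (W) implied by draining capacity_wh over runtime_h hours.

    Assumes a constant-drain model: the full rated capacity is used and the
    draw is averaged over the whole rundown.
    """
    if capacity_wh <= 0 or runtime_h <= 0:
        raise ValueError("capacity and runtime must be positive")
    return capacity_wh / runtime_h


def runtime_h(capacity_wh: float, power_w: float) -> float:
    """Runtime (h) that capacity_wh supports at a constant power_w draw."""
    if capacity_wh <= 0 or power_w <= 0:
        raise ValueError("capacity and power must be positive")
    return capacity_wh / power_w


# Hypothetical example: only the 28.8 Wh capacity is from the text.
portable_wh = 28.8
placeholder_runtime_h = 4.0  # placeholder, NOT a measured result
print(average_power_w(portable_wh, placeholder_runtime_h))  # 7.2
```

Under this model, two devices with similar runtimes draw average power in proportion to their battery capacities, so reaching a comparable runtime on a smaller battery implies a lower average draw.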

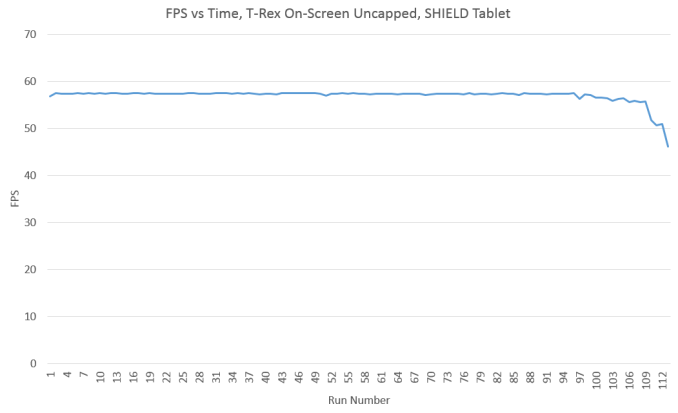

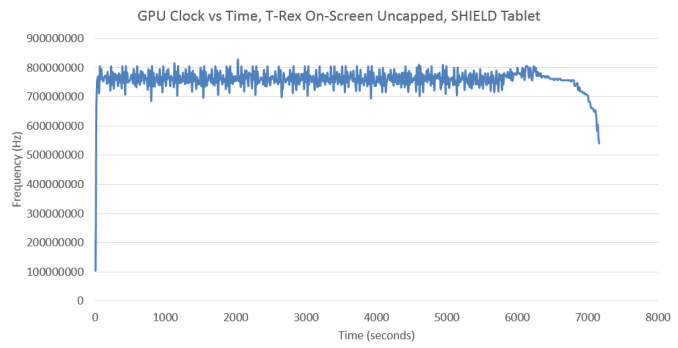

The other aspect I wanted to revisit was temperature. In the review I noted that skin temperatures were high, but I didn't know what they actually were. To get a better idea of temperatures inside the device, Andrei wrote a tool to log data from the on-device temperature sensors. Of course, the most interesting data is generated at the extremes, so we'll look at an uncapped T-Rex rundown first.
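We haven't described how Andrei's tool works, so the following is only an illustration of the general idea: poll the standard Linux thermal sysfs interface (`/sys/class/thermal/thermal_zone*/type` and `temp`), which Android devices generally expose. Which zones exist, how they're named, and whether reading them needs root all vary by device, and the millidegree scaling is an assumption that holds for most, but not all, drivers.

```python
import time
from pathlib import Path

THERMAL_ROOT = Path("/sys/class/thermal")


def read_thermal_zones(root: Path = THERMAL_ROOT) -> dict[str, float]:
    """Return {"thermal_zoneN:type": temperature in C} for each readable zone.

    Assumes `temp` holds millidegrees Celsius (the common Linux convention);
    some vendor drivers report whole degrees instead.
    """
    readings: dict[str, float] = {}
    for zone in sorted(root.glob("thermal_zone*")):
        try:
            kind = (zone / "type").read_text().strip()
            raw = int((zone / "temp").read_text().strip())
        except (OSError, ValueError):
            continue  # unreadable (permissions, offline sensor) or not an integer
        # Key by directory and type, since several zones can share a type name.
        readings[f"{zone.name}:{kind}"] = raw / 1000.0
    return readings


def log_temperatures(samples: int, interval_s: float, root: Path = THERMAL_ROOT):
    """Yield (elapsed seconds, readings) tuples, one every interval_s seconds."""
    start = time.monotonic()
    for i in range(samples):
        if i:
            time.sleep(interval_s)
        yield time.monotonic() - start, read_thermal_zones(root)
```

On a device, something like `for t, temps in log_temperatures(600, 1.0): ...` would collect roughly ten minutes of one-second samples.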

This graph is critical to understanding the results that follow. Because ambient temperatures were lower when I ran this variant of the test (15-18C vs 20-24C), we don't see much throttling until the end of the test, where there's a dramatic drop to 46 FPS.
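Finding where a drop like this begins in an FPS log can be done by comparing each sample against the early-run average. The helper below is hypothetical, and its thresholds (a 10% drop sustained over three samples) are illustrative choices, not the method used to produce our graphs. Requiring several consecutive low samples keeps a single stutter from being reported as throttling.

```python
def throttle_onset(fps: list[float], baseline_samples: int = 10,
                   drop_fraction: float = 0.9, sustain: int = 3):
    """Index of the first sample in a run of `sustain` consecutive samples
    below drop_fraction * baseline, or None if no such run exists.

    The baseline is the mean of the first baseline_samples samples.
    """
    if baseline_samples <= 0 or sustain <= 0 or len(fps) < baseline_samples:
        raise ValueError("need positive parameters and enough samples")
    baseline = sum(fps[:baseline_samples]) / baseline_samples
    threshold = drop_fraction * baseline
    run = 0
    for i, value in enumerate(fps):
        run = run + 1 if value < threshold else 0
        if run >= sustain:
            return i - sustain + 1  # start of the sustained low run
    return None
```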

As we can see, the GPU clock graph almost perfectly mirrors the downward trend in the FPS graph. It's also notable that relatively little time is spent at the GPU's full 852 MHz. The vast majority of the time is spent at around 750 MHz, which suggests that this test isn't pushing the GPU to its limit. The FPS graph confirms this, as the frame rate sits quite close to the 60 FPS display refresh limit for most of the run.
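"Relatively little time at 852 MHz" is a residency statement: the share of samples spent at each clock. Given a list of sampled GPU clocks (the sysfs node for this is device-specific and not covered here), a minimal sketch, assuming evenly spaced samples, looks like this. The clock values in the usage line are made up for illustration.

```python
from collections import Counter


def clock_residency(samples_mhz: list[int]) -> dict[int, float]:
    """Fraction of samples spent at each clock, highest clock first.

    Assumes samples are evenly spaced in time, so sample share equals time share.
    """
    if not samples_mhz:
        return {}
    total = len(samples_mhz)
    counts = Counter(samples_mhz)
    return {clock: n / total for clock, n in sorted(counts.items(), reverse=True)}


# Made-up samples, not data from our runs.
print(clock_residency([852, 852, 756, 756, 756, 756, 756, 756, 756, 648]))
```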

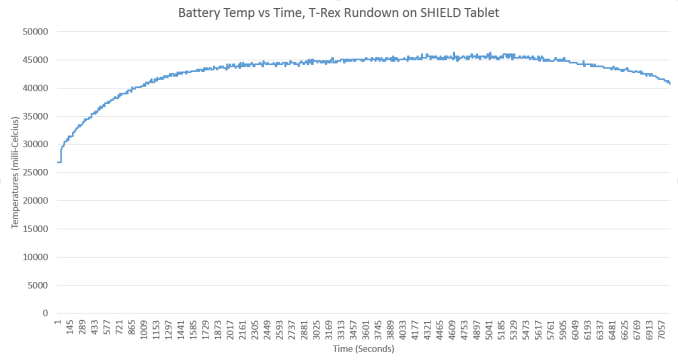

While I was unable to quantify skin temperature in the initial review, battery temperature is often quite close to skin temperature. Here, we can see that battery temperature (which in practice is usually the charger IC's temperature) hits a maximum of around 45C, as I predicted. While this is perfectly acceptable to the touch, I was concerned about how hot the SoC would get under such conditions.

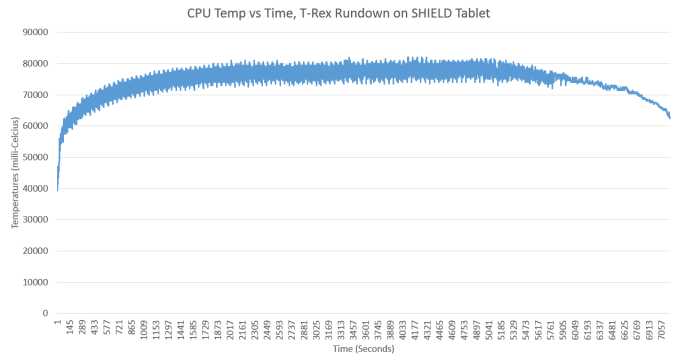

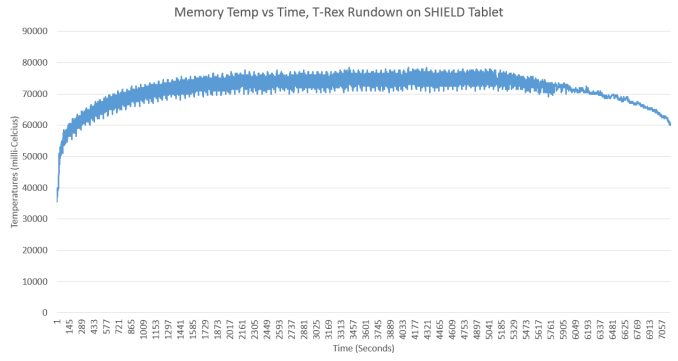

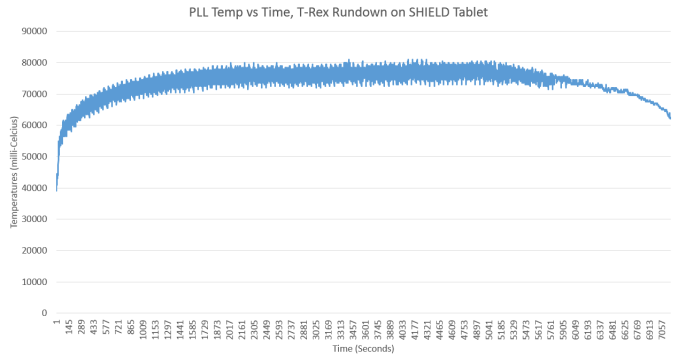

Internally, temperatures are much higher than the 45C battery reading might suggest. We see maximum temperatures of around 85C, which is edging quite close to the maximum safe temperature for most CMOS logic, and the RAM is also close to its maximum safe temperature. NVIDIA is clearly pushing its SoC to the limit here; such temperatures would be seriously concerning in a desktop PC, although not out of line for a laptop.
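Summarizing a run like this comes down to taking each sensor's peak and comparing it to a limit. In the sketch below the log is assumed to be a sequence of (elapsed seconds, {sensor name: temperature in C}) samples; the sensor names and the `limit_c` value are hypothetical parameters, since the safe limit depends on the part and its datasheet.

```python
def peak_summary(log, limit_c: float) -> dict[str, tuple[float, float]]:
    """Return {sensor: (peak temperature in C, headroom to limit_c in C)}.

    log: iterable of (elapsed_s, {sensor: temp_c}) samples. A sensor missing
    from some samples is simply skipped for those samples.
    """
    peaks: dict[str, float] = {}
    for _elapsed, readings in log:
        for sensor, temp in readings.items():
            if temp > peaks.get(sensor, float("-inf")):
                peaks[sensor] = temp
    return {s: (p, limit_c - p) for s, p in sorted(peaks.items())}


# Hypothetical sensor names and readings, with an assumed 95C limit.
example_log = [(0.0, {"cpu": 70.0, "mem": 60.0}), (1.0, {"cpu": 85.0, "mem": 72.5})]
print(peak_summary(example_log, limit_c=95.0))
```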

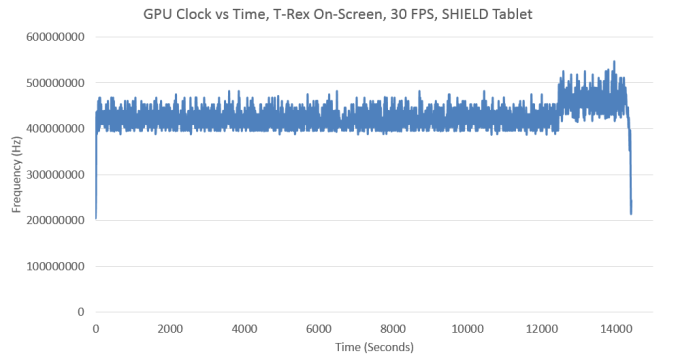

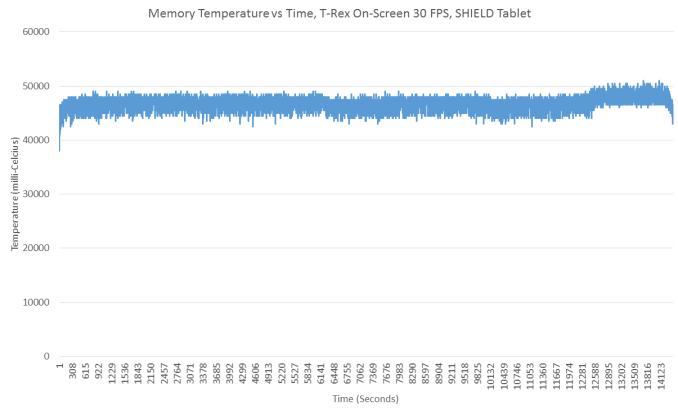

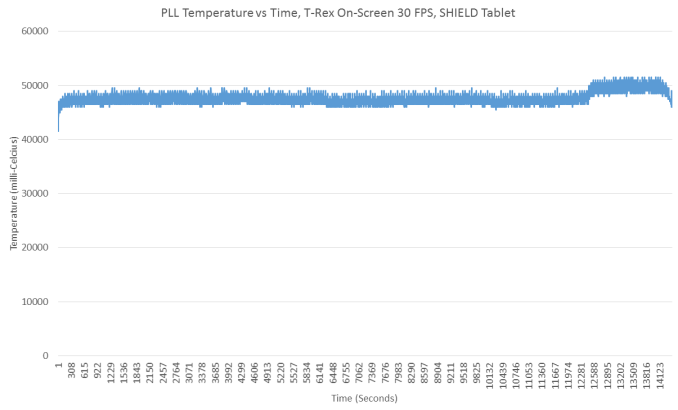

On the other hand, games not developed for Tegra K1 are unlikely to push the GPU this hard. With this in mind, I did another run with the frame rate capped to 30 FPS. Since even the throttled end-of-run frame rate in the uncapped test is above 30 FPS, the FPS vs. time graph for this run is just a flat line pegged at 30 FPS, so it's more interesting to start with the other data I've gathered.
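We don't describe here how the cap was applied, so as a generic illustration only, the sketch below is a sleep-based frame limiter: it never lets a frame start earlier than 1/30 s after the previous one. A GPU that finishes each frame early then idles for the rest of its ~33.3 ms budget, which gives the governor room to run at lower clocks. Real engines more commonly pace frames against the display's vsync; the `FrameLimiter` class is hypothetical.

```python
import time


class FrameLimiter:
    """Deadline-based frame cap: wait() returns no sooner than 1/target_fps
    after the previous frame's deadline."""

    def __init__(self, target_fps: float, clock=time.monotonic, sleep=time.sleep):
        if target_fps <= 0:
            raise ValueError("target_fps must be positive")
        self.frame_time = 1.0 / target_fps
        self.clock = clock
        self.sleep = sleep
        self.next_deadline = None

    def wait(self) -> None:
        now = self.clock()
        if self.next_deadline is None:
            self.next_deadline = now  # first frame starts immediately
        elif now < self.next_deadline:
            self.sleep(self.next_deadline - now)  # frame finished early: idle
        else:
            # Running behind: resync rather than bursting frames to catch up.
            self.next_deadline = now
        # Advancing the deadline (not "now + frame_time") avoids drift.
        self.next_deadline += self.frame_time
```

In a render loop, `limiter.wait()` would be called once per frame before starting the next one; the injectable `clock` and `sleep` arguments make the pacing testable without real delays.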

As one can see, despite delivering performance near that of the Adreno 330, the GPU in Tegra K1 sits at around 450 MHz for the majority of this test. There is a small bump towards the end, which may be due to the low-battery overlay, as this test ran unattended until the end.

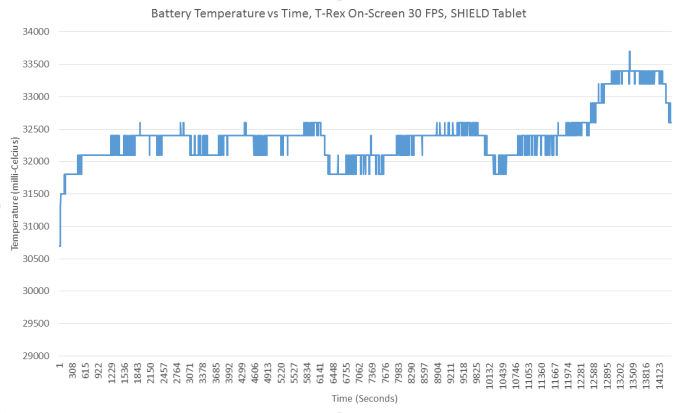

In addition to the low GPU clocks, we see that skin temperatures never exceed 34C, which is completely acceptable. This bodes especially well for the internals, which should be much cooler than in the previous run.

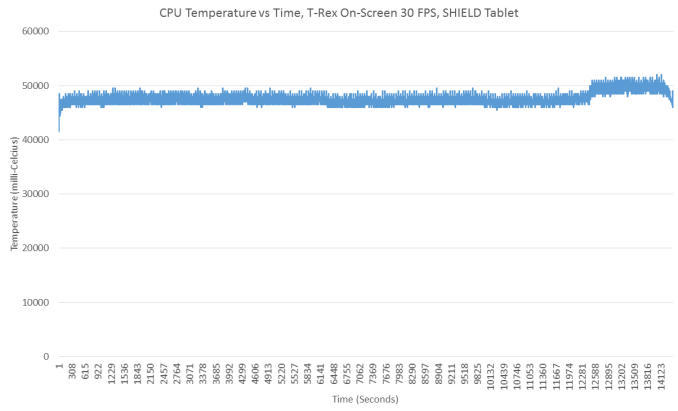

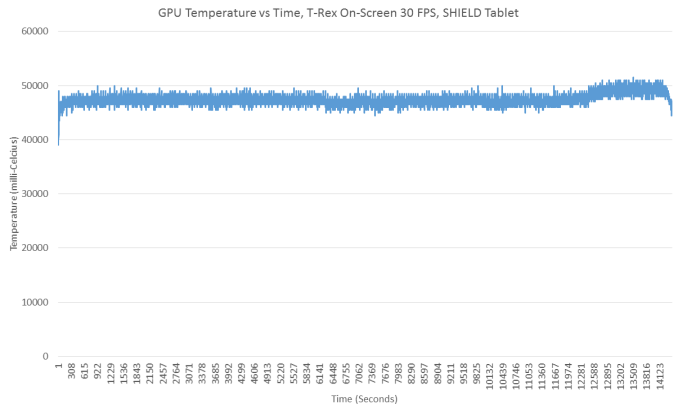

Here, we see surprisingly low temperatures: peak temperatures are around 50C, with no real chance of throttling. Overall, this bodes quite well for Tegra K1, even if the peak temperatures in the uncapped run are a bit concerning. Tegra K1 delivers immense performance when necessary, yet sustains low temperatures and long battery life when it isn't. It's also worth keeping in mind that the Kepler GPU in Tegra K1 was designed for desktop and laptop use first. The Maxwell GPU in NVIDIA's Erista SoC is the first designed with mobile devices as the primary target, and that's when things get really interesting.

39 Comments


UpSpin - Friday, August 8, 2014

I don't understand why you talk about the battery temperature when we're talking about the temperature of the die in the SoC. Depending on the thermal design, the two can be nearly independent of each other. Temperature in every region influences the leakage current, so I don't understand why you bring up this topic, especially because you probably have no idea how large that influence is, so we can only guess. The same goes for lifetime.

Just for clarification:

We talk about 'the maximum safe temperature for most CMOS logic.' not more not less.

And according to many microcontroller datasheets, the safe temperature is up to +125C.

Of course, I don't know it for HKMG.

JoshHo - Friday, August 8, 2014

It's possible to thermally isolate the battery and the board, but in most devices this is not done, as both parts tend to share a metal midframe to aid in heat dissipation. As a result, it's not possible to simply ignore battery temperature and focus on SoC temperature. At the maximum temperatures observed in this test, both the battery and the SoC are likely at their highest safe levels.

The maximum safe temperature in most datasheets for something like a CPU or GPU is the point where the device shuts off and/or resets, not a point to throttle to. While it's fully acceptable to run something like a CPU up to 100C continuously with a TjMax of 105C, the MTBF will be noticeably shorter than if the same CPU were run at 70C or less.

Exceeding TjMax is far from the only way to damage an IC with heat. Thermal cycling from high to low temperatures is also a concern, and other components on the board will have reduced lifetime from high temperatures.

I have no doubt NVIDIA has carefully throttled this SoC and ensured that the MTBF of this device is within acceptable range, but it is still quite a high temperature.

Beerfloat - Friday, August 8, 2014

Josh,

From this article:

http://www.anandtech.com/show/7457/the-radeon-r9-2...

"The 95C maximum operating temperature that most 28nm devices operate under is well understood by engineering teams, along with the impact to longevity, power consumption, and clockspeeds when operating both far from it and near it. In other words, there’s nothing inherently wrong with letting an ASIC go up to 95C so long as it’s appropriately planned for."

"AMD no longer needs to keep temperatures below 95C in order to avoid losing significant amounts of performance to leakage. From a performance perspective it has become “safe” to operate at 95C."

When talking about 85C you stated "such temperatures would be seriously concerning in a desktop PC". Are you saying Ryan is likely too optimistic about that desktop device's 95C lifespan?

JoshHo - Friday, August 8, 2014

For a desktop, it's usually fully possible to keep temperatures well below 80C by throwing more surface area and CFM at the problem. The same page also cites a cost to longevity, and when upgrade cycles for desktop parts can greatly exceed the warranty period, allowing ~95C core temperature can be much more expensive than louder fan noise or a custom cooling solution.

GC2:CS - Friday, August 8, 2014

Looking at all of this: we've got a chip that runs fast, but also consumes insane amounts of power for a mobile device. It runs so hot that it has to throttle (for whatever reason), even though it happily runs at potentially damaging temperatures, even with an integrated magnesium heat spreader, and even when running an "uncapped" test that is capped by the display refresh rate at a performance level noticeably below the off-screen tests.

So hot it can't be put into a phone (1440p phones would be happy).

Only when running at 30 FPS, and losing any significant advantage over the competition, could we say that the battery life falls into the tablet class. So what's the difference between this Tegra and an Adreno 330 given a 7W power budget and a heat spreader? Where are the comparisons? How does the iPad mini with Retina display compare, for example?

Everything I see is a chip with far higher maximum power draw than the competition, and that's all.

ams23 - Friday, August 8, 2014

If you look at the actual testing, Shield tablet is able to maintain steady temps and steady performance for > 110 (!) continuous GFXBench benchmark loops even in max performance mode, which is pretty amazing for a thin, fanless 8" tablet. So the end-of-test throttling does not appear to be related to heat; it is most likely due to the very low remaining battery capacity at the end of the test, which triggers lower CPU/GPU operating frequencies.

At a 30 FPS framerate cap, the performance of Shield tablet in the T-Rex Onscreen test is roughly 1.5x higher than iPad Air. With an uncapped framerate, the performance of Shield tablet in the T-Rex Onscreen test is > 2.5x higher than iPad Air.

sonicmerlin - Friday, August 8, 2014

Wth? I check this site almost every day and I never saw the Shield tablet review. Did it show up on the front page?

The Von Matrices - Friday, August 8, 2014

You need to look further down on the front page. Sometimes two or more articles get posted on the same day, in which case the more recent article gets the large image while the second article gets the small image below it, making it seem like that article is very old when in fact it could have been posted just a second before the top article.

przemo_li - Saturday, August 9, 2014

How do you test FPS at the end of the test? How can you make sure that CPU/GPU frequencies aren't skewed by the OS (to preserve battery life)?

In other words, how can you separate GPU performance from OS performance?