CES 2016 Roundup: Total Editor Recall

by AnandTech Staff on January 26, 2016 11:00 AM EST

Posted in: Trade Shows, CES 2016

Another year, another Consumer Electronics Show - actually, it seems that its official name is now just CES. Nonetheless, it ends up being one of the biggest technology shows of the year, if not the biggest. Covering everything from PCs, smartphones and TVs to IoT, the home and the car, CES promises to have it all. It's just a shame that the week involves so many press events and 7am-to-midnight meeting schedules that it can be difficult to take it all in, especially with 170,000 people attending. With the best of intentions, we did take some information away, and we asked each editor to describe the most memorable bits of their show.

Senior PC Editor, Ian Cutress

CES is a slightly different show for me compared to the other editors - apart from flying in from Europe, which makes the event a couple of days longer, it actually isn't my priority show; that honor goes to Computex in June. Despite this, and despite companies like ASUS cancelling their press events because everything they would have announced would come after Computex anyway, CES this year felt like my busiest event ever. Overriding announcements like AMD's Polaris are a great way to get the adrenaline going, but a couple of other announcements were super exciting too.

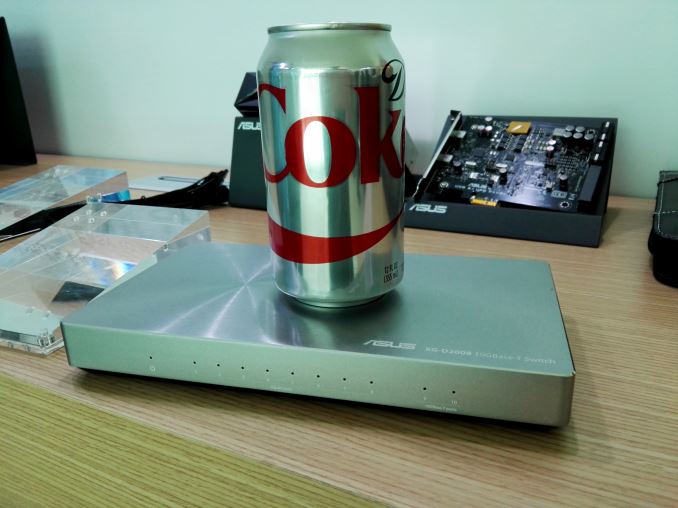

First up I want to loop back to ASUS. Despite the lack of a press event, their PR mail shot just before the event mentioned a 10G Ethernet switch being launched. At the time I mistook the announcement for a 10 port switch, when the device is actually a 2x10G + 8x1G, but even in that configuration the price of $300 is hard to ignore. Moving a workspace to 10G, especially 10GBase-T, means getting a capable switch, which at a minimum costs $800 at the moment for an 8-port number. So bringing that down to something more palatable is a good thing.

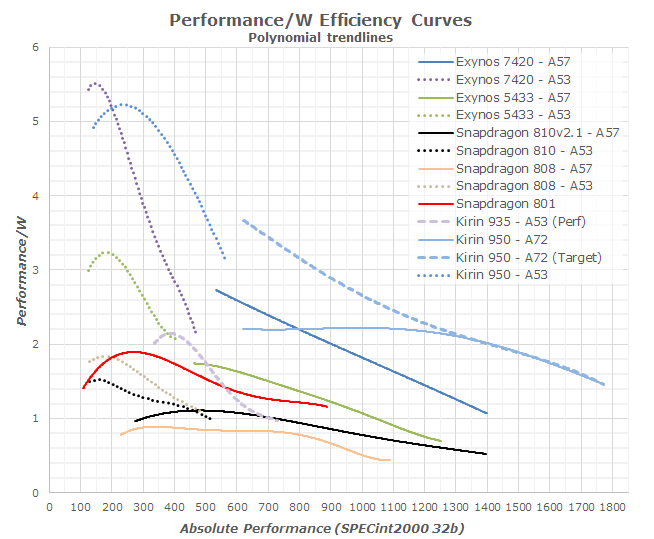

Having been to China at the end of last year to visit Huawei and talk about the Kirin 950, it was good to see the Mate 8 launched alongside Andrei's launch day review. ARM's A72 microarchitecture, the thinner, lighter and more powerful upgrade to A57, was there in the flesh, built on 16nm using TSMC's 16FF+ node. When we spoke with Huawei and HiSilicon before the launch, they were promoting some impressive numbers, especially on power efficiency, which Andrei tested and confirmed. Whereas 2015 was a relative dud for mobile on Android, 2016 should breathe a bit of life into an ever-expanding market with the introduction of A72 and 16/14nm.

Speaking of things that should come to life in 2016, Virtual Reality should be on the rise, and constant talk about VR was ever-present at the show. That covered not only the kits (I had another go at the HTC Vive with iBuyPower while HTC filmed it) but also the hardware that powers them, with AMD's Raja Koduri stating that true VR requires 16K per eye at 240 Hz. While we're far away from that right now, we saw new hardware gracing the scene, such as EVGA's VR Edition that provisions for all the USB ports needed, or full-on systems like MSI's Vortex. The Vortex was interesting because it sounds essentially like the Mac Pro: a single CPU and two GPUs in a triangular configuration, sharing a single heatsink and a single fan to cool them, in nothing bigger than a small wastepaper bin. While the design is purely aimed at the gaming crowd, a professional look paired with a Core i7 and two GTX 980 Ti graphics cards (or any upcoming 14/16nm cards), plus the Thunderbolt 3 ports it has, would make it a mini powerhouse for gaming and VR.
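To put the "16K per eye at 240 Hz" figure in perspective, here is a rough back-of-the-envelope sketch of the raw, uncompressed pixel throughput it would imply. The numbers are assumptions rather than anything stated at the show: "16K" is taken to mean 15360x8640 per eye, each pixel is taken as 24 bits, and the comparison point of 1080x1200 per eye at 90 Hz is the commonly quoted spec for current headsets such as the Vive.

```python
# Back-of-the-envelope pixel throughput for a stereo VR display.
# Assumptions (not from the article): "16K" means 15360x8640 per eye,
# and each pixel is sent uncompressed at 24 bits (3 bytes).

def raw_rate_gb_per_s(width, height, hz, eyes=2, bytes_per_pixel=3):
    """Uncompressed display data rate in gigabytes per second (1 GB = 1e9 bytes)."""
    return width * height * eyes * hz * bytes_per_pixel / 1e9

# The "true VR" target quoted above.
target = raw_rate_gb_per_s(15360, 8640, 240)

# Assumed comparison point: 1080x1200 per eye at 90 Hz.
today = raw_rate_gb_per_s(1080, 1200, 90)

print(f"16K per eye @ 240 Hz:      {target:.1f} GB/s")
print(f"1080x1200 per eye @ 90 Hz: {today:.2f} GB/s")
print(f"ratio: roughly {target / today:.0f}x")
```

Under those assumptions the target works out to roughly 191 GB/s of raw pixel data, a few hundred times what a first-generation headset needs, which is one way to see why "far away" is a fair description.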

I got super excited for a couple of other things, but perhaps not for the normal reasons. First was storage - Mushkin showed us an early PVT board of their new 2TB drive, but said it was a precursor to a 9mm 4TB model coming in at $500. That pushes pricing down to $0.122 per GB, although in that configuration, due to some RAID controllers and splitting, it takes a hit on IOPS and power consumption. Nonetheless, it seemed a good route to cheap SSD-style storage.

(Edit 2016-01-26: Mushkin has clarified their comments to us: they are aiming for below $0.25/GB, which puts the drive south of $1000. Saying $500 is more of an end goal several years down the line for this sort of capacity.)
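The per-gigabyte arithmetic behind these figures is easy to check. The sketch below is illustrative only: the function names are made up for this example, and the observation that $0.122/GB matches counting 1 TB as 1024 GB (while the "south of $1000" figure matches 1 TB as 1000 GB) is an inference from the numbers, not something Mushkin stated.

```python
# Price-per-GB arithmetic for a 4TB drive.
# Assumption: whether 1 TB is counted as 1000 GB or 1024 GB is not stated
# in the article, so both conventions are exposed via gb_per_tb.

def price_per_gb(price_usd, capacity_tb, gb_per_tb=1024):
    """Dollars per gigabyte for a drive of the given price and capacity."""
    return price_usd / (capacity_tb * gb_per_tb)

def drive_price(usd_per_gb, capacity_tb, gb_per_tb=1000):
    """Drive price implied by a dollars-per-gigabyte target."""
    return usd_per_gb * capacity_tb * gb_per_tb

# $500 for 4TB, under each convention.
print(round(price_per_gb(500, 4, gb_per_tb=1024), 3))  # binary TB
print(round(price_per_gb(500, 4, gb_per_tb=1000), 3))  # decimal TB

# Mushkin's clarified target of $0.25/GB applied to a 4TB drive.
print(drive_price(0.25, 4))
```

With binary terabytes the $500 figure comes out at about $0.122/GB, and with decimal terabytes at $0.125/GB. A $0.25/GB ceiling on a 4TB (4000GB) drive gives the $1000 upper bound mentioned in the edit.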

The other part was Cooler Master's new MasterWatt power supply with an integrated ARM controller and Bluetooth. This gave the user, either via internal USB or on the smartphone app, access to the power consumption metrics, rail loading and recording functionality that I've badly wanted in a power supply for a while. With the right command line tools and recording, I ideally want to get several of these to power my next generation of testbeds and get a metric ton more data for our reviews. I've pitched several ideas to CM about how we can use them in the future and they seem very willing to work towards a common goal, so watch this space.

My big show of the year is going to be Computex in early June, when a number of the standard tech companies have already stated they have large plans for releases. Roll on 2016...!

44 Comments

JonnyDough - Wednesday, January 27, 2016 - link

"With these things in mind, it does make sense that Samsung is pushing in a different direction. When looking at the TV market, I don’t see OLED as becoming a complete successor to LCD, while I do expect it to do so in the mobile space. TVs often have static parts of the interface, and issues like burn in and emitter aging will be difficult to control."

Wouldn't that be opposite? Phones and tablets are often used in uncontrolled environments, and have lock screens and apps that create static impressions on a display as much as any tv in my opinion. I think OLEDs could definitely penetrate the television market, and I think as a result of either they will trickle over into other markets due to cost. Unless a truly viable alternative to OLEDs can overtake these spaces, I think that continual refinements in OLED help it prove to be a constantly used and somewhat static technology. Robots are moving more and more towards organics as well - so it would make sense that in the future we borrow more and more from nature as we come to understand it.

Brandon Chester - Wednesday, January 27, 2016 - link

Relative to TVs you keep your phone screen on for a comparatively short period of time. Aging is actually less of an issue in the mobile market. Aging is the bigger issue with TV adoption, with burn in being a secondary thing which could become a larger problem with the adoption of TV boxes that get left on with a very static UI.

JonnyDough - Thursday, January 28, 2016 - link

You brought up some good points. I wonder though how many people have a phablet and watch Netflix or HBO now when on the road in a hotel bed.

Kristian Vättö - Thursday, January 28, 2016 - link

I would say the even bigger factor is the fact that TV upgrade cycles are much longer than smartphones. While the average smartphone upgrade cycle is now 2-2.5 years, most people keep their TVs for much longer than that, and expect them to function properly.

Mangemongen - Tuesday, February 2, 2016 - link

I'm writing this on my 2008, possibly 2010 Panasonic plasma TV which shows static images for hours every day, and I have seen no permanent burn in. There is merely some slight temporary burn in. Is OLED worse than modern plasmas?

JonnyDough - Wednesday, January 27, 2016 - link

What we need are monitors that have a built in GPU slot, since AMD is already helping them to enable other technologies, why not that? Swappable GPUs on a monitor, the monitors already have a PSU built in so why not? Put a more powerful swappable PSU with the monitor, a mobile like GPU, and voila. External plug and play graphics.

Klug4Pres - Wednesday, January 27, 2016 - link

"The quality of laptops released at CES were clearly a step ahead of what they have been in the past. In the past quality was secondary to quantity, but with the drop in volume, everyone has had to step up their game."

I don't really agree with this. Yes, we have seen some better screens at the premium end, but still in the sub-optimal 16:9 aspect ratio, a format that arrived in laptops mainly just to shave a few bucks off cost.

Everywhere we are seeing quality control issues, poor driver quality, woeful thermal dissipation, a pointless pursuit of ever thinner designs at the expense of keyboard quality, battery life, speaker volume etc., a move to unmaintainable soldered CPUs and RAM.

Prices are low, quality is low, volumes are getting lower. Of course, technology advances in some areas have led to improvements, e.g. Intel's focus on idle power consumption that culminated in Haswell battery-life gains.

rabidpeach - Wednesday, January 27, 2016 - link

yea? 16k per eye? is that real, or is he making up numbers to give radeon something to shoot for in future?

boeush - Wednesday, January 27, 2016 - link

There are ~6 million cones (color photoreceptors) per human eye. Each cone perceives only the R, G, or B portion (roughly speaking), making for roughly 2 megapixels per eye. Well, there's much lower resolution in R, so let's say 4 megapixels to be generous.

That means 4k, spanning the visual field, already exceeds human specs by a factor of 2, at first blush. Going from 4k to 16k boosts pixel count by a factor of 16, so we end up exceeding human photoreceptor count by a factor of 32!
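The arithmetic in this estimate can be reproduced with a short sketch. It takes the commenter's "4 megapixels per eye" figure at face value and assumes the usual 16:9 UHD pixel grids for "4k" (3840x2160) and "16k" (15360x8640); neither resolution is spelled out in the comment, so treat them as assumptions.

```python
# Compare display pixel counts with the commenter's generous estimate of
# ~4 million effective color "pixels" per eye.
# Assumption: "4k" and "16k" refer to 16:9 UHD-style grids.

EFFECTIVE_EYE_PIXELS = 4_000_000  # commenter's figure, not a measured value

RESOLUTIONS = {
    "4K": (3840, 2160),
    "16K": (15360, 8640),
}

def pixel_count(name):
    """Total pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height

def excess_over_eye(name, eye_pixels=EFFECTIVE_EYE_PIXELS):
    """How many times the display's pixel count exceeds the eye estimate."""
    return pixel_count(name) / eye_pixels

for name in RESOLUTIONS:
    print(f"{name}: {pixel_count(name):,} pixels, "
          f"{excess_over_eye(name):.1f}x the eye estimate")

print("16K / 4K pixel ratio:", pixel_count("16K") // pixel_count("4K"))
```

On those assumptions 4K lands at about 2x the eye estimate and 16K at about 33x, in line with the factors of 2 and 32 quoted in the comment.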

But there's a catch. First, human acuity exceeds the limit of color vision, because we have 20x more rods (monochromatic receptors) than cones, which provide very fine edge and texture information over which the color data from the cones is kind of smeared or interpolated by the brain. Secondly, most photoreceptors are clustered around the fovea, giving very high angular resolution over a small portion of the visual field - but we are able to rapidly move our eyeballs around (saccades), integrating and interpolating the data to stitch and synthesize together a more detailed view than would be expected from a static analysis of the optics.

In light of all of which, perhaps 16k uniformly covering the entire visual field isn't such overkill after all if the goal is the absolute maximum possible visual fidelity.

Of course, running 16k for each eye at 90+ Hz (never even mind higher framerates) would take a hell of a lot of hardware and power, even by 2020 standards. Not to mention, absolute best visual fidelity would require more detailed geometry, and more accurate physics of light, up to full-blown real-time ray-tracing with detailed materials, caustics, global illumination, and many bounces per ray - something that would require a genuine supercomputer to pull off at the necessary framerates, even given today's state of the art.

So ultimately, it's all about diminishing returns, low-hanging fruit, good-enough designs, and balancing costs against benefits. In light of which, probably 16k VR is impractical for the foreseeable future (meaning, the next couple of decades)... Personally, I'd just be happy with a 4k virtual screen, spanning let's say 80% of my visual field, and kept static in real space via accelerometer-based head-tracking (to address motion sickness) with an option to intentionally reposition it when desired - then I wouldn't need any monitors any longer, and would be able to carry my high-res screen with/on me everywhere I go...

BMNify - Wednesday, January 27, 2016 - link

"AMD's Raja Koduri stating that true VR requires 16K per eye at 240 Hz."

well, according to BBC R&D, scientific investigations found the optimal frame rate to be close to the 300 fps we were recommending back in 2008, and proved that higher frame rates dramatically reduce motion blur, which can be particularly disturbing on large modern displays.

it seems optimal to just use the official UHD2 (8k) spec with multi surround sound and the higher real frame rates of 100/150/200/250 fps for high action content as per the existing bbc/nhk papers... no real need to define UHD3 (16k) for near eye/direct retina display