AMD Beema/Mullins Architecture & Performance Preview

by Anand Lal Shimpi on April 29, 2014 12:00 AM EST

New Turbo Boost

With power in perspective, let’s talk about performance and the lineup. It never made much sense that, despite a very competitive microarchitecture, Jaguar both consumed more power and performed worse than Intel’s Silvermont. It turns out that’s largely a function of the limited time AMD’s Jaguar team had to bring the design to market. As the basis not only for AMD’s own entry-level APUs but also for the semi-custom SoC bids for consoles from Microsoft and Sony, Jaguar had to be done quickly. With Puma+ and its associated SoC designs, AMD could focus more on driving power down and introducing new features, one of which is a very intelligent clock boosting scheme analogous to Intel’s Turbo Boost.

While the bulk of Kabini and Temash silicon ran up to a set maximum frequency, Beema and Mullins SoCs can take advantage of available thermal headroom to increase their maximum frequency for a limited period of time. If we look at the tables below we’ll see this in action:

Mullins vs. Temash - Frequency Gains

| Model | TDP | Max CPU Frequency | Temash Equivalent | Temash Equivalent TDP | Temash Max CPU Frequency | Max CPU Frequency Increase |
|---|---|---|---|---|---|---|
| A10 Micro-6700T | 4.5W | 2.2GHz | A6-1450 | 8W | 1.4GHz | 57% |
| A4 Micro-6400T | 4.5W | 1.6GHz | A4-1250 | 9W | 1.0GHz | 60% |
| E1 Micro-6200T | 3.95W | 1.4GHz | A4-1200 | 3.9W | 1.0GHz | 40% |

AMD no longer reports max non-turbo frequency, unfortunately following in Intel’s footsteps (as well as the rest of the mobile players), but you can assume that base clocks are mostly unchanged from Kabini/Temash. What changes is that Beema and Mullins can now turbo up to much higher frequencies. Mullins in particular is so thermally constrained that the potential upside from frequency scaling is huge.
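The "Max Frequency Increase" columns are simply the relative change of the new part's max clock over its predecessor's max clock. A minimal Python sketch of that arithmetic, rounded to the nearest whole percent; the helper name `max_freq_increase` is hypothetical, and the clocks are copied from the CPU frequency tables in this article:

```python
def max_freq_increase(new_mhz: float, old_mhz: float) -> int:
    """Percent change of the new part's max clock relative to the old part's, rounded."""
    return round((new_mhz - old_mhz) / old_mhz * 100)


# (new part, new max MHz, older equivalent, older max MHz) from the CPU tables.
pairs = [
    ("A10 Micro-6700T", 2200, "A6-1450", 1400),
    ("A4 Micro-6400T", 1600, "A4-1250", 1000),
    ("E1 Micro-6200T", 1400, "A4-1200", 1000),
    ("E2-6110", 1500, "E2-3000", 1650),
    ("E2-6110", 1500, "E1-2500", 1400),
]

for new, new_mhz, old, old_mhz in pairs:
    # The +d format prints an explicit sign, so regressions show up as negative.
    print(f"{new} vs {old}: {max_freq_increase(new_mhz, old_mhz):+d}%")
```

The E2-6110 comparison against the E2-3000 comes out negative (about -9%) because the older dual-core part had a higher peak clock.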

Beema vs. Kabini - Frequency Gains

| Model | TDP | Max CPU Frequency | Kabini Equivalent | Kabini Equivalent TDP | Kabini Max CPU Frequency | Max CPU Frequency Increase |
|---|---|---|---|---|---|---|
| A6-6310 | 15W | 2.4GHz | A6-5200 | 25W | 2.0GHz | 20% |
| A4-6210 | 15W | 1.8GHz | A4-5000 | 15W | 1.5GHz | 20% |
| E2-6110 | 15W | 1.5GHz | E2-3000/E1-2500 | 15W | 1.65GHz/1.4GHz | -9%/7% |
| E1-6010 | 10W | 1.35GHz | E1-2100 | 9W | 1.0GHz | 35% |

The frequency gains aren't limited to the CPU; the 128 GCN cores can also run at higher speeds with Beema and Mullins:

Mullins vs. Temash - GPU Frequency Gains

| Model | TDP | Max GPU Frequency | Temash Equivalent | Temash Equivalent TDP | Temash Max GPU Frequency | Max GPU Frequency Increase |
|---|---|---|---|---|---|---|
| A10 Micro-6700T | 4.5W | 500MHz | A6-1450 | 8W | 400MHz | 25% |
| A4 Micro-6400T | 4.5W | 350MHz | A4-1250 | 9W | 300MHz | 17% |
| E1 Micro-6200T | 3.95W | 300MHz | A4-1200 | 3.9W | 225MHz | 33% |

Beema vs. Kabini - GPU Frequency Gains

| Model | TDP | Max GPU Frequency | Kabini Equivalent | Kabini Equivalent TDP | Kabini Max GPU Frequency | Max GPU Frequency Increase |
|---|---|---|---|---|---|---|
| A6-6310 | 15W | 800MHz | A6-5200 | 25W | 600MHz | 33% |
| A4-6210 | 15W | 600MHz | A4-5000 | 15W | 500MHz | 20% |
| E2-6110 | 15W | 500MHz | E2-3000/E1-2500 | 15W | 450MHz/400MHz | 11%/25% |
| E1-6010 | 10W | 350MHz | E1-2100 | 9W | 300MHz | 17% |

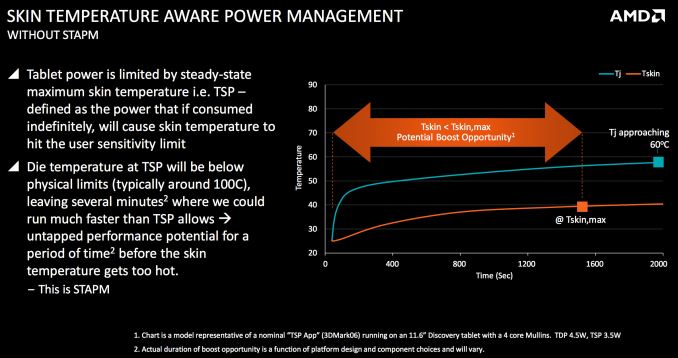

How can AMD hit significantly higher frequencies without a substantial architectural change or a new process node? By raising the max thermal operating point of the silicon. Much as Intel discovered while architecting its Bay Trail silicon, AMD realized that in ultraportable form factors it would run into a chassis temperature limit before it ever reached the maximum operating temperature of its silicon.

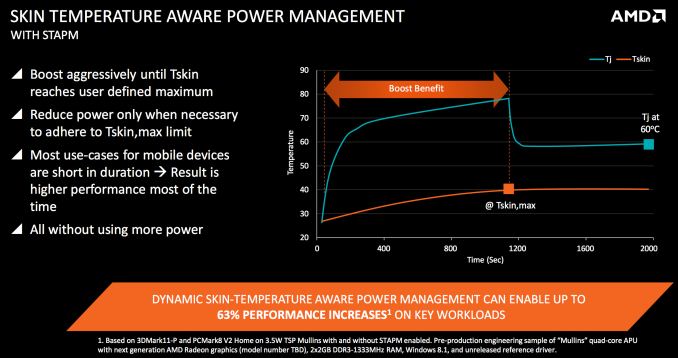

Previously, once the silicon temperature hit 60C, AMD would cap max CPU/GPU frequency. However, what really matters isn’t whether the silicon is running warm but whether the chassis is running too warm. With Beema and Mullins, AMD raises the silicon temperature limit to around 100C (still within physical limits) and instead relies on the surface temperature of the device to determine when to throttle back the CPU/GPU. In AMD’s own words, this allows the SoC to run at a much higher frequency for up to several minutes before having to scale back down. As long as the physical limits of the die aren’t exceeded, the design remains just as safe as before, but you get better performance.
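To see why gating boost on skin temperature rather than on a 60C die limit can buy minutes instead of seconds, here is a toy Python model. It is a sketch under made-up assumptions, not AMD's algorithm: the die is assumed to sit a fixed number of degrees above the chassis per watt, the chassis surface warms as a simple first-order thermal lag, and the 40C skin limit, thermal resistances, and heat capacity are all hypothetical values chosen for illustration. Only the 60C and ~100C die limits come from the text.

```python
from typing import Callable

# A policy takes (junction_c, skin_c) and returns True if boost is allowed.
Policy = Callable[[float, float], bool]


def legacy_policy(junction_c: float, skin_c: float) -> bool:
    # Kabini/Temash-style: stop boosting once the die reaches ~60C.
    return junction_c < 60.0


def skin_aware_policy(junction_c: float, skin_c: float) -> bool:
    # STAPM-style: respect the ~100C die limit, but throttle on skin temperature.
    # The 40C skin limit is a hypothetical value.
    return junction_c < 100.0 and skin_c < 40.0


def boost_seconds(policy: Policy, p_boost_w: float = 4.5, r_die: float = 7.0,
                  r_skin: float = 5.0, c_skin: float = 30.0,
                  ambient_c: float = 25.0, horizon_s: int = 600) -> int:
    """Seconds of continuous boost from a cold start before the policy throttles.

    All thermal constants are hypothetical: r_die (C/W) is how far the die sits
    above the chassis surface per watt; r_skin (C/W) and c_skin (J/C) describe
    how the chassis sheds and stores heat.
    """
    skin = ambient_c
    for t in range(horizon_s):
        junction = skin + p_boost_w * r_die
        if not policy(junction, skin):
            return t
        # One 1-second forward-Euler step of the chassis heating up.
        skin += (p_boost_w - (skin - ambient_c) / r_skin) / c_skin
    return horizon_s


print("legacy:", boost_seconds(legacy_policy), "s")
print("skin-aware:", boost_seconds(skin_aware_policy), "s")
```

With these invented constants the legacy policy gives up after roughly 26 simulated seconds, while the skin-aware policy keeps boosting for close to three minutes. Real durations depend entirely on the device; the point is only that the chassis heats far more slowly than the die.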

The real trick is that AMD enables this new chassis-temperature-governed boost (called Skin Temperature Aware Power Management, or STAPM) without requiring any additional sensors or hardware from the OEM. Instead, AMD gives the OEM tools to properly map SoC temperature to chassis skin temperature. My guess is that the OEM runs a set workload, measuring external chassis temperature while correlating that data with SoC temperature. This mapping will vary from device to device, and obviously won’t be as accurate as having a thermal sensor on the chassis itself, but it’s good enough to get the job done.
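The guessed OEM calibration step can be sketched as a simple curve fit: log SoC temperature and measured skin temperature during a fixed workload, then fit a mapping that firmware could use in place of a chassis sensor. This is a hypothetical illustration; AMD hasn't said what form its mapping takes, so the straight-line least-squares fit, the function names, and the calibration readings below are all assumptions.

```python
def fit_skin_model(soc_temps_c: list[float],
                   skin_temps_c: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of skin_temp ~ slope * soc_temp + intercept."""
    n = len(soc_temps_c)
    mean_x = sum(soc_temps_c) / n
    mean_y = sum(skin_temps_c) / n
    sxx = sum((x - mean_x) ** 2 for x in soc_temps_c)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(soc_temps_c, skin_temps_c))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def estimate_skin(soc_temp_c: float, slope: float, intercept: float) -> float:
    """Estimate chassis surface temperature from the on-die sensor alone."""
    return slope * soc_temp_c + intercept


# Hypothetical steady-state readings logged during an OEM calibration run.
soc_readings = [45.0, 55.0, 65.0, 75.0, 85.0]
skin_readings = [30.0, 33.0, 36.0, 39.0, 42.0]

slope, intercept = fit_skin_model(soc_readings, skin_readings)
print(f"skin ~ {slope:.2f} * soc + {intercept:.1f}")
print(f"estimated skin at 90C die: {estimate_skin(90.0, slope, intercept):.1f}C")
```

A straight line over steady-state readings is the simplest possible mapping; a real implementation would likely also have to account for how slowly the chassis follows the die.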

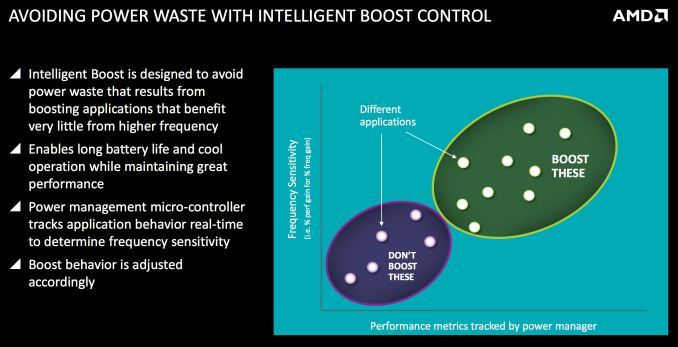

AMD claims it’s intelligent about when to boost. The updated power management unit looks at how a given workload responds to frequency scaling and only boosts when the workload will actually benefit. This evaluation happens in hardware at the instruction level, not at the OS/software layer.
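AMD hasn't said how the power management unit judges whether a workload benefits from boost, and the real check happens in hardware. As a purely illustrative software analogy, one could compare the relative throughput gain to the relative clock gain: clock-bound code scales almost one-for-one, while memory-bound code barely moves. The function names, the 0.5 threshold, and the sample measurements are hypothetical.

```python
def frequency_sensitivity(perf_low: float, perf_high: float,
                          f_low_mhz: float, f_high_mhz: float) -> float:
    """Fraction of a clock increase that shows up as extra throughput.

    1.0 means perfectly clock-bound; values near 0 mean the workload is limited
    elsewhere (e.g. by memory), so boosting would mostly burn power.
    """
    perf_gain = (perf_high - perf_low) / perf_low
    freq_gain = (f_high_mhz - f_low_mhz) / f_low_mhz
    return perf_gain / freq_gain


def should_boost(sensitivity: float, threshold: float = 0.5) -> bool:
    # Hypothetical cutoff: boost only if at least half the clock gain
    # turns into extra performance.
    return sensitivity >= threshold


# Hypothetical samples: throughput measured at 1000MHz and again at 1200MHz.
compute_bound = frequency_sensitivity(100.0, 118.0, 1000.0, 1200.0)  # ~0.9
memory_bound = frequency_sensitivity(100.0, 103.0, 1000.0, 1200.0)   # ~0.15
print(should_boost(compute_bound), should_boost(memory_bound))
```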

The Lineup

Apart from consolidating the Kabini family from five parts into four, AMD kept the same number of SKUs as last year, with updated specs for Beema and Mullins:

AMD Mullins vs. Temash APUs

| Model | Radeon Brand | SDP | TDP | CPU Cores | CPU Clock Speed (Max) | L2 Cache | Radeon Cores | GPU Clock Speed (Max) | DDR3 Speed (Max) |
|---|---|---|---|---|---|---|---|---|---|
| A10 Micro-6700T | R6 | 2.8W | 4.5W | 4 | 2.2GHz | 2MB | 128 | 500MHz | 1333 |
| A4 Micro-6400T | R3 | 2.8W | 4.5W | 4 | 1.6GHz | 2MB | 128 | 350MHz | 1333 |
| E1 Micro-6200T | R2 | 2.8W | 3.95W | 2 | 1.4GHz | 1MB | 128 | 300MHz | 1066 |
| A6-1450 | HD 8250 | N/A | 8W | 4 | 1.4GHz | 2MB | 128 | 400MHz | 1066 |
| A4-1250 | HD 8210 | N/A | 9W | 2 | 1.0GHz | 1MB | 128 | 300MHz | 1333 |
| A4-1200 | HD 8180 | N/A | 3.9W | 2 | 1.0GHz | 1MB | 128 | 225MHz | 1066 |

The Mullins parts get a Micro prefix in front of their model number, signaling that they are aimed at tablets. AMD also supplies both TDP and Scenario Design Power (SDP) values for Mullins SoCs, similar to what Intel does with Bay Trail. SDP is determined using more tablet-like (read: lighter weight) workloads.

With the exception of the entry level E1 Micro-6200T, TDPs go down substantially with Mullins vs. Temash. Cache sizes and GPU core counts remain unchanged, but CPU frequencies go up across the board, and max supported DRAM frequency goes up on the A10 Micro-6700T.

AMD Beema vs. Kabini APUs

| Model | Radeon Brand | SDP | TDP | CPU Cores | CPU Clock Speed (Max) | L2 Cache | Radeon Cores | GPU Clock Speed (Max) | DDR3 Speed (Max) |
|---|---|---|---|---|---|---|---|---|---|
| A6-6310 | R4 | N/A | 15W | 4 | 2.4GHz | 2MB | 128 | 800MHz | 1866 |
| A4-6210 | R3 | N/A | 15W | 4 | 1.8GHz | 2MB | 128 | 600MHz | 1600 |
| E2-6110 | R2 | N/A | 15W | 4 | 1.5GHz | 2MB | 128 | 500MHz | 1600 |
| E1-6010 | R2 | N/A | 10W | 2 | 1.35GHz | 1MB | 128 | 350MHz | 1333 |
| A6-5200 | HD 8400 | N/A | 25W | 4 | 2.0GHz | 2MB | 128 | 600MHz | 1600 |
| A4-5000 | HD 8330 | N/A | 15W | 4 | 1.5GHz | 2MB | 128 | 500MHz | 1600 |
| E2-3000 | HD 8280 | N/A | 15W | 2 | 1.65GHz | 1MB | 128 | 450MHz | 1600 |
| E1-2500 | HD 8240 | N/A | 15W | 2 | 1.4GHz | 1MB | 128 | 400MHz | 1333 |
| E1-2100 | HD 8210 | N/A | 9W | 2 | 1.0GHz | 1MB | 128 | 300MHz | 1333 |

Beema retires Kabini’s lone 25W TDP part; everything is now at 15W or less. The lowest end Beema carries a slightly higher TDP than the entry level Kabini (10W vs. 9W), but otherwise there’s more performance at the same TDP across the board. Beema parts don’t come with an SDP rating as they’re designed for more traditional ultrathin notebook form factors (presumably running more traditional, read: heavier, workloads).

TrustZone

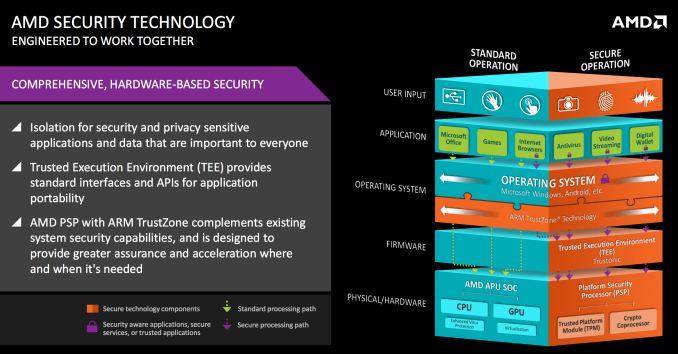

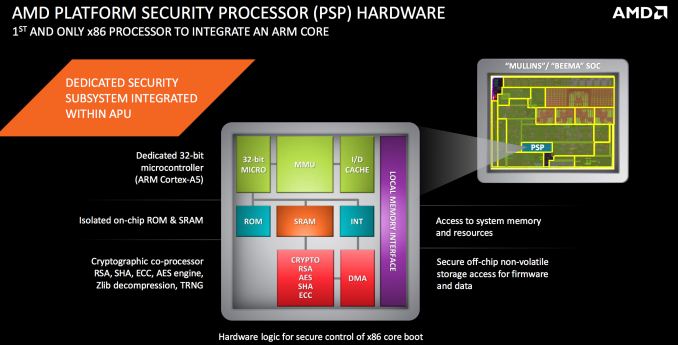

In 2012 AMD announced that it had signed a license agreement with ARM. Although we’ve since seen AMD announce ARM-based Opteron silicon, back then the only official commitment was to ship an x86 SoC in 2013 with an integrated ARM Cortex A5 for TrustZone execution. AMD needed a hardware security platform on its SoCs to remain competitive, and it didn’t have one of its own (Intel’s TXT is proprietary and not part of what’s licensed to AMD), so ARM’s TrustZone technology was an easy target. To support TrustZone you need an ARM core, and thus AMD committed to integrating a Cortex A5 as a dedicated security processor on some of its 2013 APUs.

Indeed, both Kabini and Temash had a Cortex A5 on die; it was simply never enabled due to time constraints. With Beema and Mullins the core is fully functional in what AMD calls its Platform Security Processor (PSP). AMD will likely publish guidelines on how developers can access and use the PSP, and I’d also expect to see it make its way into other AMD APUs moving forward.

82 Comments


Nintendo Maniac 64 - Tuesday, April 29, 2014

Hmmm, sounds like the AMD equivalent of an Intel "tick", especially considering that the IPC between Puma+ and Jaguar is unchanged.

Interestingly enough, this would mean that the PS4 and Xbone could use Puma+ cores in the future (with turbo disabled obviously).

nevertell - Tuesday, April 29, 2014

Why would they need to disable turbo? I don't believe anyone is pushing the CPU to its limits just to hit an fps cap or relying on raw performance for timing, whereas turbo could improve some load times or improve performance during context switching.

mwarner1 - Tuesday, April 29, 2014

Consoles have fixed performance hardware to prevent games & applications performing differently on different hardware revisions. If you bought a PS4 today and then next week a new version was released (but likely not announced) that made games smoother / more playable, then you would have the right to be annoyed.

Havor - Tuesday, April 29, 2014

No, the main reason for fixed performance hardware is that developers don't have to add code or scalable textures to adjust for performance differences. And thus they have more efficient single-spec code that does not have to adjust to the hardware spec.

nathanddrews - Tuesday, April 29, 2014

Yeah, I highly doubt they'll switch architectures since it has never happened before. Power savings and console redesigns come from shrinks and on-die packaging.

Samus - Wednesday, April 30, 2014

There's nothing stopping Sony or Microsoft from launching a "performance edition" PS4 or XBOX One with a hardware bump that simply added antialiasing, etc, to games.

This has already been done over the years with Nintendo offering the 4MB RAMBUS upgrade for the N64, and various performance storage options for XBOX 360/PS3 to assist load times of disc-based games. The SSD-edition of the 360 can load games/levels virtually instantly compared to running from disc or disk.

mfoley93 - Wednesday, April 30, 2014

These aren't really higher performance though, just lower power. They could just lower the clock to offset whatever slight performance gains there are to equal the launch products.

nathanddrews - Wednesday, April 30, 2014

1. When Microsoft or Sony want to increase performance, they only do so via software updates that don't destabilize the platform as a whole. Neither can afford to break millions of consoles with a bad update or segregate the community into two camps. On the other hand, if developers of individual games find a way to improve framerates or AA, they can submit updates for download - but only after it is tested by the console manufacturers.

2. I have the 4MB upgrade for my N64, only TWO games required it, a very small percentage of N64 games supported it, and even fewer truly benefited from it. Its mild success was due entirely to ZMM, DK, and PD, but Nintendo hasn't tried anything like it since. (Lest we forget the 64DD...)

3. Only Sony lets you install any drive you want. Most reviews from those that have upgraded to SSDs say it just isn't worth it. It's a consumer option, not something Sony changes at the platform level. The games still run at the same speed with the same textures.

4. There is no consumer "SSD Edition" Xbox 360 and they won't let you install one (officially). Are you referring to the 4GB Slim? That's not an SSD and most 360 games are too big to install onto it.

5. I have yet to see a console SSD upgrade result in anything instantaneously... except regret. :D

Kevin G - Wednesday, April 30, 2014

Consoles are to be 'fixed spec' so that game developers know exactly what to expect in terms of hardware. The lone exception has been storage capacity. The N64 memory expansion is an excellent example of why developers aim for the lower guaranteed spec: only three games required it with a handful of games that'd use it if present.

Both MS and Sony could come out with a hardware revision that does a bit more outside of gaming without impacting game developers. For example, MS could release an Xbox One with a digital tuner + DVR hardware. Such a change would have no impact to the gaming side of things. Ditto if MS or Sony were to add backwards compatibility via hardware: it'd be unavailable to use in an Xbox One or PS4 game.

Arbee - Thursday, May 1, 2014

There was a late revision of the original PlayStation where the GPU got significantly faster for some operations, which resulted in higher frame rates in some games. (This was when the debug units switched from blue to green, in order to differentiate).