Intel: By 2020 The Size of Meaningful Compute Approaches Zero

by Anand Lal Shimpi on September 10, 2012 1:29 PM EST. Posted in: CPUs, Intel, Trade Shows, IDF 2012

Intel Labs is a super interesting arm of the company, responsible for developing technologies that would potentially go into products 5 - 10 years down the road. It's from the labs that we got things like BBUL packaging (still not deployed but neat nonetheless) and the first 10GHz x86 ALUs.

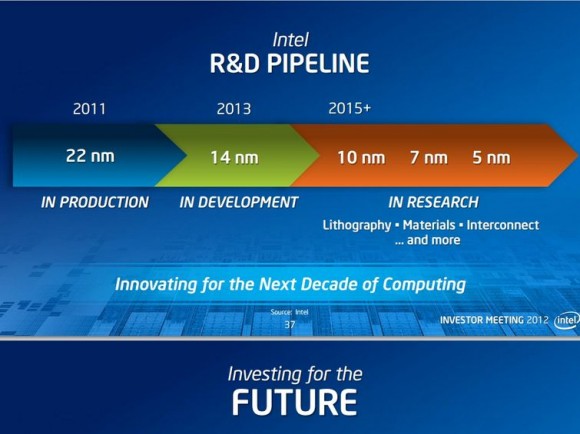

Today, on the day before IDF, Intel put together a collection of demos to give us insight into what the labs folks are working on. We're still an hour away from actual demos but Intel did share a super interesting slide, pictured above.

Moore's Law and the (almost) ever shrinking transistor give us one of two things: more complexity/performance/features in a given die area, or the same performance in a steadily decreasing die area.

Exploiting the latter benefit, we have the realization above. By 2020, which assuming a 2 year cadence between process nodes means we're talking about silicon built on a 5nm process, Intel expects that the size of "meaningful compute" approaches zero. It's not totally clear what Intel defines as meaningful compute, but if you keep in mind that a single AMD Jaguar core measures < 3mm^2 at 28nm, it's not a stretch to believe that we'd have quite a bit of compute available nearly for free at 5nm. Assuming perfect scaling (not going to happen), where die area halves at each of the four node transitions between 22nm and 5nm, a 100mm^2 die at 22nm would measure only 6.25mm^2 at 5nm. Even if you don't get perfect scaling, Moore's Law can give us the performance we get in a smartphone today in a package that's small enough and cheap enough to make that we can integrate it anywhere (smart-tables anyone?).
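The perfect-scaling figure can be checked with a short sketch. The intermediate node names (14nm, 10nm, 7nm) and the "area halves per node" rule are assumptions used here to reproduce the 6.25mm^2 number; the article itself only names 22nm and 5nm. The second function shows an alternative reading, where area scales with the square of the node name, which lands in the same ballpark.

```python
# Assumed node sequence for a 2-year cadence starting at 22nm in 2012.
# Only 22nm and 5nm come from the article; the rest are illustrative.
NODES_NM = [22, 14, 10, 7, 5]
START_YEAR = 2012
CADENCE_YEARS = 2


def area_by_halving(area_mm2, transitions):
    """Ideal scaling: die area halves at every node transition."""
    return area_mm2 / (2 ** transitions)


def area_by_node_ratio(area_mm2, from_nm, to_nm):
    """Alternative reading: area scales with the square of the node name."""
    return area_mm2 * (to_nm / from_nm) ** 2


transitions = len(NODES_NM) - 1
final_year = START_YEAR + CADENCE_YEARS * transitions
halved_area = area_by_halving(100.0, transitions)
ratio_area = area_by_node_ratio(100.0, NODES_NM[0], NODES_NM[-1])

print(f"{NODES_NM[-1]}nm arrives around {final_year}")
print(f"halving per node: {halved_area:.2f} mm^2")
print(f"squared node ratio: {ratio_area:.2f} mm^2")
```

Either way, a 100mm^2 design ends up at roughly 5 - 6mm^2 under ideal scaling, which real processes will not fully deliver.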

The idea of ubiquitous compute has been around for quite a while, but it's still mindblowing to think about what can happen once meaningful compute is really almost free. Remember that there's a direct relationship between the size of silicon and its cost. Today's 80 - 120mm^2 smartphone SoCs cost anywhere from $10 - $30 to integrate into a phone. If silicon with that level of complexity can be reduced in size to the point where we're looking at low single digit costs, the potential impact to new markets will be huge.
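As a rough illustration of the size-to-cost relationship, the sketch below scales today's SoC cost range by the same 16x area reduction used in the perfect-scaling example. It assumes cost is strictly proportional to die area, which is a simplification: it ignores rising wafer costs at newer nodes, yield, packaging and test. The function name and the shrink factor are illustrative choices, not Intel's figures.

```python
# Assumption: cost scales linearly with die area, nothing else changes.
AREA_SHRINK = 16  # 100mm^2 -> 6.25mm^2 under ideal scaling from 22nm to 5nm


def scaled_cost(cost_usd, area_shrink):
    """Cost of the same design after its area shrinks by `area_shrink`x."""
    return cost_usd / area_shrink


low = scaled_cost(10.0, AREA_SHRINK)   # today's low-end SoC cost
high = scaled_cost(30.0, AREA_SHRINK)  # today's high-end SoC cost

print(f"${low:.2f} - ${high:.2f} per chip")
```

Even if real-world scaling and cost trends are several times worse than this idealized case, the result stays within the low single digit range mentioned above.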

We saw what affordable compute did for smartphones. The next question is what even more affordable compute can do for other, undiscovered markets. A while ago someone smart told me that at some point displays will become cheap enough that many surfaces we take for granted will start integrating them (e.g. bathroom mirrors that double as displays). Combine that with cheap compute that we can integrate everywhere as well and we have a recipe for the future.

37 Comments

Zingam - Monday, September 10, 2012 - link

So if 14 nm comes on time, what then? It appears that development will slow to a crawl. I have no idea how it will impact CPU development, but shrinking by just 2-4 nm every 2-3 years for the next 10 years won't bring much progress.

ezorb - Monday, September 10, 2012 - link

Think in terms of percentage rather than absolute numbers; this will serve you better in most things in life.

menting - Monday, September 10, 2012 - link

I would think that shrinking 2-4nm every 2-3 years would be huge progress, because the percentage shrink will be greater and greater, and we're already hitting the limits of lithography.

Death666Angel - Monday, September 10, 2012 - link

Don't look at the absolute numbers, put them in relation to one another and the 2-4nm jumps look quite good.

130 nm was introduced in 2000 and in 2012 we had 32 nm (going by a table on Wikipedia): 4.0625:1

We have 22 nm in 2012 and will have 5 nm in 2022 (approx.): 4.4:1

Nothing wrong with that in my book. :-)

bbordwell - Monday, September 10, 2012 - link

It would be the same level of progress as we have seen over the past decade. Process shrinks have always brought about a 30% decrease in die size for the same chip, and this is what Intel is still aiming for in its future processes. The only question is whether or not Intel will be able to keep up their time frame for shrinks as they are really going to start pushing the laws of physics soon.

Arbie - Tuesday, September 11, 2012 - link

"... they are really going to start pushing the laws of physics soon."

I remember hearing the same thing in the mid-1980s. True then, true now.

JKflipflop98 - Tuesday, September 11, 2012 - link

We've been pushing the laws of physics for years and years. This is no different.

Jaybus - Thursday, September 13, 2012 - link

It is different. At 14 nm, fewer than 30 Si atoms span the channel and quantum tunneling is significant. It will be far worse at each successive node. Ultimately, at about 2.5 nm, the channel will be spanned by just 5 Si atoms and the Heisenberg uncertainty principle tells us that it will no longer be possible to know that an electron is contained within a single trace. So I think we are witnessing the end of Moore's law. First, the rate at which the number of transistors doubles will decrease over the next 10 years and then it will hit zero. Why do you think Intel, IBM, and others are investing in research into moving from electronics to photonics?

sefsefsefsef - Monday, September 10, 2012 - link

Each of those jumps in the figure above reduces the gate size by ~30%, which is a linear measurement. The trick is that CPUs are 2-dimensional designs (ignore the 3rd dimension for now). This means you get that 30% reduction in each of 2 dimensions (because they aren't only reducing gate length when they make a smaller node), which means that your transistor density (in terms of transistors/area) approximately doubles each time you reduce transistor gate length by ~30%.

Arbie - Tuesday, September 11, 2012 - link

I agree. We're talking nanometers for chrissake. That's so small you can't even see them.